Aerial photographic and satellite image interpretation

Aerial photographic and satellite image interpretation, or just image interpretation when in context, is the act of examining photographic images, particularly airborne and spaceborne, to identify objects and judge their significance.[1] It is commonly used in military aerial reconnaissance, using photographs taken from reconnaissance aircraft and reconnaissance satellites.

The principles of image interpretation have been developed empirically for more than 150 years. The most basic are the elements of image interpretation: location, size, shape, shadow, tone/color, texture, pattern, height/depth and site/situation/association. They are routinely used when interpreting aerial photos and analyzing photo-like images. An experienced image interpreter uses many of these elements intuitively. However, a beginner may not only have to consciously evaluate an unknown object according to these elements, but also analyze each element's significance in relation to the image's other objects and phenomena.

Angle of view

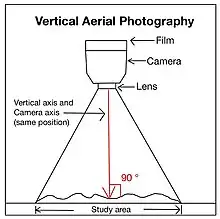

Vertical imagery and photographs

Vertical aerial photographs represent more than 95% of all captured aerial images.[2] The principles of capturing vertical photographs are shown in Figure 2.[3][4] Two major axes which originate from the camera lens are included.[3] One is the vertical axis, which is always at 90° to the study area.[3] The other is the camera axis, which changes with the angle of the camera.[3] To capture a vertical aerial photograph, these two axes must coincide.[3] Vertical photographs are captured with the camera directly above the object being photographed, without any tilting or deviation of the camera axis.[5] Areas in a vertical aerial photograph often have a consistent size.[4]

Oblique aerial photographs

Oblique aerial photographs are captured when the camera is set at a specific angle to the land.[5] They are a very helpful complement to the traditional vertical image.[2] The angled view allows the line of sight to pass through a relatively high proportion of the plant cover and tree leaves.[2] Oblique aerial photographs can be classified into two types.

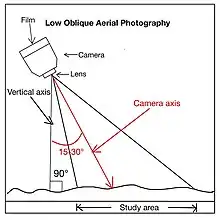

Low oblique

Low oblique aerial photographs are generated when the camera axis is tilted 15–30° from the vertical axis, as shown in figure 3.[3] The horizon, the dividing border between planet and atmosphere from a viewing angle,[6] is not visible in a low oblique aerial photograph.[5] The distance between two points cannot be calculated accurately because a low oblique image does not have a consistent scale. The orientation of objects is also inaccurate.[5] Low oblique photographs can be used as a reference before site investigation because they give updated details of local places.[3][4]

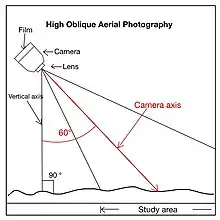

High oblique

High oblique aerial photographs are generated when the camera axis is tilted 60° from the vertical axis, as shown in figure 4.[3] In this case, the horizon is visible.[5] This type of photograph captures a fairly large region.[5] As in a low oblique photograph, the distance between two points and the orientation of objects are inaccurate.[5] High oblique aerial photographs are widely used to assist field investigation because the line of sight is closer to that of a human observer.[3] Features and structures can be easily recognized.[4] However, landscapes, buildings and hillslopes that are blocked by mountainous areas are not visible.[4]

Color and false color

Black and white

Black and white aerial photographs are frequently used for drawing maps, such as topographic maps.[5] Topographic maps are precise, in-depth descriptions of the terrain characteristics found in an area or region.[7] Black and white aerial photography is capable of producing good-quality images under poor weather conditions, such as fog and mist.[2]

Color

Color aerial photographs capture and preserve the colors of the original objects through the numerous layers inside the film.[8] Color photographs can be used to distinguish different kinds of soils, rocks, and deposits that are located above the rock layers, as well as some contaminated water sources.[5] Damage to trees caused by insects can also be identified using color aerial photos.[5] Color photographs can also assist in locating natural resources, such as trees, wild animals and oil.[4]

Color infrared

Color infrared aerial photographs are captured using false color film, which changes the original color of different features into "false color".[2][5] For example, grasslands and forests, which are green in nature, appear red.[2] Some artificial objects that are covered in green, however, may appear blue.[2] This is because plants reflect more infrared radiation (IR) than man-made objects.[2] Dense vegetation cover may give a more intense red color than sparse vegetation cover. This helps in determining whether the trees are healthy or not.[5] It also gives evidence of the growth rate of plants.[5] Color infrared photography is helpful when identifying the boundary between land and ocean or lakes because the ocean does not reflect IR.[2]
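The band substitution behind this effect can be sketched in a few lines of Python. The mapping used here (near-infrared displayed as red, red as green, green as blue) is the common color-infrared convention and is an assumption, since the text above does not spell it out. The function names and the brightness values are hypothetical and chosen only for illustration.

```python
from typing import Tuple


def to_color_infrared(green: int, red: int, near_infrared: int) -> Tuple[int, int, int]:
    """Map sensed bands to displayed (R, G, B) using the assumed CIR convention.

    Assumption (not from the text): near-infrared is shown as red,
    red is shown as green, and green is shown as blue.
    """
    return (near_infrared, red, green)


def dominant_display_color(rgb: Tuple[int, int, int]) -> str:
    """Name the strongest displayed channel."""
    names = ("red", "green", "blue")
    return names[rgb.index(max(rgb))]


# Hypothetical 0-255 band brightness values, chosen only for illustration.
healthy_grass = to_color_infrared(green=90, red=50, near_infrared=220)
green_painted_roof = to_color_infrared(green=120, red=60, near_infrared=40)

print(dominant_display_color(healthy_grass))       # red
print(dominant_display_color(green_painted_roof))  # blue
```

With these made-up values, the strongly IR-reflecting vegetation is displayed as red, while the green-painted object with low IR reflectance is displayed as blue, matching the behavior described above.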

Altitudes

High-altitude

High-altitude aerial photographs are taken when the plane is flying in the altitude range of 10,000 to 25,000 feet.[2] The advantage of high-altitude aerial photography is that it can record the information of a larger area in a single photograph.[5] However, high-altitude photographs cannot show as many details as low-altitude photographs, since some objects, such as buildings, roads, and infrastructure, appear very small in the image.[5]

Low-altitude

Low-altitude aerial photographs are taken when the plane is flying at an altitude of less than 10,000 feet.[2] The objects in the photograph appear larger and show more detail than those in high-altitude photographs.[5] Due to this advantage, a routine collection of low-altitude photographs has been conducted every six months since 1985.[2]

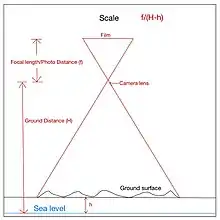

Scale

The scale of aerial photography or satellite imagery is the value calculated when the elevation difference between the photo film and the camera lens is divided by the elevation difference between the camera lens and the terrain surface.[4] It can also be measured by dividing the distance between two locations measured on the photo by the distance between those locations in reality.[4][3] In figure 5, several related terms and symbols are shown. Focal length (f) refers to the elevation difference between the film and the lens.[2][4] H is the elevation difference between the lens and the sea level, which is the average level of the water surface.[2][4] h is the elevation difference between the terrain surface and the sea level.[2][4] S is the scale of aerial photographs.[2][4] $S = f / (H - h)$ is the formula for scale measurement.[2][4] This measurement controls the amount and types of buildings observed in the photographs, the occurrence of some specific characteristics, and the certainty of the measurements.[3] For example, a large-scale photograph usually gives a more accurate measurement of distance compared with a small-scale photograph. 1:6000 to 1:10000 is the best range of scale for landslip research and geological mapping for ground assessment.[2]
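As a rough illustration, the two ways of obtaining the scale described above can be written as a short Python sketch. The function names and the numeric values are hypothetical and are not taken from the cited sources; all lengths are assumed to be in metres.

```python
def scale_from_heights(focal_length_m: float, lens_height_m: float, terrain_height_m: float) -> float:
    """Scale as a fraction: focal length f divided by (H - h)."""
    height_above_terrain = lens_height_m - terrain_height_m
    if height_above_terrain <= 0:
        raise ValueError("the lens must be above the terrain surface")
    return focal_length_m / height_above_terrain


def scale_from_distances(photo_distance_m: float, ground_distance_m: float) -> float:
    """Scale as a fraction: distance on the photo divided by distance in reality."""
    if ground_distance_m <= 0:
        raise ValueError("ground distance must be positive")
    return photo_distance_m / ground_distance_m


def as_ratio(scale: float) -> str:
    """Format a scale fraction as the familiar 1:N notation."""
    return f"1:{round(1 / scale)}"


# Hypothetical values: 152 mm focal length, lens 1720 m and terrain 200 m above sea level.
print(as_ratio(scale_from_heights(0.152, 1720.0, 200.0)))  # 1:10000

# Hypothetical values: 5 cm on the photo corresponds to 500 m on the ground.
print(as_ratio(scale_from_distances(0.05, 500.0)))  # 1:10000
```

Both routes give the same answer when the inputs describe the same photograph, which makes the second method a convenient check on the first.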

Large-scale imagery

Large-scale aerial photographs are those taken at a scale of 1:500 to 1:1000.[9] This type of photograph is best suited for local site investigations.[9] It looks like a zoomed-in map.

Small-scale imagery

Small-scale aerial photographs are those taken at a scale of 1:5000 to 1:20000.[9] They are more suitable for provincial or large-area research.[9]

Elements to interpret

Size

- The size of an object is one of the most distinguishing characteristics and one of the most important elements of interpretation. Most commonly, length, width and perimeter are measured. To do this successfully, it is necessary to know the scale of the photo. Measuring the size of an unknown object allows the interpreter to rule out possible alternatives. It has proved helpful to measure the size of a few well-known objects for comparison with the unknown object. For example, field dimensions of major sports like soccer, football, and baseball are standard throughout the world. If objects like these are visible in the image, it is possible to determine the size of the unknown object by simply comparing the two.
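Both approaches mentioned above, measuring with a known scale and comparing with a well-known object, reduce to simple proportions. The following Python sketch shows one way to express them; the function names and example measurements are hypothetical.

```python
import math


def ground_length_m(photo_length_mm: float, scale_denominator: float) -> float:
    """Ground length in metres for a length measured on a 1:scale_denominator photo."""
    return photo_length_mm / 1000.0 * scale_denominator


def length_from_reference_m(unknown_photo_mm: float,
                            reference_photo_mm: float,
                            reference_ground_m: float) -> float:
    """Ground length of an unknown object, scaled from a reference object of known size.

    Assumes both objects lie in the same photo at roughly the same scale.
    """
    return unknown_photo_mm / reference_photo_mm * reference_ground_m


# Hypothetical measurement: 12 mm on a 1:5000 photo.
print(f"{ground_length_m(12.0, 5000.0):.1f} m")

# Hypothetical comparison: a reference object known to be 100 m long measures 20 mm,
# and the unknown object measures 7 mm in the same photo.
print(f"{length_from_reference_m(7.0, 20.0, 100.0):.1f} m")
```

The reference-object method needs no knowledge of the photo scale, which is why recognizable objects of standard size are so useful to an interpreter.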

Shape

- There is an infinite number of uniquely shaped natural and man-made objects in the world. A few examples of shape are the triangular shape of modern jet aircraft and the shape of a common single-family dwelling. Humans have modified the landscape in very interesting ways that have given shape to many objects, but nature also shapes the landscape in its own ways. In general, straight, rectilinear features in the environment are of human origin. Nature produces more subtle shapes.

Shadow

- Virtually all remotely sensed data are collected within 2 hours of solar noon to avoid extended shadows in the image or photo. This is because shadows can obscure other objects that could otherwise be identified. On the other hand, the shadow cast by an object can act as a key to its identification, because the length of the shadow can be used to estimate the height of the object, which is vital for recognizing it. Take, for example, the Washington Monument in Washington, D.C. When it is viewed from above, the shape of the monument can be difficult to discern, but with a shadow cast, this becomes much easier. It is good practice to orient the photos so that the shadows fall towards the interpreter. A pseudoscopic illusion, in which low points appear high and high points appear low, can be produced if the shadow is oriented away from the observer.
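The height estimate from shadow length mentioned above can be illustrated with basic trigonometry. This is a minimal sketch that assumes a vertical object on flat, level ground and a known sun elevation angle at the time of exposure; these assumptions and the function name are not from the original text.

```python
import math


def height_from_shadow(shadow_length_m: float, sun_elevation_deg: float) -> float:
    """Estimate object height from its shadow length on level ground.

    Assumes a vertical object and flat terrain, so that
    height = shadow length * tan(sun elevation angle).
    """
    if not 0.0 < sun_elevation_deg < 90.0:
        raise ValueError("sun elevation must be between 0 and 90 degrees")
    return shadow_length_m * math.tan(math.radians(sun_elevation_deg))


# Hypothetical case: a 30 m shadow with the sun 45 degrees above the horizon
# implies an object roughly 30 m tall.
print(f"{height_from_shadow(30.0, 45.0):.1f} m")
```

The shadow length itself would first have to be converted from a photo measurement to a ground distance using the photo scale.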

Tone and color

- Real-world materials like vegetation, water and bare soil reflect different proportions of energy in the blue, green, red, and infrared portions of the electromagnetic spectrum. An interpreter can document the amount of energy reflected from each at specific wavelengths to create a spectral signature. These signatures can help to explain why certain objects appear as they do on black and white or color imagery. On black and white imagery, the shades of gray are referred to as tone. The darker an object appears, the less light it reflects. Color imagery is often preferred because humans can distinguish thousands of different colors, far more than they can shades of gray. Color aids in the process of photo interpretation.

Texture

- Texture is defined as the “characteristic placement and arrangement of repetitions of tone or color in an image.” Adjectives often used to describe texture are smooth (uniform, homogeneous), intermediate, and rough (coarse, heterogeneous). It is important to remember that texture is a product of scale. In a large-scale depiction, objects could appear to have an intermediate texture, but as the scale becomes smaller, the texture could appear more uniform, or smooth. A few examples of texture are the “smoothness” of a paved road and the “coarseness” of a pine forest.

Pattern

- Pattern is the spatial arrangement of objects in the landscape. The objects may be arranged randomly or systematically. They can be natural, as with a drainage pattern of a river, or man-made, as with the squares formed from the United States Public Land Survey System. Typical adjectives used in describing pattern are: random, systematic, circular, oval, linear, rectangular, and curvilinear to name a few.

Height and depth

- Height and depth, also known as “elevation” and “bathymetry”, are among the most diagnostic elements of image interpretation. This is because any object that rises above the local landscape, such as a building or an electric pole, will exhibit some sort of radial relief. Objects that exhibit this relief will also cast a shadow that can provide information about their height or elevation. A good example of this would be the buildings of any major city.

Other factors to consider during interpretation

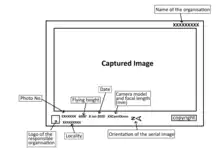

Photograph Metadata

An aerial photograph carries data and information about the covered area and the airplane's position and condition.[2] These details are measured and recorded by the data panel, which includes different devices and instruments for the specific measurements.[2] Figure 8 shows the general format of a vertical aerial photograph.

Location

- There are two primary methods to obtain a precise location in the form of coordinates: 1) survey in the field using traditional surveying techniques or global positioning system (GPS) instruments, or 2) collect remotely sensed data of the object, rectify the image, and then extract the desired coordinate information. Most scientists who choose option 1 now use relatively inexpensive GPS instruments in the field to obtain the desired location of an object. If option 2 is chosen, most aircraft used to collect the remotely sensed data have a GPS receiver.

Site background and association

- A site has unique physical characteristics, which might include elevation, slope, and type of surface cover (e.g., grass, forest, water, bare soil). A site can also have socioeconomic characteristics, such as the value of land or the closeness to water. Situation refers to how the objects in the photo or image are organized and “situated” with respect to each other. Most power plants, for example, have materials and buildings associated in a fairly predictable manner. Association refers to the fact that when a certain activity is found within a photo or image, related or “associated” features or activities are usually encountered as well. Site, situation, and association are rarely used independently of each other when analyzing an image. An example of this would be a large shopping mall: there are usually multiple large buildings and massive parking lots, and it is usually located near a major road or intersection.

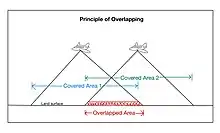

Aerial photographs overlap

Overlapping of aerial photos means that around 60% of the covered area of every aerial image overlays that of the one before it.[2] Every object along the flying path can be observed twice at a minimum.[2] The purpose of overlapping the aerial photography is to generate the 3D topography or relief when using a stereoscope for interpretation.[2] The stereoscope is an instrument used to see the 3D overlapping aerial images.[2]

The requirements for a pair of overlapping images are simple. The principal points (the geometric central points of the images) of the two photos must be in different locations on the terrain.[2] Another restriction is that the scale of the images must be the same.[2] The flying routes of the planes and the time of day are not restricted.[2]
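To give a feel for what a 60% overlap means along a flight line, the following Python sketch estimates the ground coverage of one photo and the spacing between consecutive exposures. The relations used (coverage equals photo side times scale denominator, spacing equals coverage times one minus the overlap) are standard flight-planning arithmetic rather than something stated in the cited source, and the photo format and scale are hypothetical.

```python
import math


def ground_coverage_m(photo_side_m: float, scale_denominator: float) -> float:
    """Ground distance covered by one side of a photo at scale 1:scale_denominator."""
    return photo_side_m * scale_denominator


def exposure_spacing_m(coverage_m: float, forward_overlap: float = 0.60) -> float:
    """Distance flown between consecutive exposures for a given forward overlap."""
    if not 0.0 <= forward_overlap < 1.0:
        raise ValueError("forward overlap must be in the range [0, 1)")
    return coverage_m * (1.0 - forward_overlap)


# Hypothetical example: a 23 cm photo at a scale of 1:10000.
coverage = ground_coverage_m(0.23, 10000.0)
spacing = exposure_spacing_m(coverage, forward_overlap=0.60)
print(f"coverage per photo: {coverage:.0f} m, spacing between exposures: {spacing:.0f} m")
```

Because the spacing is less than half of the coverage, each ground point along the flight path falls within at least two consecutive photos, which is what stereoscopic viewing requires.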

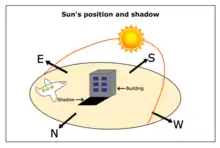

Aerial photo-geometry orientation

The preferred orientation of an aerial photograph is closely related to the position of the Sun and the shadow element.[2] In the Northern Hemisphere, the Sun is generally positioned to the south.[2] Therefore, shadows are usually formed on the north side.[2] This then affects the shadow element in aerial photographs. For example, if the plane is flying from North to South, the sunlight is also coming North and the shadows that show the shapes of objects can be clearly observed in aerial photographs.[2] If the plane is flying from South to North, shadows will not be clearly observed.[2] For photo interpretation, it is preferred that the image is taken so that the shadows can be clearly observed,[2] as shadows can highlight the relief of the topography.[2] This orientation of the image also helps geologists link the 3D pictures to what they observe.[2]

Displacement and distortion

Distortion and displacement are two common phenomena observed on an aerial photograph.

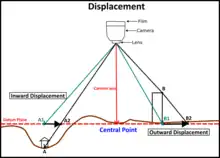

Displacement

Displacement is a result of varying topographic relief within a covered area.[4] A datum plane, which refers to the average sea level, is essential in the displacement phenomenon.[4] For a location which has an elevation higher than that of the datum plane, the imaged position will move away from the central point of the image.[4] For a location which has an elevation lower than that of the datum plane, the imaged position will move closer to the central point of the image.[4]
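The direction and rough size of this shift can be illustrated with the standard relief displacement relation for a vertical photograph, d = r · h / H, where r is the radial distance of the image point from the central point, h is the elevation of the location relative to the datum plane, and H is the flying height above the datum. This relation is a common photogrammetric approximation and is not given in the cited source; the function name and values below are hypothetical.

```python
import math


def relief_displacement_mm(radial_distance_mm: float,
                           elevation_above_datum_m: float,
                           flying_height_above_datum_m: float) -> float:
    """Relief displacement on a vertical photo, d = r * h / H.

    A positive result means a shift away from the central point (location above
    the datum); a negative result means a shift towards it (location below).
    """
    if flying_height_above_datum_m <= 0:
        raise ValueError("flying height above the datum must be positive")
    return radial_distance_mm * elevation_above_datum_m / flying_height_above_datum_m


# Hypothetical point imaged 80 mm from the central point, flying height 3000 m.
print(relief_displacement_mm(80.0, 150.0, 3000.0))   # 4.0  (outward)
print(relief_displacement_mm(80.0, -150.0, 3000.0))  # -4.0 (inward)
```

The relation also shows why displacement grows towards the edges of a photo (larger r) and shrinks with greater flying height (larger H).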

Distortion

Distortion refers to any change in an object's or region's location on an aerial photo that modifies its original features and shapes.[2] It usually appears near the picture's border.[2] There are two causes of distortion. The first is the tilt and tip of the plane.[4] When the aircraft is rising or descending, it produces a tip.[4] When the aircraft leans in a particular direction during the aerial survey, it produces a tilt.[4] The tilt axis is perpendicular to the tip axis.[4] The second cause is the processing of photographs,[4] the process used to turn the exposed photo film into an aerial photograph.[10] When the moistened film dries, it expands in one direction and contracts in the other.[4] Only a small amount of distortion is caused by this.[4]

Pre-digital interpretation methods using a mirror stereoscope

- Choose two aerial photos that were taken one after the other and make sure they overlap by at least 60%.[2]

- Place the photos in the same orientation, with the northern part of each aerial photograph and the shadows pointing towards the interpreter.[2]

- Make sure the distance between the pair of eyepieces (the lenses used in the stereoscope for observation) matches the distance between the eyes.[2]

- Put both forefingers on the same, easily identified object in each of the photos.[2]

- Slowly drag the photos until the two objects are overlapped under the stereoscope.[2]

- The overlapped areas then appear 3D under the stereoscope.[2]

Figure 12: An example of a mirror stereoscope.

References

- American Society of Photogrammetry; Colwell, R.N. (1960). Manual of Photographic Interpretation. American Society of Photogrammetry. Retrieved 2022-01-23.

- Ho, H (2004). "Application of aerial photograph interpretation in geotechnical practice in Hong Kong (MSc thesis)". University of Hong Kong, Pokfulam, Hong Kong SAR. doi:10.5353/th_b4257758.

- National Council of Educational Research and Training. (2006). Introduction To Aerial Photographs. In Practical Work In Geography (pp. 69–83). Publication Division by the Secretary. https://www.philoid.com/epub/ncert/11/214/

- Crisco, W. (1988). Interpretation of Aerial Photographs. U.S. Department of the Interior, Bureau of Land Management.

- Imam, E. (2018). Aerial Photography and Photogrammetary.

- Salamone, M. A. (2017). Equality and Justice in Early Greek Cosmologies: The Paradigm of the "Line of the Horizon".

- Australia Government. (2014). What is a topographic map? Geoscience Australia.

- National Film and Sound Archive of Australia. (2018). COLOUR FILM. https://www.nfsa.gov.au/preservation/preservation-glossary/colour-film

- Geotechnical Engineering Office, Civil Engineering and Development Department. (1987). Guide to Site Investigation (Geoguide 2) (pp. 1–352) https://www.cedd.gov.hk/filemanager/eng/content_108/eg2_20171218.pdf

- Karlheinz Keller et al. "Photography" in Ullmann's Encyclopedia of Industrial Chemistry, 2005, Wiley-VCH, Weinheim. doi:10.1002/14356007.a20_001

Further reading

- Jensen, John R. (2000). Remote Sensing of the Environment. Prentice Hall. ISBN 978-0-13-489733-2.

- Olson, C. E. (1960). "Elements of photographic interpretation common to several sensors". Photogrammetric Engineering. 26 (4): 651–656.

- Philipson, Warren R. (1997). Manual of Photographic Interpretation (2nd ed.). American Society for Photogrammetry and Remote Sensing. ISBN 978-1-57083-039-6.