Artificial neural network

Artificial neural networks (ANNs, also shortened to neural networks (NNs) or neural nets) are a branch of machine learning models that are built using principles of neuronal organization discovered by connectionism in the biological neural networks constituting animal brains.[1][2]

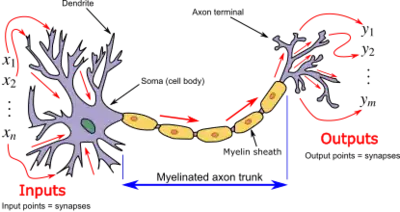

An ANN is based on a collection of connected units or nodes called artificial neurons, which loosely model the neurons in a biological brain. Each connection, like the synapses in a biological brain, can transmit a signal to other neurons. An artificial neuron receives signals then processes them and can signal neurons connected to it. The "signal" at a connection is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs. The connections are called edges. Neurons and edges typically have a weight that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Neurons may have a threshold such that a signal is sent only if the aggregate signal crosses that threshold.

Typically, neurons are aggregated into layers. Different layers may perform different transformations on their inputs. Signals travel from the first layer (the input layer), to the last layer (the output layer), possibly after traversing the layers multiple times.

Training

Neural networks learn (or are trained) by processing examples, each of which contains a known "input" and "result", forming probability-weighted associations between the two, which are stored within the data structure of the net itself. The training of a neural network from a given example is usually conducted by determining the difference between the processed output of the network (often a prediction) and a target output. This difference is the error. The network then adjusts its weighted associations according to a learning rule and using this error value. Successive adjustments will cause the neural network to produce output that is increasingly similar to the target output. After a sufficient number of these adjustments, the training can be terminated based on certain criteria. This is a form of supervised learning.

Such systems "learn" to perform tasks by considering examples, generally without being programmed with task-specific rules. For example, in image recognition, they might learn to identify images that contain cats by analyzing example images that have been manually labeled as "cat" or "no cat" and using the results to identify cats in other images. They do this without any prior knowledge of cats, for example, that they have fur, tails, whiskers, and cat-like faces. Instead, they automatically generate identifying characteristics from the examples that they process.

In reality, textures and outlines would not be represented by single nodes, but rather by associated weight patterns of multiple nodes.

History

The simplest kind of feedforward neural network (FNN) is a linear network, which consists of a single layer of output nodes; the inputs are fed directly to the outputs via a series of weights. The sum of the products of the weights and the inputs is calculated in each node. The mean squared errors between these calculated outputs and the given target values are minimized by creating an adjustment to the weights. This technique has been known for over two centuries as the method of least squares or linear regression. It was used as a means of finding a good rough linear fit to a set of points by Legendre (1805) and Gauss (1795) for the prediction of planetary movement.[4][5][6][7][8]

Wilhelm Lenz and Ernst Ising created and analyzed the Ising model (1925)[9] which is essentially a non-learning artificial recurrent neural network (RNN) consisting of neuron-like threshold elements.[7] In 1972, Shun'ichi Amari made this architecture adaptive.[10][7] His learning RNN was popularised by John Hopfield in 1982.[11]

Warren McCulloch and Walter Pitts[12] (1943) also considered a non-learning computational model for neural networks.[13] In the late 1940s, D. O. Hebb[14] created a learning hypothesis based on the mechanism of neural plasticity that became known as Hebbian learning. Farley and Wesley A. Clark[15] (1954) first used computational machines, then called "calculators", to simulate a Hebbian network. In 1958, psychologist Frank Rosenblatt invented the perceptron, the first implemented artificial neural network,[16][17][18][19] funded by the United States Office of Naval Research.[20]

Some say that research stagnated following Minsky and Papert (1969),[21] who discovered that basic perceptrons were incapable of processing the exclusive-or circuit and that computers lacked sufficient power to process useful neural networks. However, by the time this book came out, methods for training multilayer perceptrons (MLPs) were already known.

The first deep learning MLP was published by Alexey Grigorevich Ivakhnenko and Valentin Lapa in 1965, as the Group Method of Data Handling.[22][23][24] The first deep learning MLP trained by stochastic gradient descent[25] was published in 1967 by Shun'ichi Amari.[26][7] In computer experiments conducted by Amari's student Saito, a five-layer MLP with two modifiable layers learned useful internal representations to classify non-linearly separable pattern classes.[7]

Self-organizing maps (SOMs) were described by Teuvo Kohonen in 1982.[27][28] SOMs are neurophysiologically inspired[29] neural networks that learn low-dimensional representations of high-dimensional data while preserving the topological structure of the data. They are trained using competitive learning.[27]

The convolutional neural network (CNN) architecture with convolutional layers and downsampling layers was introduced by Kunihiko Fukushima in 1980.[30] He called it the neocognitron. In 1969, he also introduced the ReLU (rectified linear unit) activation function.[31][7] The rectifier has become the most popular activation function for CNNs and deep neural networks in general.[32] CNNs have become an essential tool for computer vision.

The backpropagation algorithm is an efficient application of the Leibniz chain rule (1673)[33] to networks of differentiable nodes.[7] It is also known as the reverse mode of automatic differentiation or reverse accumulation, due to Seppo Linnainmaa (1970).[34][35][36][37][7] The term "back-propagating errors" was introduced in 1962 by Frank Rosenblatt,[38][7] but he did not have an implementation of this procedure, although Henry J. Kelley[39] and Bryson[40] had dynamic programming based continuous precursors of backpropagation[22][41][42][43] already in 1960–61 in the context of control theory.[7] In 1973, Dreyfus used backpropagation to adapt parameters of controllers in proportion to error gradients.[44] In 1982, Paul Werbos applied backpropagation to MLPs in the way that has become standard.[45][41] In 1986 Rumelhart, Hinton and Williams showed that backpropagation learned interesting internal representations of words as feature vectors when trained to predict the next word in a sequence.[46]

The time delay neural network (TDNN) of Alex Waibel (1987) combined convolutions and weight sharing and backpropagation.[47][48] In 1988, Wei Zhang et al. applied backpropagation to a CNN (a simplified Neocognitron with convolutional interconnections between the image feature layers and the last fully connected layer) for alphabet recognition.[49][50] In 1989, Yann LeCun et al. trained a CNN to recognize handwritten ZIP codes on mail.[51] In 1992, max-pooling for CNNs was introduced by Juan Weng et al. to help with least-shift invariance and tolerance to deformation to aid 3D object recognition.[52][53][54] LeNet-5 (1998), a 7-level CNN by Yann LeCun et al.,[55] that classifies digits, was applied by several banks to recognize hand-written numbers on checks digitized in 32x32 pixel images.

From 1988 onward,[56][57] the use of neural networks transformed the field of protein structure prediction, in particular when the first cascading networks were trained on profiles (matrices) produced by multiple sequence alignments.[58]

In the 1980s, backpropagation did not work well for deep FNNs and RNNs. To overcome this problem, Juergen Schmidhuber (1992) proposed a hierarchy of RNNs pre-trained one level at a time by self-supervised learning.[59] It uses predictive coding to learn internal representations at multiple self-organizing time scales. This can substantially facilitate downstream deep learning. The RNN hierarchy can be collapsed into a single RNN, by distilling a higher level chunker network into a lower level automatizer network.[59][7] In 1993, a chunker solved a deep learning task whose depth exceeded 1000.[60]

In 1992, Juergen Schmidhuber also published an alternative to RNNs[61] which is now called a linear Transformer or a Transformer with linearized self-attention[62][63][7] (save for a normalization operator). It learns internal spotlights of attention:[64] a slow feedforward neural network learns by gradient descent to control the fast weights of another neural network through outer products of self-generated activation patterns FROM and TO (which are now called key and value for self-attention).[62] This fast weight attention mapping is applied to a query pattern.

The modern Transformer was introduced by Ashish Vaswani et al. in their 2017 paper "Attention Is All You Need."[65] It combines this with a softmax operator and a projection matrix.[7] Transformers have increasingly become the model of choice for natural language processing.[66] Many modern large language models such as ChatGPT, GPT-4, and BERT use it. Transformers are also increasingly being used in computer vision.[67]

In 1991, Juergen Schmidhuber also published adversarial neural networks that contest with each other in the form of a zero-sum game, where one network's gain is the other network's loss.[68][69][70] The first network is a generative model that models a probability distribution over output patterns. The second network learns by gradient descent to predict the reactions of the environment to these patterns. This was called "artificial curiosity."

In 2014, this principle was used in a generative adversarial network (GAN) by Ian Goodfellow et al.[71] Here the environmental reaction is 1 or 0 depending on whether the first network's output is in a given set. This can be used to create realistic deepfakes.[72] Excellent image quality is achieved by Nvidia's StyleGAN (2018)[73] based on the Progressive GAN by Tero Karras, Timo Aila, Samuli Laine, and Jaakko Lehtinen.[74] Here the GAN generator is grown from small to large scale in a pyramidal fashion.

Sepp Hochreiter's diploma thesis (1991)[75] was called "one of the most important documents in the history of machine learning" by his supervisor Juergen Schmidhuber.[7] Hochreiter identified and analyzed the vanishing gradient problem[75][76] and proposed recurrent residual connections to solve it. This led to the deep learning method called long short-term memory (LSTM), published in Neural Computation (1997).[77] LSTM recurrent neural networks can learn "very deep learning" tasks[78] with long credit assignment paths that require memories of events that happened thousands of discrete time steps before. The "vanilla LSTM" with forget gate was introduced in 1999 by Felix Gers, Schmidhuber and Fred Cummins.[79] LSTM has become the most cited neural network of the 20th century.[7] In 2015, Rupesh Kumar Srivastava, Klaus Greff, and Schmidhuber used the LSTM principle to create the Highway network, a feedforward neural network with hundreds of layers, much deeper than previous networks.[80][81] Seven months later, Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun won the ImageNet 2015 competition with an open-gated or gateless Highway network variant called the residual neural network.[82] This has become the most cited neural network of the 21st century.[7]

The development of metal–oxide–semiconductor (MOS) very-large-scale integration (VLSI), in the form of complementary MOS (CMOS) technology, enabled increasing MOS transistor counts in digital electronics. This provided more processing power for the development of practical artificial neural networks in the 1980s.[83]

Neural networks' early successes included stock market prediction and, in 1995, a (mostly) self-driving car.[84]

Geoffrey Hinton et al. (2006) proposed learning a high-level representation using successive layers of binary or real-valued latent variables with a restricted Boltzmann machine[85] to model each layer. In 2012, Ng and Dean created a network that learned to recognize higher-level concepts, such as cats, only from watching unlabeled images.[86] Unsupervised pre-training and increased computing power from GPUs and distributed computing allowed the use of larger networks, particularly in image and visual recognition problems, which became known as "deep learning".[87]

Ciresan and colleagues (2010)[88] showed that despite the vanishing gradient problem, GPUs make backpropagation feasible for many-layered feedforward neural networks.[89] Between 2009 and 2012, ANNs began winning prizes in image recognition contests, approaching human level performance on various tasks, initially in pattern recognition and handwriting recognition.[90][91] For example, the bi-directional and multi-dimensional long short-term memory (LSTM)[92][93] of Graves et al. won three competitions in connected handwriting recognition in 2009 without any prior knowledge about the three languages to be learned.[92][93]

Ciresan and colleagues built the first pattern recognizers to achieve human-competitive/superhuman performance[94] on benchmarks such as traffic sign recognition (IJCNN 2012).

Models

ANNs began as an attempt to exploit the architecture of the human brain to perform tasks that conventional algorithms had little success with. They soon reoriented towards improving empirical results, abandoning attempts to remain true to their biological precursors. ANNs have the ability to learn and model non-linearities and complex relationships. This is achieved by neurons being connected in various patterns, allowing the output of some neurons to become the input of others. The network forms a directed, weighted graph.[95]

An artificial neural network consists of simulated neurons. Each neuron is connected to other nodes via links, like a biological axon-synapse-dendrite connection. All the nodes connected by links take in some data and use it to perform specific operations and tasks on the data. Each link has a weight that determines the strength of one node's influence on another,[96] so the weights control how much signal passes between neurons.

Artificial neurons

ANNs are composed of artificial neurons which are conceptually derived from biological neurons. Each artificial neuron has inputs and produces a single output which can be sent to multiple other neurons.[97] The inputs can be the feature values of a sample of external data, such as images or documents, or they can be the outputs of other neurons. The outputs of the final output neurons of the neural net accomplish the task, such as recognizing an object in an image.

To find the output of a neuron, we take the sum of all its inputs, each weighted by the weight of the connection from that input to the neuron, and add a bias term.[98] This weighted sum is sometimes called the activation. It is then passed through a (usually nonlinear) activation function to produce the output. The initial inputs are external data, such as images and documents. The ultimate outputs accomplish the task, such as recognizing an object in an image.[99]
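The computation of a single neuron's output can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function names `sigmoid` and `neuron_output`, the choice of the logistic function as activation, and the numeric values are all assumptions made for the example.

```python
import math


def sigmoid(z):
    """Logistic activation: maps any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias, activation=sigmoid):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Weighted sum of inputs plus bias
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Hypothetical values chosen only for illustration:
# z = 0.5 * 1.0 + (-0.25) * 2.0 + 0.0 = 0.0, and sigmoid(0.0) = 0.5
out = neuron_output(inputs=[1.0, 2.0], weights=[0.5, -0.25], bias=0.0)
```

Swapping `activation` for another function (for example a rectifier) changes only the last step; the weighted sum stays the same.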

Organization

The neurons are typically organized into multiple layers, especially in deep learning. Neurons of one layer connect only to neurons of the immediately preceding and immediately following layers. The layer that receives external data is the input layer. The layer that produces the ultimate result is the output layer. In between them are zero or more hidden layers. Single layer and unlayered networks are also used. Between two layers, multiple connection patterns are possible. They can be 'fully connected', with every neuron in one layer connecting to every neuron in the next layer. They can be pooling, where a group of neurons in one layer connects to a single neuron in the next layer, thereby reducing the number of neurons in that layer.[100] Neurons with only such connections form a directed acyclic graph and are known as feedforward networks.[101] Alternatively, networks that allow connections between neurons in the same or previous layers are known as recurrent networks.[102]
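The two connection patterns described above can be illustrated with a small sketch. The function names are hypothetical, and taking the maximum of each group is only one possible pooling rule (averaging is another); the text does not prescribe either.

```python
def fully_connected(inputs, weights, biases):
    """Every input feeds every output neuron.

    weights[j][i] is the weight of the link from input i to output neuron j.
    """
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def max_pool(values, group_size):
    """Each group of neurons connects to a single neuron in the next layer.

    Assumption for this sketch: the pooled neuron outputs the group's maximum.
    """
    return [
        max(values[i:i + group_size])
        for i in range(0, len(values), group_size)
    ]


# Two inputs feed four hidden neurons, which are then pooled in pairs.
hidden = fully_connected(
    [1.0, 2.0],
    weights=[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 1.0]],
    biases=[0.0, 0.0, 0.0, 0.0],
)
pooled = max_pool(hidden, group_size=2)  # four neurons reduced to two
```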

Hyperparameter

A hyperparameter is a constant parameter whose value is set before the learning process begins. The values of parameters are derived via learning. Examples of hyperparameters include learning rate, the number of hidden layers and batch size.[103] The values of some hyperparameters can be dependent on those of other hyperparameters. For example, the size of some layers can depend on the overall number of layers.

Learning

Learning is the adaptation of the network to better handle a task by considering sample observations. Learning involves adjusting the weights (and optional thresholds) of the network to improve the accuracy of the result. This is done by minimizing the observed errors. Learning is complete when examining additional observations does not usefully reduce the error rate. Even after learning, the error rate typically does not reach 0. If after learning, the error rate is too high, the network typically must be redesigned. Practically this is done by defining a cost function that is evaluated periodically during learning. As long as its output continues to decline, learning continues. The cost is frequently defined as a statistic whose value can only be approximated. The outputs are actually numbers, so when the error is low, the difference between the output (almost certainly a cat) and the correct answer (cat) is small. Learning attempts to reduce the total of the differences across the observations. Most learning models can be viewed as a straightforward application of optimization theory and statistical estimation.[95][104]

Learning rate

The learning rate defines the size of the corrective steps that the model takes to adjust for errors in each observation.[105] A high learning rate shortens the training time, but with lower ultimate accuracy, while a lower learning rate takes longer, but with the potential for greater accuracy. Optimizations such as Quickprop are primarily aimed at speeding up error minimization, while other improvements mainly try to increase reliability. In order to avoid oscillation inside the network such as alternating connection weights, and to improve the rate of convergence, refinements use an adaptive learning rate that increases or decreases as appropriate.[106] The concept of momentum allows the balance between the gradient and the previous change to be weighted such that the weight adjustment depends to some degree on the previous change. A momentum close to 0 emphasizes the gradient, while a value close to 1 emphasizes the last change.
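As a rough sketch of how the learning rate and momentum interact, the update below blends the plain gradient step with the previous weight change. The blending formula is an assumption chosen to match the description above (momentum near 0 emphasizes the gradient, near 1 the last change); many libraries use a slightly different form. The toy cost function and all names are hypothetical.

```python
def momentum_step(weight, gradient, prev_change, learning_rate, momentum):
    """Return (new_weight, change) for one update.

    momentum = 0 uses only the gradient step; a momentum close to 1
    mostly repeats the previous change.
    """
    gradient_step = -learning_rate * gradient
    change = (1.0 - momentum) * gradient_step + momentum * prev_change
    return weight + change, change


def minimize(grad_fn, w0, learning_rate, momentum, n_steps):
    """Apply momentum_step repeatedly, starting from w0 with no prior change."""
    w, change = w0, 0.0
    for _ in range(n_steps):
        w, change = momentum_step(w, grad_fn(w), change, learning_rate, momentum)
    return w


# Toy cost C(w) = (w - 3)^2 with gradient 2 * (w - 3); its minimum is at w = 3.
w_final = minimize(lambda w: 2.0 * (w - 3.0), w0=0.0,
                   learning_rate=0.1, momentum=0.5, n_steps=200)
```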

Cost function

While it is possible to define a cost function ad hoc, frequently the choice is determined by the function's desirable properties (such as convexity) or because it arises from the model (e.g. in a probabilistic model the model's posterior probability can be used as an inverse cost).

Backpropagation

Backpropagation is a method used to adjust the connection weights to compensate for each error found during learning. The error amount is effectively divided among the connections. Technically, backprop calculates the gradient (the derivative) of the cost function associated with a given state with respect to the weights. The weight updates can be done via stochastic gradient descent or other methods, such as extreme learning machines,[107] "no-prop" networks,[108] training without backtracking,[109] "weightless" networks,[110][111] and non-connectionist neural networks.
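To make the gradient computation concrete, here is a minimal sketch for a hypothetical network with one sigmoid hidden neuron and one linear output, y = w2 * sigmoid(w1 * x), and a squared-error cost. The network shape, the cost and all names are assumptions for illustration. The backward pass applies the chain rule step by step, and a finite-difference estimate is included as a sanity check.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cost(w1, w2, x, target):
    """Squared-error cost of the tiny network for one example."""
    y = w2 * sigmoid(w1 * x)
    return 0.5 * (y - target) ** 2


def backprop(w1, w2, x, target):
    """Gradient of the cost with respect to (w1, w2) via the chain rule."""
    # Forward pass, keeping intermediate values
    a = sigmoid(w1 * x)
    y = w2 * a
    # Backward pass: propagate the error from the output toward the input
    dy = y - target            # dC/dy
    dw2 = dy * a               # dC/dw2
    da = dy * w2               # dC/da
    dz = da * a * (1.0 - a)    # dC/dz, using sigmoid'(z) = a * (1 - a)
    dw1 = dz * x               # dC/dw1
    return dw1, dw2


def numeric_gradient(w1, w2, x, target, eps=1e-6):
    """Central finite differences, used only to check backprop."""
    dw1 = (cost(w1 + eps, w2, x, target) - cost(w1 - eps, w2, x, target)) / (2 * eps)
    dw2 = (cost(w1, w2 + eps, x, target) - cost(w1, w2 - eps, x, target)) / (2 * eps)
    return dw1, dw2


analytic = backprop(0.3, -0.7, 1.5, 0.2)
numeric = numeric_gradient(0.3, -0.7, 1.5, 0.2)
```

The two results should agree closely; a large gap usually points to a mistake in the backward pass.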

Learning paradigms

Machine learning is commonly separated into three main learning paradigms, supervised learning,[112] unsupervised learning[113] and reinforcement learning.[114] Each corresponds to a particular learning task.

Supervised learning

Supervised learning uses a set of paired inputs and desired outputs. The learning task is to produce the desired output for each input. In this case, the cost function is related to eliminating incorrect deductions.[115] A commonly used cost is the mean-squared error, which tries to minimize the average squared error between the network's output and the desired output. Tasks suited for supervised learning are pattern recognition (also known as classification) and regression (also known as function approximation). Supervised learning is also applicable to sequential data (e.g., for handwriting, speech and gesture recognition). This can be thought of as learning with a "teacher", in the form of a function that provides continuous feedback on the quality of solutions obtained thus far.
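The mean-squared error mentioned above is simple to compute. The sketch below uses a hypothetical function name and made-up outputs and targets.

```python
def mean_squared_error(outputs, targets):
    """Average squared difference between network outputs and desired outputs."""
    if not outputs or len(outputs) != len(targets):
        raise ValueError("outputs and targets must be non-empty and equal in length")
    return sum((o - t) ** 2 for o, t in zip(outputs, targets)) / len(outputs)


# Squared errors are 0.01, 0.04 and 0.16, so the mean is 0.07.
mse = mean_squared_error([0.9, 0.2, 0.4], [1.0, 0.0, 0.0])
```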

Unsupervised learning

In unsupervised learning, input data x is given along with the cost function, some function of the data x and the network's output f(x). The cost function is dependent on the task (the model domain) and any a priori assumptions (the implicit properties of the model, its parameters and the observed variables). As a trivial example, consider the model f(x) = a, where a is a constant, and the cost C = E[(x - f(x))^2]. Minimizing this cost produces a value of a that is equal to the mean of the data. The cost function can be much more complicated. Its form depends on the application: for example, in compression it could be related to the mutual information between x and f(x), whereas in statistical modeling, it could be related to the posterior probability of the model given the data (note that in both of those examples, those quantities would be maximized rather than minimized). Tasks that fall within the paradigm of unsupervised learning are in general estimation problems; the applications include clustering, the estimation of statistical distributions, compression and filtering.
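The trivial example can be checked numerically. The sketch below assumes the cost is the mean squared difference between each data point and a constant a, and minimizes it by plain gradient descent; the data, step size and names are hypothetical.

```python
def cost(a, data):
    """Mean squared difference between the data and the constant model a."""
    return sum((x - a) ** 2 for x in data) / len(data)


data = [2.0, 4.0, 9.0]
mean = sum(data) / len(data)

# Gradient descent on a: dC/da = -2 * mean(x - a)
a = 0.0
for _ in range(500):
    grad = -2.0 * sum(x - a for x in data) / len(data)
    a -= 0.1 * grad
```

After the loop, `a` sits at the mean of the data, and moving away from it in either direction increases the cost.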

Reinforcement learning

In applications such as playing video games, an actor takes a string of actions, receiving a generally unpredictable response from the environment after each one. The goal is to win the game, i.e., generate the most positive (lowest cost) responses. In reinforcement learning, the aim is to weight the network (devise a policy) to perform actions that minimize long-term (expected cumulative) cost. At each point in time the agent performs an action and the environment generates an observation and an instantaneous cost, according to some (usually unknown) rules. The rules and the long-term cost usually only can be estimated. At any juncture, the agent decides whether to explore new actions to uncover their costs or to exploit prior learning to proceed more quickly.
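The explore-or-exploit decision can be illustrated with an epsilon-greedy rule, a common technique that the text above does not name and that is used here purely as an example. The sketch also simplifies the environment to a single state with noisy costs per action; the cost values, noise level and function name are all assumptions.

```python
import random


def epsilon_greedy_costs(true_costs, epsilon, n_steps, rng):
    """Estimate each action's mean cost while mostly choosing the cheapest.

    true_costs are hidden from the agent; it only sees noisy samples.
    """
    n_actions = len(true_costs)
    estimates = [0.0] * n_actions
    counts = [0] * n_actions
    for _ in range(n_steps):
        if rng.random() < epsilon:
            # Explore: try a random action to learn its cost
            action = rng.randrange(n_actions)
        else:
            # Exploit: pick the action with the lowest estimated cost
            action = min(range(n_actions), key=lambda a: estimates[a])
        # The environment returns a noisy instantaneous cost
        observed = true_costs[action] + rng.gauss(0.0, 0.1)
        counts[action] += 1
        # Running average of the observed costs for this action
        estimates[action] += (observed - estimates[action]) / counts[action]
    return estimates, counts


estimates, counts = epsilon_greedy_costs(
    [1.0, 0.2, 0.6], epsilon=0.1, n_steps=2000, rng=random.Random(0)
)
best_action = min(range(3), key=lambda a: estimates[a])
```

A larger `epsilon` spends more steps exploring; a smaller one commits sooner to whatever currently looks cheapest.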

Formally the environment is modeled as a Markov decision process (MDP) with states s_1, ..., s_n in S and actions a_1, ..., a_m in A. Because the state transitions are not known, probability distributions are used instead: the instantaneous cost distribution P(c_t | s_t), the observation distribution P(x_t | s_t) and the transition distribution P(s_{t+1} | s_t, a_t), while a policy is defined as the conditional distribution over actions given the observations. Taken together, the two define a Markov chain (MC). The aim is to discover the lowest-cost MC.

ANNs serve as the learning component in such applications.[116][117] Dynamic programming coupled with ANNs (giving neurodynamic programming)[118] has been applied to problems such as those involved in vehicle routing,[119] video games, natural resource management[120][121] and medicine[122] because of ANNs' ability to mitigate losses of accuracy even when reducing the discretization grid density for numerically approximating the solution of control problems. Tasks that fall within the paradigm of reinforcement learning are control problems, games and other sequential decision making tasks.

Self-learning

Self-learning in neural networks was introduced in 1982 along with a neural network capable of self-learning named crossbar adaptive array (CAA).[123] It is a system with only one input, situation s, and only one output, action (or behavior) a. It has neither external advice input nor external reinforcement input from the environment. The CAA computes, in a crossbar fashion, both decisions about actions and emotions (feelings) about encountered situations. The system is driven by the interaction between cognition and emotion.[124] Given the memory matrix, W =||w(a,s)||, the crossbar self-learning algorithm in each iteration performs the following computation:

In situation s perform action a; Receive consequence situation s'; Compute emotion of being in consequence situation v(s'); Update crossbar memory w'(a,s) = w(a,s) + v(s').

The backpropagated value (secondary reinforcement) is the emotion toward the consequence situation. The CAA exists in two environments: the behavioral environment, where it behaves, and the genetic environment, from which it receives, initially and only once, its initial emotions about the situations it will encounter in the behavioral environment. Having received the genome vector (species vector) from the genetic environment, the CAA learns a goal-seeking behavior in a behavioral environment that contains both desirable and undesirable situations.[125]
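The four steps of the crossbar iteration can be sketched as follows. This is a simplified illustration under several assumptions that are not taken from the source: the action is chosen as the one with the largest memory value for the current situation, the emotion v(s') is read from a fixed per-situation vector standing in for the genome, and the environment is a small hypothetical transition table.

```python
def caa_step(W, s, transition, emotion):
    """One crossbar self-learning iteration; W[a][s] is the memory w(a, s)."""
    n_actions = len(W)
    # 1. In situation s perform action a (assumption: greedy on the memory column)
    a = max(range(n_actions), key=lambda act: W[act][s])
    # 2. Receive consequence situation s'
    s_next = transition[(s, a)]
    # 3. Compute emotion of being in the consequence situation, v(s')
    v = emotion[s_next]
    # 4. Update crossbar memory: w'(a, s) = w(a, s) + v(s')
    W[a][s] += v
    return a, s_next


# Hypothetical world: situation 1 is undesirable, situation 2 is desirable.
emotion = [0.0, -1.0, 1.0]
transition = {
    (0, 0): 1, (0, 1): 2,   # from situation 0, action 0 is bad, action 1 is good
    (1, 0): 0, (1, 1): 0,   # situations 1 and 2 both lead back to 0
    (2, 0): 0, (2, 1): 0,
}
W = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]  # 2 actions x 3 situations

s = 0
for _ in range(6):
    _, s = caa_step(W, s, transition, emotion)
```

In this toy run the system tries the bad action once in situation 0, records a negative value for it, and from then on prefers the action leading to the desirable situation.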

Neuroevolution

Neuroevolution can create neural network topologies and weights using evolutionary computation. It is competitive with sophisticated gradient descent approaches. One advantage of neuroevolution is that it may be less prone to get caught in "dead ends".[126]

Stochastic neural network

Stochastic neural networks originating from Sherrington–Kirkpatrick models are a type of artificial neural network built by introducing random variations into the network, either by giving the network's artificial neurons stochastic transfer functions, or by giving them stochastic weights. This makes them useful tools for optimization problems, since the random fluctuations help the network escape from local minima.[127] Stochastic neural networks trained using a Bayesian approach are known as Bayesian neural networks.[128]

Other

In a Bayesian framework, a distribution over the set of allowed models is chosen to minimize the cost. Evolutionary methods,[129] gene expression programming,[130] simulated annealing,[131] expectation-maximization, non-parametric methods and particle swarm optimization[132] are other learning algorithms. Convergent recursion is a learning algorithm for cerebellar model articulation controller (CMAC) neural networks.[133][134]

Modes

Two modes of learning are available: stochastic and batch. In stochastic learning, each input creates a weight adjustment. In batch learning weights are adjusted based on a batch of inputs, accumulating errors over the batch. Stochastic learning introduces "noise" into the process, using the local gradient calculated from one data point; this reduces the chance of the network getting stuck in local minima. However, batch learning typically yields a faster, more stable descent to a local minimum, since each update is performed in the direction of the batch's average error. A common compromise is to use "mini-batches", small batches with samples in each batch selected stochastically from the entire data set.
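The three modes differ only in how many samples contribute to each weight adjustment. A minimal sketch of the batching step, with a hypothetical helper name, might look like this:

```python
import random


def minibatches(samples, batch_size, rng):
    """Yield batches drawn in random order from the whole data set.

    batch_size = 1 corresponds to stochastic learning and
    batch_size = len(samples) to batch learning.
    """
    indices = list(range(len(samples)))
    rng.shuffle(indices)
    for start in range(0, len(indices), batch_size):
        yield [samples[i] for i in indices[start:start + batch_size]]


# Ten samples split into mini-batches of four; the last batch is smaller.
batches = list(minibatches(list(range(10)), batch_size=4, rng=random.Random(0)))
```

In a training loop, the gradient would be averaged over each yielded batch before the weights are adjusted.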

Types

ANNs have evolved into a broad family of techniques that have advanced the state of the art across multiple domains. The simplest types have one or more static components, including number of units, number of layers, unit weights and topology. Dynamic types allow one or more of these to evolve via learning. The latter is much more complicated but can shorten learning periods and produce better results. Some types allow/require learning to be "supervised" by the operator, while others operate independently. Some types operate purely in hardware, while others are purely software and run on general purpose computers.

Some of the main breakthroughs include: convolutional neural networks that have proven particularly successful in processing visual and other two-dimensional data;[135][136] long short-term memory networks, which avoid the vanishing gradient problem[137] and can handle signals that have a mix of low and high frequency components, aiding large-vocabulary speech recognition,[138][139] text-to-speech synthesis,[140][41][141] and photo-real talking heads;[142] and competitive networks such as generative adversarial networks, in which multiple networks (of varying structure) compete with each other on tasks such as winning a game[143] or deceiving the opponent about the authenticity of an input.[71]

Network design

Neural architecture search (NAS) uses machine learning to automate ANN design. Various approaches to NAS have designed networks that compare well with hand-designed systems. The basic search algorithm is to propose a candidate model, evaluate it against a dataset, and use the results as feedback to teach the NAS network.[144] Available systems include AutoML and AutoKeras.[145] The scikit-learn library provides functions to help with building a deep network from scratch, and a deep network can then be implemented with TensorFlow or Keras.

Design issues include deciding the number, type, and connectedness of network layers, as well as the size of each and the connection type (full, pooling, etc.).

Hyperparameters must also be defined as part of the design (they are not learned), governing matters such as how many neurons are in each layer, learning rate, step, stride, depth, receptive field and padding (for CNNs), etc.[146]
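For CNNs, several of these hyperparameters jointly determine the size of each layer's output. The sketch below uses the standard relation between input size, receptive field (kernel) size, stride and padding along one spatial dimension; the function name is hypothetical.

```python
def conv_output_size(input_size, kernel_size, stride=1, padding=0):
    """Output length along one dimension of a convolution or pooling layer."""
    if stride < 1:
        raise ValueError("stride must be at least 1")
    span = input_size + 2 * padding - kernel_size
    if span < 0:
        raise ValueError("kernel does not fit inside the padded input")
    return span // stride + 1


# A 32-pixel-wide input with a 5-wide kernel, stride 1 and no padding
# gives 28 outputs; a 2-wide window with stride 2 then halves that to 14.
after_conv = conv_output_size(32, kernel_size=5)
after_pool = conv_output_size(after_conv, kernel_size=2, stride=2)
```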

The Python code snippet provides an overview of the training function, which uses the training dataset, number of hidden layer units, learning rate, and number of iterations as parameters:

```python
import numpy as np


# Helper functions referenced by train(); assumed here to be the
# standard logistic function and its derivative.
def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)


def train(X, y, n_hidden, learning_rate, n_iter):
    # X has shape (m, n_input); y is expected to have shape (m, 1)
    m, n_input = X.shape
    # 1. randomly initialize weights and biases
    w1 = np.random.randn(n_input, n_hidden)
    b1 = np.zeros((1, n_hidden))
    w2 = np.random.randn(n_hidden, 1)
    b2 = np.zeros((1, 1))
    # 2. in each iteration, feed all layers with the latest weights and biases
    for i in range(n_iter + 1):
        # forward pass: sigmoid hidden layer, linear output layer
        z2 = np.dot(X, w1) + b1
        a2 = sigmoid(z2)
        z3 = np.dot(a2, w2) + b2
        a3 = z3
        # backward pass: propagate the output error back through the layers
        dz3 = a3 - y
        dw2 = np.dot(a2.T, dz3)
        db2 = np.sum(dz3, axis=0, keepdims=True)
        dz2 = np.dot(dz3, w2.T) * sigmoid_derivative(z2)
        dw1 = np.dot(X.T, dz2)
        db1 = np.sum(dz2, axis=0, keepdims=True)
        # 3. update weights and biases with gradients
        w1 -= learning_rate * dw1 / m
        w2 -= learning_rate * dw2 / m
        b1 -= learning_rate * db1 / m
        b2 -= learning_rate * db2 / m
        if i % 1000 == 0:
            print("Epoch", i, "loss: ", np.mean(np.square(dz3)))
    model = {"w1": w1, "b1": b1, "w2": w2, "b2": b2}
    return model
```

Use

Using artificial neural networks requires an understanding of their characteristics.

- Choice of model: This depends on the data representation and the application. Overly complex models learn slowly.

- Learning algorithm: Numerous trade-offs exist between learning algorithms. Almost any algorithm will work well with the correct hyperparameters[147] for training on a particular data set. However, selecting and tuning an algorithm for training on unseen data requires significant experimentation.

- Robustness: If the model, cost function and learning algorithm are selected appropriately, the resulting ANN can become robust.

ANN capabilities fall within the following broad categories:[148]

- Function approximation,[149] or regression analysis,[150] including time series prediction, fitness approximation[151] and modeling.

- Classification, including pattern and sequence recognition, novelty detection and sequential decision making.[152]

- Data processing,[153] including filtering, clustering, blind source separation[154] and compression.

- Robotics, including directing manipulators and prostheses.

Applications

Because of their ability to reproduce and model nonlinear processes, artificial neural networks have found applications in many disciplines. Application areas include system identification and control (vehicle control, trajectory prediction,[155] process control, natural resource management), quantum chemistry,[156] general game playing,[157] pattern recognition (radar systems, face identification, signal classification,[158] 3D reconstruction,[159] object recognition and more), sensor data analysis,[160] sequence recognition (gesture, speech, handwritten and printed text recognition[161]), medical diagnosis, finance[162] (e.g. ex-ante models for specific financial long-run forecasts and artificial financial markets), data mining, visualization, machine translation, social network filtering[163] and e-mail spam filtering. ANNs have been used to diagnose several types of cancers[164][165] and to distinguish highly invasive cancer cell lines from less invasive lines using only cell shape information.[166][167]

ANNs have been used to accelerate reliability analysis of infrastructures subject to natural disasters[168][169] and to predict foundation settlements.[170] ANNs that model rainfall-runoff can also help to mitigate floods.[171] ANNs have also been used for building black-box models in geoscience: hydrology,[172][173] ocean modelling and coastal engineering,[174][175] and geomorphology.[176] ANNs have been employed in cybersecurity, with the objective of discriminating between legitimate and malicious activities. For example, machine learning has been used for classifying Android malware,[177] for identifying domains belonging to threat actors and for detecting URLs posing a security risk.[178] Research is underway on ANN systems designed for penetration testing and for detecting botnets,[179] credit card fraud[180] and network intrusions.

ANNs have been proposed as a tool to solve partial differential equations in physics[181][182][183] and simulate the properties of many-body open quantum systems.[184][185][186][187] In brain research, ANNs have been used to study the short-term behavior of individual neurons,[188] how the dynamics of neural circuitry arise from interactions between individual neurons, and how behavior can arise from abstract neural modules that represent complete subsystems. Studies have considered long- and short-term plasticity of neural systems and their relation to learning and memory from the individual neuron to the system level.

Theoretical properties

Computational power

The multilayer perceptron is a universal function approximator, as proven by the universal approximation theorem. However, the proof is not constructive regarding the number of neurons required, the network topology, the weights and the learning parameters.
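The theorem is an existence statement, so no code can demonstrate it in general, but a small experiment can illustrate the idea. The sketch below is an assumption-laden toy: it fixes random hidden-layer weights and fits only the output weights by least squares (a random-feature shortcut, not backpropagation), with `sin` as an arbitrarily chosen target. The names `fit_mse`, `hidden` and the weight scales are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
x = np.linspace(-np.pi, np.pi, 200).reshape(-1, 1)
target = np.sin(x)

n_hidden = 50
w = rng.normal(scale=2.0, size=(1, n_hidden))        # fixed random input weights
b = rng.uniform(-np.pi, np.pi, size=(1, n_hidden))   # fixed random hidden biases
hidden = np.tanh(x @ w + b)                           # activations, shape (200, 50)

def fit_mse(k):
    """Fit output weights for the first k hidden units; return the training MSE."""
    H = np.hstack([hidden[:, :k], np.ones((len(x), 1))])  # extra column = output bias
    coef, *_ = np.linalg.lstsq(H, target, rcond=None)
    return float(np.mean((H @ coef - target) ** 2))

for k in (2, 10, 50):
    print(k, "hidden units -> training MSE", fit_mse(k))
```

Because each larger model contains the smaller one, the training error cannot increase as hidden units are added. How many units a given accuracy requires is exactly what the theorem leaves open.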

A specific recurrent architecture with rational-valued weights (as opposed to full precision real number-valued weights) has the power of a universal Turing machine,[189] using a finite number of neurons and standard linear connections. Further, the use of irrational values for weights results in a machine with super-Turing power.[190][191]

Capacity

A model's "capacity" property corresponds to its ability to model any given function. It is related to the amount of information that can be stored in the network and to the notion of complexity. Two notions of capacity are commonly used: the information capacity and the VC dimension. The information capacity of a perceptron is discussed at length in Sir David MacKay's book,[192] which summarizes work by Thomas Cover.[193] The capacity of a network of standard neurons (not convolutional) can be derived by four rules[194] that derive from understanding a neuron as an electrical element. The information capacity captures the functions modelable by the network given any data as input. The second notion is the VC dimension, which uses the principles of measure theory and finds the maximum capacity under the best possible circumstances, that is, given input data in a specific form. As noted in MacKay's book,[192] the VC dimension for arbitrary inputs is half the information capacity of a perceptron. The VC dimension for arbitrary points is sometimes referred to as memory capacity.[195]
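The factor of two between the two notions can be illustrated with Cover's function-counting result for a single threshold unit. The sketch below assumes points in general position and a unit with `dim` weights and no separate bias term. The function name `cover_count` is hypothetical, and the formula is the standard statement of Cover's theorem rather than something given in the text above.

```python
from math import comb

def cover_count(num_points, dim):
    """Number of linearly separable labelings of points in general position.

    Cover's counting function for a threshold unit with `dim` weights:
    C(P, N) = 2 * sum_{k=0}^{N-1} binom(P - 1, k).
    """
    return 2 * sum(comb(num_points - 1, k) for k in range(dim))

dim = 10
for num_points in (5, 10, 20, 40):
    fraction = cover_count(num_points, dim) / 2 ** num_points
    print(f"P={num_points:3d}  separable fraction={fraction:.4f}")
```

Under these assumptions every labeling is realizable up to P = N points, while at P = 2N exactly half of all labelings are, which is the sense in which one capacity figure is twice the other.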

Convergence

Models may not consistently converge on a single solution, firstly because local minima may exist, depending on the cost function and the model. Secondly, the optimization method used might not be guaranteed to converge when it begins far from any local minimum. Thirdly, for sufficiently large data or parameters, some methods become impractical.
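The first point can be seen on a one-dimensional toy cost. The sketch below assumes an arbitrary quartic with two minima (not taken from any cited source) and plain gradient descent with a fixed step. The function names and the starting points are illustrative choices.

```python
def f(x):
    """Toy cost with two minima of different depth."""
    return x**4 - 3 * x**2 + x

def df(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x, learning_rate=0.01, n_steps=2000):
    for _ in range(n_steps):
        x -= learning_rate * df(x)
    return x

x_left = gradient_descent(-2.0)    # ends in the deeper minimum
x_right = gradient_descent(2.0)    # ends in the shallower local minimum
print(x_left, f(x_left))
print(x_right, f(x_right))
```

Both runs stop where the gradient vanishes, but only one of them reaches the lower cost, so the result depends on the initialization.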

Another issue worth mentioning is that training may pass through a saddle point, which may steer convergence in the wrong direction.
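A minimal illustration of the saddle-point issue, assuming the textbook function f(x, y) = x² − y² (chosen here for simplicity; it is unbounded below, so "escaping" simply means moving away along y). The helper names are hypothetical.

```python
def grad(x, y):
    """Gradient of f(x, y) = x**2 - y**2, which has a saddle point at the origin."""
    return 2 * x, -2 * y

def descend(x, y, learning_rate=0.1, n_steps=200):
    for _ in range(n_steps):
        gx, gy = grad(x, y)
        x -= learning_rate * gx
        y -= learning_rate * gy
    return x, y

stuck = descend(1.0, 0.0)      # starts exactly on the line y = 0
escaped = descend(1.0, 1e-6)   # tiny perturbation off that line
print(stuck, escaped)
```

Started exactly on the line y = 0, gradient descent is drawn into the saddle, where the gradient vanishes even though the point is not a minimum. A tiny perturbation is enough to leave it, though progress near the saddle is slow at first.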

The convergence behavior of certain types of ANN architectures is better understood than that of others. When the width of the network approaches infinity, the ANN is well described by its first-order Taylor expansion throughout training, and so inherits the convergence behavior of affine models.[196][197] Another example is that when parameters are small, ANNs are often observed to fit target functions from low to high frequencies. This behavior is referred to as the spectral bias, or frequency principle, of neural networks.[198][199][200][201] This phenomenon is the opposite of the behavior of some well-studied iterative numerical schemes such as the Jacobi method. Deeper neural networks have been observed to be more biased towards low-frequency functions.[202]

Generalization and statistics

Applications whose goal is to create a system that generalizes well to unseen examples face the possibility of over-training. This arises in convoluted or over-specified systems when the network capacity significantly exceeds the needed free parameters. Two approaches address over-training. The first is to use cross-validation and similar techniques to check for the presence of over-training and to select hyperparameters to minimize the generalization error.

The second is to use some form of regularization. This concept emerges in a probabilistic (Bayesian) framework, where regularization can be performed by selecting a larger prior probability over simpler models; but also in statistical learning theory, where the goal is to minimize over two quantities: the 'empirical risk' and the 'structural risk', which roughly correspond to the error over the training set and the predicted error in unseen data due to overfitting.
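Both approaches can be combined: cross-validation can choose the strength of a regularizer. The sketch below uses a linear model with an L2 penalty instead of a neural network, purely to keep the example short. The synthetic data, the candidate values and the helper names `ridge_fit` and `cv_error` are assumptions for illustration.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form L2-regularized least squares: (X^T X + lam * I)^-1 X^T y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

def cv_error(X, y, lam, n_folds=5):
    """Mean validation MSE over n_folds contiguous folds."""
    folds = np.array_split(np.arange(len(X)), n_folds)
    errors = []
    for val_idx in folds:
        train_mask = np.ones(len(X), dtype=bool)
        train_mask[val_idx] = False            # hold this fold out
        w = ridge_fit(X[train_mask], y[train_mask], lam)
        errors.append(np.mean((X[val_idx] @ w - y[val_idx]) ** 2))
    return float(np.mean(errors))

rng = np.random.default_rng(0)
X = rng.normal(size=(40, 30))                  # few samples, many features
true_w = np.zeros(30)
true_w[:3] = [2.0, -1.0, 0.5]
y = X @ true_w + rng.normal(scale=0.5, size=40)

candidates = [1e-3, 1e-2, 1e-1, 1.0, 10.0, 100.0]
scores = {lam: cv_error(X, y, lam) for lam in candidates}
best_lam = min(scores, key=scores.get)
print(scores)
print("selected penalty:", best_lam)
```

A larger penalty shrinks the weights towards zero, which corresponds to preferring simpler models. The held-out folds estimate how that preference affects error on unseen data.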

Supervised neural networks that use a mean squared error (MSE) cost function can use formal statistical methods to determine the confidence of the trained model. The MSE on a validation set can be used as an estimate for variance. This value can then be used to calculate the confidence interval of network output, assuming a normal distribution. A confidence analysis made this way is statistically valid as long as the output probability distribution stays the same and the network is not modified.
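As a rough sketch of this procedure, the validation MSE can be treated as an estimate of the error variance and turned into an interval around a new output. The function name `output_interval`, the sample numbers and the choice of z = 1.96 (about 95% under the normality assumption) are illustrative assumptions.

```python
import numpy as np

def output_interval(y_pred, val_targets, val_preds, z=1.96):
    """Interval y_pred +/- z * sqrt(validation MSE), assuming normal errors."""
    residuals = np.asarray(val_targets) - np.asarray(val_preds)
    mse = np.mean(residuals ** 2)          # used as the variance estimate
    half_width = z * np.sqrt(mse)
    return y_pred - half_width, y_pred + half_width

val_targets = np.array([1.0, 2.0, 3.0, 4.0])
val_preds = np.array([1.1, 1.9, 3.2, 3.8])
low, high = output_interval(2.5, val_targets, val_preds)
print(low, high)
```

The interval is only meaningful under the conditions stated above: the output distribution must not drift and the network must not change after the validation error was measured.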

By assigning a softmax activation function, a generalization of the logistic function, on the output layer of the neural network (or a softmax component in a component-based network) for categorical target variables, the outputs can be interpreted as posterior probabilities. This is useful in classification as it gives a certainty measure on classifications.

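The softmax activation function is:

$$y_i = \frac{e^{x_i}}{\sum_{j=1}^{c} e^{x_j}}$$

where $x_i$ is the input to output unit $i$ and $c$ is the number of classes.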
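A softmax can be sketched in a few lines of NumPy. Subtracting the maximum before exponentiating is a common numerical-stability measure added here as an assumption; it does not change the result because softmax is invariant to adding a constant to all inputs.

```python
import numpy as np

def softmax(x):
    """Softmax over the last axis, shifted by the maximum to avoid overflow."""
    x = np.asarray(x, dtype=float)
    shifted = x - np.max(x, axis=-1, keepdims=True)
    exps = np.exp(shifted)
    return exps / np.sum(exps, axis=-1, keepdims=True)

probs = softmax([2.0, 1.0, 0.1])
print(probs, probs.sum())
```

The outputs are non-negative and sum to one, which is what allows them to be read as class probabilities.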

Criticism

Training

A common criticism of neural networks, particularly in robotics, is that they require too much training for real-world operation.[203] Potential solutions include randomly shuffling training examples, using a numerical optimization algorithm that does not take overly large steps when changing the network connections following an example, grouping examples in so-called mini-batches, and/or introducing a recursive least squares algorithm for CMAC.[133]
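Shuffling and mini-batching are simple to express in code. The generator below is a minimal sketch. The name `minibatches` and the toy arrays are assumptions, and a real training loop would call it once per epoch and apply a gradient update for each batch.

```python
import numpy as np

def minibatches(X, y, batch_size, rng):
    """Yield (X_batch, y_batch) pairs covering the data once in random order."""
    order = rng.permutation(len(X))               # fresh shuffle on every call
    for start in range(0, len(X), batch_size):
        idx = order[start:start + batch_size]
        yield X[idx], y[idx]

rng = np.random.default_rng(0)
X = np.arange(20, dtype=float).reshape(10, 2)
y = np.arange(10, dtype=float)

batches = list(minibatches(X, y, batch_size=4, rng=rng))
print([len(xb) for xb, _ in batches])   # → [4, 4, 2]
```

Each example appears exactly once per pass, and the last batch is smaller when the batch size does not divide the dataset size.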

Theory

A central claim of ANNs is that they embody new and powerful general principles for processing information. These principles are ill-defined. It is often claimed that they are emergent from the network itself. This allows simple statistical association (the basic function of artificial neural networks) to be described as learning or recognition. In 1997, Alexander Dewdney commented that, as a result, artificial neural networks have a "something-for-nothing quality, one that imparts a peculiar aura of laziness and a distinct lack of curiosity about just how good these computing systems are. No human hand (or mind) intervenes; solutions are found as if by magic; and no one, it seems, has learned anything".[204] One response to Dewdney is that neural networks handle many complex and diverse tasks, ranging from autonomously flying aircraft[205] to detecting credit card fraud to mastering the game of Go.

Technology writer Roger Bridgman commented:

Neural networks, for instance, are in the dock not only because they have been hyped to high heaven, (what hasn't?) but also because you could create a successful net without understanding how it worked: the bunch of numbers that captures its behaviour would in all probability be "an opaque, unreadable table...valueless as a scientific resource".

In spite of his emphatic declaration that science is not technology, Dewdney seems here to pillory neural nets as bad science when most of those devising them are just trying to be good engineers. An unreadable table that a useful machine could read would still be well worth having.[206]

Biological brains use both shallow and deep circuits as reported by brain anatomy,[207] displaying a wide variety of invariance. Weng[208] argued that the brain self-wires largely according to signal statistics and therefore, a serial cascade cannot catch all major statistical dependencies.

Hardware

Large and effective neural networks require considerable computing resources.[209] While the brain has hardware tailored to the task of processing signals through a graph of neurons, simulating even a simplified neuron on von Neumann architecture may consume vast amounts of memory and storage. Furthermore, the designer often needs to transmit signals through many of these connections and their associated neurons, which requires enormous CPU power and time.

Schmidhuber noted that the resurgence of neural networks in the twenty-first century is largely attributable to advances in hardware: from 1991 to 2015, computing power, especially as delivered by GPGPUs (on GPUs), has increased around a million-fold, making the standard backpropagation algorithm feasible for training networks that are several layers deeper than before.[22] The use of accelerators such as FPGAs and GPUs can reduce training times from months to days.[209]

Neuromorphic engineering or a physical neural network addresses the hardware difficulty directly, by constructing non-von-Neumann chips to directly implement neural networks in circuitry. Another type of chip optimized for neural network processing is called a Tensor Processing Unit, or TPU.[210]

Practical counterexamples

Analyzing what has been learned by an ANN is much easier than analyzing what has been learned by a biological neural network. Furthermore, researchers involved in exploring learning algorithms for neural networks are gradually uncovering general principles that allow a learning machine to be successful. Examples include local vs. non-local learning and shallow vs. deep architecture.[211]

Gallery

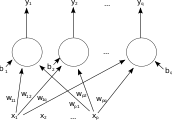

A single-layer feedforward artificial neural network with p inputs and q outputs. Some arrows are omitted for clarity.

A two-layer feedforward artificial neural network

An artificial neural network

An ANN dependency graph

A single-layer feedforward artificial neural network with 4 inputs, 6 hidden nodes and 2 outputs. Given position state and direction, it outputs wheel based control values.

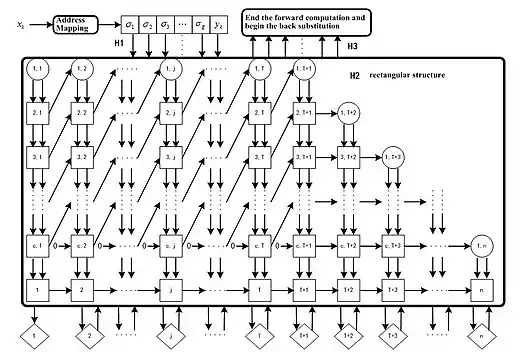

A two-layer feedforward artificial neural network with 8 inputs, 2x8 hidden nodes and 2 outputs. Given position state, direction and other environment values, it outputs thruster based control values.

Parallel pipeline structure of CMAC neural network. This learning algorithm can converge in one step.

See also

- ADALINE

- Autoencoder

- Bio-inspired computing

- Blue Brain Project

- Catastrophic interference

- Cognitive architecture

- Connectionist expert system

- Connectomics

- Hyperdimensional computing

- Large width limits of neural networks

- List of machine learning concepts

- Neural gas

- Neural network software

- Optical neural network

- Parallel distributed processing

- Philosophy of artificial intelligence

- Quantum neural network

- Recurrent neural networks

- Spiking neural network

- Stochastic parrot

- Tensor product network

Notes

- Steering for the 1995 "No Hands Across America" required "only a few human assists".

References

- Hardesty, Larry (14 April 2017). "Explained: Neural networks". MIT News Office. Retrieved 2 June 2022.

- Yang, Z.R.; Yang, Z. (2014). Comprehensive Biomedical Physics. Karolinska Institute, Stockholm, Sweden: Elsevier. p. 1. ISBN 978-0-444-53633-4. Archived from the original on 28 July 2022. Retrieved 28 July 2022.

- Ferrie, C., & Kaiser, S. (2019). Neural Networks for Babies. Sourcebooks. ISBN 978-1492671206.

- Mansfield Merriman, "A List of Writings Relating to the Method of Least Squares"

- Stigler, Stephen M. (1981). "Gauss and the Invention of Least Squares". Ann. Stat. 9 (3): 465–474. doi:10.1214/aos/1176345451.

- Bretscher, Otto (1995). Linear Algebra With Applications (3rd ed.). Upper Saddle River, NJ: Prentice Hall.

- Schmidhuber, Juergen (2022). "Annotated History of Modern AI and Deep Learning". arXiv:2212.11279 [cs.NE].

- Stigler, Stephen M. (1986). The History of Statistics: The Measurement of Uncertainty before 1900. Cambridge: Harvard. ISBN 0-674-40340-1.

- Brush, Stephen G. (1967). "History of the Lenz-Ising Model". Reviews of Modern Physics. 39 (4): 883–893. Bibcode:1967RvMP...39..883B. doi:10.1103/RevModPhys.39.883.

- Amari, Shun-Ichi (1972). "Learning patterns and pattern sequences by self-organizing nets of threshold elements". IEEE Transactions. C (21): 1197–1206.

- Hopfield, J. J. (1982). "Neural networks and physical systems with emergent collective computational abilities". Proceedings of the National Academy of Sciences. 79 (8): 2554–2558. Bibcode:1982PNAS...79.2554H. doi:10.1073/pnas.79.8.2554. PMC 346238. PMID 6953413.

- McCulloch, Warren; Walter Pitts (1943). "A Logical Calculus of Ideas Immanent in Nervous Activity". Bulletin of Mathematical Biophysics. 5 (4): 115–133. doi:10.1007/BF02478259.

- Kleene, S.C. (1956). "Representation of Events in Nerve Nets and Finite Automata". Annals of Mathematics Studies. No. 34. Princeton University Press. pp. 3–41. Retrieved 17 June 2017.

- Hebb, Donald (1949). The Organization of Behavior. New York: Wiley. ISBN 978-1-135-63190-1.

- Farley, B.G.; W.A. Clark (1954). "Simulation of Self-Organizing Systems by Digital Computer". IRE Transactions on Information Theory. 4 (4): 76–84. doi:10.1109/TIT.1954.1057468.

- Haykin (2008) Neural Networks and Learning Machines, 3rd edition

- Rosenblatt, F. (1958). "The Perceptron: A Probabilistic Model For Information Storage And Organization in the Brain". Psychological Review. 65 (6): 386–408. CiteSeerX 10.1.1.588.3775. doi:10.1037/h0042519. PMID 13602029. S2CID 12781225.

- Werbos, P.J. (1975). Beyond Regression: New Tools for Prediction and Analysis in the Behavioral Sciences.

- Rosenblatt, Frank (1957). "The Perceptron—a perceiving and recognizing automaton". Report 85-460-1. Cornell Aeronautical Laboratory.

- Olazaran, Mikel (1996). "A Sociological Study of the Official History of the Perceptrons Controversy". Social Studies of Science. 26 (3): 611–659. doi:10.1177/030631296026003005. JSTOR 285702. S2CID 16786738.

- Minsky, Marvin; Papert, Seymour (1969). Perceptrons: An Introduction to Computational Geometry. MIT Press. ISBN 978-0-262-63022-1.

- Schmidhuber, J. (2015). "Deep Learning in Neural Networks: An Overview". Neural Networks. 61: 85–117. arXiv:1404.7828. doi:10.1016/j.neunet.2014.09.003. PMID 25462637. S2CID 11715509.

- Ivakhnenko, A. G. (1973). Cybernetic Predicting Devices. CCM Information Corporation.

- Ivakhnenko, A. G.; Lapa, Valentin Grigorʹevich (1967). Cybernetics and forecasting techniques. American Elsevier Pub. Co.

- Robbins, H.; Monro, S. (1951). "A Stochastic Approximation Method". The Annals of Mathematical Statistics. 22 (3): 400. doi:10.1214/aoms/1177729586.

- Amari, Shun'ichi (1967). "A theory of adaptive pattern classifier". IEEE Transactions. EC (16): 279–307.

- Kohonen, Teuvo; Honkela, Timo (2007). "Kohonen Network". Scholarpedia. 2 (1): 1568. Bibcode:2007SchpJ...2.1568K. doi:10.4249/scholarpedia.1568.

- Kohonen, Teuvo (1982). "Self-Organized Formation of Topologically Correct Feature Maps". Biological Cybernetics. 43 (1): 59–69. doi:10.1007/bf00337288. S2CID 206775459.

- Von der Malsburg, C (1973). "Self-organization of orientation sensitive cells in the striate cortex". Kybernetik. 14 (2): 85–100. doi:10.1007/bf00288907. PMID 4786750. S2CID 3351573.

- Fukushima, Kunihiko (1980). "Neocognitron: A Self-organizing Neural Network Model for a Mechanism of Pattern Recognition Unaffected by Shift in Position" (PDF). Biological Cybernetics. 36 (4): 193–202. doi:10.1007/BF00344251. PMID 7370364. S2CID 206775608. Retrieved 16 November 2013.

- Fukushima, K. (1969). "Visual feature extraction by a multilayered network of analog threshold elements". IEEE Transactions on Systems Science and Cybernetics. 5 (4): 322–333. doi:10.1109/TSSC.1969.300225.

- Ramachandran, Prajit; Barret, Zoph; Quoc, V. Le (16 October 2017). "Searching for Activation Functions". arXiv:1710.05941 [cs.NE].

- Leibniz, Gottfried Wilhelm Freiherr von (1920). The Early Mathematical Manuscripts of Leibniz: Translated from the Latin Texts Published by Carl Immanuel Gerhardt with Critical and Historical Notes (Leibniz published the chain rule in a 1676 memoir). Open court publishing Company. ISBN 9780598818461.

- Linnainmaa, Seppo (1970). The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors (Masters) (in Finnish). University of Helsinki. pp. 6–7.

- Linnainmaa, Seppo (1976). "Taylor expansion of the accumulated rounding error". BIT Numerical Mathematics. 16 (2): 146–160. doi:10.1007/bf01931367. S2CID 122357351.

- Griewank, Andreas (2012). "Who Invented the Reverse Mode of Differentiation?". Optimization Stories. Documenta Matematica, Extra Volume ISMP. pp. 389–400. S2CID 15568746.

- Griewank, Andreas; Walther, Andrea (2008). Evaluating Derivatives: Principles and Techniques of Algorithmic Differentiation, Second Edition. SIAM. ISBN 978-0-89871-776-1.

- Rosenblatt, Frank (1962). Principles of Neurodynamics. Spartan, New York.

- Kelley, Henry J. (1960). "Gradient theory of optimal flight paths". ARS Journal. 30 (10): 947–954. doi:10.2514/8.5282.

- "A gradient method for optimizing multi-stage allocation processes". Proceedings of the Harvard Univ. Symposium on digital computers and their applications. April 1961.

- Schmidhuber, Jürgen (2015). "Deep Learning". Scholarpedia. 10 (11): 85–117. Bibcode:2015SchpJ..1032832S. doi:10.4249/scholarpedia.32832.

- Dreyfus, Stuart E. (1 September 1990). "Artificial neural networks, back propagation, and the Kelley-Bryson gradient procedure". Journal of Guidance, Control, and Dynamics. 13 (5): 926–928. Bibcode:1990JGCD...13..926D. doi:10.2514/3.25422. ISSN 0731-5090.

- Mizutani, E.; Dreyfus, S.E.; Nishio, K. (2000). "On derivation of MLP backpropagation from the Kelley-Bryson optimal-control gradient formula and its application". Proceedings of the IEEE-INNS-ENNS International Joint Conference on Neural Networks. IJCNN 2000. Neural Computing: New Challenges and Perspectives for the New Millennium. IEEE. pp. 167–172 vol.2. doi:10.1109/ijcnn.2000.857892. ISBN 0-7695-0619-4. S2CID 351146.

- Dreyfus, Stuart (1973). "The computational solution of optimal control problems with time lag". IEEE Transactions on Automatic Control. 18 (4): 383–385. doi:10.1109/tac.1973.1100330.

- Werbos, Paul (1982). "Applications of advances in nonlinear sensitivity analysis" (PDF). System modeling and optimization. Springer. pp. 762–770. Archived (PDF) from the original on 14 April 2016. Retrieved 2 July 2017.

- David E. Rumelhart, Geoffrey E. Hinton & Ronald J. Williams, "Learning representations by back-propagating errors," Nature, 323, pages 533–536, 1986. Archived from the original on 8 March 2021.

- Waibel, Alex (December 1987). Phoneme Recognition Using Time-Delay Neural Networks. Meeting of the Institute of Electrical, Information and Communication Engineers (IEICE). Tokyo, Japan.

- Alexander Waibel et al., "Phoneme Recognition Using Time-Delay Neural Networks," IEEE Transactions on Acoustics, Speech, and Signal Processing, Volume 37, No. 3, pp. 328–339, March 1989.

- Zhang, Wei (1988). "Shift-invariant pattern recognition neural network and its optical architecture". Proceedings of Annual Conference of the Japan Society of Applied Physics.

- Zhang, Wei (1990). "Parallel distributed processing model with local space-invariant interconnections and its optical architecture". Applied Optics. 29 (32): 4790–7. Bibcode:1990ApOpt..29.4790Z. doi:10.1364/AO.29.004790. PMID 20577468.

- LeCun et al., "Backpropagation Applied to Handwritten Zip Code Recognition," Neural Computation, 1, pp. 541–551, 1989.

- J. Weng, N. Ahuja and T. S. Huang, "Cresceptron: a self-organizing neural network which grows adaptively," Proc. International Joint Conference on Neural Networks, Baltimore, Maryland, vol I, pp. 576–581, June 1992. Archived from the original on 21 September 2017.

- J. Weng, N. Ahuja and T. S. Huang, "Learning recognition and segmentation of 3-D objects from 2-D images," Proc. 4th International Conf. Computer Vision, Berlin, Germany, pp. 121–128, May 1993. Archived from the original on 21 September 2017.

- J. Weng, N. Ahuja and T. S. Huang, "Learning recognition and segmentation using the Cresceptron," International Journal of Computer Vision, vol. 25, no. 2, pp. 105–139, Nov. 1997. Archived from the original on 25 January 2021.

- LeCun, Yann; Léon Bottou; Yoshua Bengio; Patrick Haffner (1998). "Gradient-based learning applied to document recognition" (PDF). Proceedings of the IEEE. 86 (11): 2278–2324. CiteSeerX 10.1.1.32.9552. doi:10.1109/5.726791. S2CID 14542261. Retrieved 7 October 2016.

- Qian, Ning, and Terrence J. Sejnowski. "Predicting the secondary structure of globular proteins using neural network models." Journal of molecular biology 202, no. 4 (1988): 865-884.

- Bohr, Henrik, Jakob Bohr, Søren Brunak, Rodney MJ Cotterill, Benny Lautrup, Leif Nørskov, Ole H. Olsen, and Steffen B. Petersen. "Protein secondary structure and homology by neural networks The α-helices in rhodopsin." FEBS letters 241, (1988): 223-228

- Rost, Burkhard, and Chris Sander. "Prediction of protein secondary structure at better than 70% accuracy." Journal of molecular biology 232, no. 2 (1993): 584-599.

- Schmidhuber, Jürgen (1992). "Learning complex, extended sequences using the principle of history compression" (PDF). Neural Computation. 4 (2): 234–242. doi:10.1162/neco.1992.4.2.234. S2CID 18271205.

- Schmidhuber, Jürgen (1993). Habilitation Thesis (PDF).

- Schmidhuber, Jürgen (1 November 1992). "Learning to control fast-weight memories: an alternative to recurrent nets". Neural Computation. 4 (1): 131–139. doi:10.1162/neco.1992.4.1.131. S2CID 16683347.

- Schlag, Imanol; Irie, Kazuki; Schmidhuber, Jürgen (2021). "Linear Transformers Are Secretly Fast Weight Programmers". ICML 2021. Springer. pp. 9355–9366.

- Choromanski, Krzysztof; Likhosherstov, Valerii; Dohan, David; Song, Xingyou; Gane, Andreea; Sarlos, Tamas; Hawkins, Peter; Davis, Jared; Mohiuddin, Afroz; Kaiser, Lukasz; Belanger, David; Colwell, Lucy; Weller, Adrian (2020). "Rethinking Attention with Performers". arXiv:2009.14794 [cs.CL].

- Schmidhuber, Jürgen (1993). "Reducing the ratio between learning complexity and number of time-varying variables in fully recurrent nets". ICANN 1993. Springer. pp. 460–463.

- Vaswani, Ashish; Shazeer, Noam; Parmar, Niki; Uszkoreit, Jakob; Jones, Llion; Gomez, Aidan N.; Kaiser, Lukasz; Polosukhin, Illia (12 June 2017). "Attention Is All You Need". arXiv:1706.03762 [cs.CL].

- Wolf, Thomas; Debut, Lysandre; Sanh, Victor; Chaumond, Julien; Delangue, Clement; Moi, Anthony; Cistac, Pierric; Rault, Tim; Louf, Remi; Funtowicz, Morgan; Davison, Joe; Shleifer, Sam; von Platen, Patrick; Ma, Clara; Jernite, Yacine; Plu, Julien; Xu, Canwen; Le Scao, Teven; Gugger, Sylvain; Drame, Mariama; Lhoest, Quentin; Rush, Alexander (2020). "Transformers: State-of-the-Art Natural Language Processing". Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations. pp. 38–45. doi:10.18653/v1/2020.emnlp-demos.6. S2CID 208117506.

- He, Cheng (31 December 2021). "Transformer in CV". Towards Data Science.

- Schmidhuber, Jürgen (1991). "A possibility for implementing curiosity and boredom in model-building neural controllers". Proc. SAB'1991. MIT Press/Bradford Books. pp. 222–227.

- Schmidhuber, Jürgen (2010). "Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010)". IEEE Transactions on Autonomous Mental Development. 2 (3): 230–247. doi:10.1109/TAMD.2010.2056368. S2CID 234198.

- Schmidhuber, Jürgen (2020). "Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991)". Neural Networks. 127: 58–66. arXiv:1906.04493. doi:10.1016/j.neunet.2020.04.008. PMID 32334341. S2CID 216056336.

- Goodfellow, Ian; Pouget-Abadie, Jean; Mirza, Mehdi; Xu, Bing; Warde-Farley, David; Ozair, Sherjil; Courville, Aaron; Bengio, Yoshua (2014). Generative Adversarial Networks (PDF). Proceedings of the International Conference on Neural Information Processing Systems (NIPS 2014). pp. 2672–2680. Archived (PDF) from the original on 22 November 2019. Retrieved 20 August 2019.

- "Prepare, Don't Panic: Synthetic Media and Deepfakes". witness.org. Archived from the original on 2 December 2020. Retrieved 25 November 2020.

- "GAN 2.0: NVIDIA's Hyperrealistic Face Generator". SyncedReview.com. 14 December 2018. Retrieved 3 October 2019.

- Karras, Tero; Aila, Timo; Laine, Samuli; Lehtinen, Jaakko (1 October 2017). "Progressive Growing of GANs for Improved Quality, Stability, and Variation". arXiv:1710.10196 [cs.NE].

- S. Hochreiter, "Untersuchungen zu dynamischen neuronalen Netzen," Diploma thesis. Institut f. Informatik, Technische Univ. Munich. Advisor: J. Schmidhuber, 1991. Archived from the original on 6 March 2015.

- Hochreiter, S.; et al. (15 January 2001). "Gradient flow in recurrent nets: the difficulty of learning long-term dependencies". In Kolen, John F.; Kremer, Stefan C. (eds.). A Field Guide to Dynamical Recurrent Networks. John Wiley & Sons. ISBN 978-0-7803-5369-5.

- Hochreiter, Sepp; Schmidhuber, Jürgen (1 November 1997). "Long Short-Term Memory". Neural Computation. 9 (8): 1735–1780. doi:10.1162/neco.1997.9.8.1735. ISSN 0899-7667. PMID 9377276. S2CID 1915014.

- Schmidhuber, J. (2015). "Deep Learning in Neural Networks: An Overview". Neural Networks. 61: 85–117. arXiv:1404.7828. doi:10.1016/j.neunet.2014.09.003. PMID 25462637. S2CID 11715509.

- Gers, Felix; Schmidhuber, Jürgen; Cummins, Fred (1999). "Learning to forget: Continual prediction with LSTM". 9th International Conference on Artificial Neural Networks: ICANN '99. Vol. 1999. pp. 850–855. doi:10.1049/cp:19991218. ISBN 0-85296-721-7.

- Srivastava, Rupesh Kumar; Greff, Klaus; Schmidhuber, Jürgen (2 May 2015). "Highway Networks". arXiv:1505.00387 [cs.LG].

- Srivastava, Rupesh K; Greff, Klaus; Schmidhuber, Juergen (2015). "Training Very Deep Networks". Advances in Neural Information Processing Systems. Curran Associates, Inc. 28: 2377–2385.

- He, Kaiming; Zhang, Xiangyu; Ren, Shaoqing; Sun, Jian (2016). Deep Residual Learning for Image Recognition. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Las Vegas, NV, US: IEEE. pp. 770–778. arXiv:1512.03385. doi:10.1109/CVPR.2016.90. ISBN 978-1-4673-8851-1.

- Mead, Carver A.; Ismail, Mohammed (8 May 1989). Analog VLSI Implementation of Neural Systems (PDF). The Kluwer International Series in Engineering and Computer Science. Vol. 80. Norwell, MA: Kluwer Academic Publishers. doi:10.1007/978-1-4613-1639-8. ISBN 978-1-4613-1639-8. Archived (PDF) from the original on 6 November 2019. Retrieved 24 January 2020.

- Domingos, Pedro (22 September 2015). "chapter 4". The Master Algorithm: How the Quest for the Ultimate Learning Machine Will Remake Our World. Basic Books. ISBN 978-0465065707.

- Smolensky, P. (1986). "Information processing in dynamical systems: Foundations of harmony theory.". In D. E. Rumelhart; J. L. McClelland; PDP Research Group (eds.). Parallel Distributed Processing: Explorations in the Microstructure of Cognition. Vol. 1. pp. 194–281. ISBN 978-0-262-68053-0.

- Ng, Andrew; Dean, Jeff (2012). "Building High-level Features Using Large Scale Unsupervised Learning". arXiv:1112.6209 [cs.LG].

- Ian Goodfellow and Yoshua Bengio and Aaron Courville (2016). Deep Learning. MIT Press. Archived from the original on 16 April 2016. Retrieved 1 June 2016.

- Cireşan, Dan Claudiu; Meier, Ueli; Gambardella, Luca Maria; Schmidhuber, Jürgen (21 September 2010). "Deep, Big, Simple Neural Nets for Handwritten Digit Recognition". Neural Computation. 22 (12): 3207–3220. arXiv:1003.0358. doi:10.1162/neco_a_00052. ISSN 0899-7667. PMID 20858131. S2CID 1918673.

- Dominik Scherer, Andreas C. Müller, and Sven Behnke: "Evaluation of Pooling Operations in Convolutional Architectures for Object Recognition," In 20th International Conference Artificial Neural Networks (ICANN), pp. 92–101, 2010. doi:10.1007/978-3-642-15825-4_10. Archived from the original on 3 April 2018.

- 2012 Kurzweil AI Interview with Jürgen Schmidhuber on the eight competitions won by his Deep Learning team 2009–2012. Archived from the original on 31 August 2018.

- "How bio-inspired deep learning keeps winning competitions | KurzweilAI". www.kurzweilai.net. Archived from the original on 31 August 2018. Retrieved 16 June 2017.

- Graves, Alex; Schmidhuber, Jürgen (2009). "Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks" (PDF). In Koller, D.; Schuurmans, Dale; Bengio, Yoshua; Bottou, L. (eds.). Advances in Neural Information Processing Systems 21 (NIPS 2008). Neural Information Processing Systems (NIPS) Foundation. pp. 545–552. ISBN 9781605609492.

- Graves, A.; Liwicki, M.; Fernandez, S.; Bertolami, R.; Bunke, H.; Schmidhuber, J. (May 2009). "A Novel Connectionist System for Unconstrained Handwriting Recognition" (PDF). IEEE Transactions on Pattern Analysis and Machine Intelligence. 31 (5): 855–868. CiteSeerX 10.1.1.139.4502. doi:10.1109/tpami.2008.137. ISSN 0162-8828. PMID 19299860. S2CID 14635907. Archived (PDF) from the original on 2 January 2014. Retrieved 30 July 2014.

- Ciresan, Dan; Meier, U.; Schmidhuber, J. (June 2012). "Multi-column deep neural networks for image classification". 2012 IEEE Conference on Computer Vision and Pattern Recognition. pp. 3642–3649. arXiv:1202.2745. Bibcode:2012arXiv1202.2745C. CiteSeerX 10.1.1.300.3283. doi:10.1109/cvpr.2012.6248110. ISBN 978-1-4673-1228-8. S2CID 2161592.

- Zell, Andreas (2003). "chapter 5.2". Simulation neuronaler Netze [Simulation of Neural Networks] (in German) (1st ed.). Addison-Wesley. ISBN 978-3-89319-554-1. OCLC 249017987.

- Artificial intelligence (3rd ed.). Addison-Wesley Pub. Co. 1992. ISBN 0-201-53377-4.

- Abbod, Maysam F. (2007). "Application of Artificial Intelligence to the Management of Urological Cancer". The Journal of Urology. 178 (4): 1150–1156. doi:10.1016/j.juro.2007.05.122. PMID 17698099.

- Dawson, Christian W. (1998). "An artificial neural network approach to rainfall-runoff modelling". Hydrological Sciences Journal. 43 (1): 47–66. doi:10.1080/02626669809492102.

- "The Machine Learning Dictionary". www.cse.unsw.edu.au. Archived from the original on 26 August 2018. Retrieved 4 November 2009.

- Ciresan, Dan; Meier, Ueli; Masci, Jonathan; Gambardella, Luca M.; Schmidhuber, Jurgen (2011). "Flexible, High Performance Convolutional Neural Networks for Image Classification" (PDF). Proceedings of the Twenty-Second International Joint Conference on Artificial Intelligence, Volume Two. 2: 1237–1242. Archived (PDF) from the original on 5 April 2022. Retrieved 7 July 2022.

- Zell, Andreas (1994). Simulation Neuronaler Netze [Simulation of Neural Networks] (in German) (1st ed.). Addison-Wesley. p. 73. ISBN 3-89319-554-8.

- Miljanovic, Milos (February–March 2012). "Comparative analysis of Recurrent and Finite Impulse Response Neural Networks in Time Series Prediction" (PDF). Indian Journal of Computer and Engineering. 3 (1).

- Lau, Suki (10 July 2017). "A Walkthrough of Convolutional Neural Network – Hyperparameter Tuning". Medium. Archived from the original on 4 February 2023. Retrieved 23 August 2019.

- Kelleher, John D.; Mac Namee, Brian; D'Arcy, Aoife (2020). "7-8". Fundamentals of machine learning for predictive data analytics: algorithms, worked examples, and case studies (2nd ed.). Cambridge, MA. ISBN 978-0-262-36110-1. OCLC 1162184998.

- Wei, Jiakai (26 April 2019). "Forget the Learning Rate, Decay Loss". arXiv:1905.00094 [cs.LG].

- Li, Y.; Fu, Y.; Li, H.; Zhang, S. W. (1 June 2009). "The Improved Training Algorithm of Back Propagation Neural Network with Self-adaptive Learning Rate". 2009 International Conference on Computational Intelligence and Natural Computing. Vol. 1. pp. 73–76. doi:10.1109/CINC.2009.111. ISBN 978-0-7695-3645-3. S2CID 10557754.

- Huang, Guang-Bin; Zhu, Qin-Yu; Siew, Chee-Kheong (2006). "Extreme learning machine: theory and applications". Neurocomputing. 70 (1): 489–501. CiteSeerX 10.1.1.217.3692. doi:10.1016/j.neucom.2005.12.126. S2CID 116858.

- Widrow, Bernard; et al. (2013). "The no-prop algorithm: A new learning algorithm for multilayer neural networks". Neural Networks. 37: 182–188. doi:10.1016/j.neunet.2012.09.020. PMID 23140797.

- Ollivier, Yann; Charpiat, Guillaume (2015). "Training recurrent networks without backtracking". arXiv:1507.07680 [cs.NE].

- Hinton, G. E. (2010). "A Practical Guide to Training Restricted Boltzmann Machines". Tech. Rep. UTML TR 2010-003. Archived from the original on 9 May 2021. Retrieved 27 June 2017.

- ESANN. 2009.

- Bernard, Etienne (2021). Introduction to machine learning. Champaign. p. 9. ISBN 978-1579550486. Retrieved 22 March 2023.

- Bernard, Etienne (2021). Introduction to machine learning. Champaign. p. 12. ISBN 978-1579550486. Retrieved 22 March 2023.

- Bernard, Etienne (2021). Introduction to Machine Learning. Wolfram Media Inc. p. 9. ISBN 978-1-579550-48-6.

- Ojha, Varun Kumar; Abraham, Ajith; Snášel, Václav (1 April 2017). "Metaheuristic design of feedforward neural networks: A review of two decades of research". Engineering Applications of Artificial Intelligence. 60: 97–116. arXiv:1705.05584. Bibcode:2017arXiv170505584O. doi:10.1016/j.engappai.2017.01.013. S2CID 27910748.

- Dominic, S.; Das, R.; Whitley, D.; Anderson, C. (July 1991). "Genetic reinforcement learning for neural networks". IJCNN-91-Seattle International Joint Conference on Neural Networks. IJCNN-91-Seattle International Joint Conference on Neural Networks. Seattle, Washington, US: IEEE. pp. 71–76. doi:10.1109/IJCNN.1991.155315. ISBN 0-7803-0164-1.

- Hoskins, J.C.; Himmelblau, D.M. (1992). "Process control via artificial neural networks and reinforcement learning". Computers & Chemical Engineering. 16 (4): 241–251. doi:10.1016/0098-1354(92)80045-B.

- Bertsekas, D.P.; Tsitsiklis, J.N. (1996). Neuro-dynamic programming. Athena Scientific. p. 512. ISBN 978-1-886529-10-6. Archived from the original on 29 June 2017. Retrieved 17 June 2017.

- Secomandi, Nicola (2000). "Comparing neuro-dynamic programming algorithms for the vehicle routing problem with stochastic demands". Computers & Operations Research. 27 (11–12): 1201–1225. CiteSeerX 10.1.1.392.4034. doi:10.1016/S0305-0548(99)00146-X.

- de Rigo, D.; Rizzoli, A. E.; Soncini-Sessa, R.; Weber, E.; Zenesi, P. (2001). "Neuro-dynamic programming for the efficient management of reservoir networks". Proceedings of MODSIM 2001, International Congress on Modelling and Simulation. MODSIM 2001, International Congress on Modelling and Simulation. Canberra, Australia: Modelling and Simulation Society of Australia and New Zealand. doi:10.5281/zenodo.7481. ISBN 0-86740-525-2. Archived from the original on 7 August 2013. Retrieved 29 July 2013.

- Damas, M.; Salmeron, M.; Diaz, A.; Ortega, J.; Prieto, A.; Olivares, G. (2000). "Genetic algorithms and neuro-dynamic programming: application to water supply networks". Proceedings of 2000 Congress on Evolutionary Computation. 2000 Congress on Evolutionary Computation. Vol. 1. La Jolla, California, US: IEEE. pp. 7–14. doi:10.1109/CEC.2000.870269. ISBN 0-7803-6375-2.

- Deng, Geng; Ferris, M.C. (2008). "Neuro-dynamic programming for fractionated radiotherapy planning". Optimization in Medicine. Springer Optimization and Its Applications. Vol. 12. pp. 47–70. CiteSeerX 10.1.1.137.8288. doi:10.1007/978-0-387-73299-2_3. ISBN 978-0-387-73298-5.

- Bozinovski, S. (1982). "A self-learning system using secondary reinforcement". In R. Trappl (ed.) Cybernetics and Systems Research: Proceedings of the Sixth European Meeting on Cybernetics and Systems Research. North Holland. pp. 397–402. ISBN 978-0-444-86488-8.

- Bozinovski, S. (2014). "Modeling mechanisms of cognition-emotion interaction in artificial neural networks, since 1981". Procedia Computer Science. pp. 255–263. Archived from the original on 23 March 2019.

- Bozinovski, Stevo; Bozinovska, Liljana (2001). "Self-learning agents: A connectionist theory of emotion based on crossbar value judgment". Cybernetics and Systems. 32 (6): 637–667. doi:10.1080/01969720118145. S2CID 8944741.

- "Artificial intelligence can 'evolve' to solve problems". Science | AAAS. 10 January 2018. Archived from the original on 9 December 2021. Retrieved 7 February 2018.

- Turchetti, Claudio (2004), Stochastic Models of Neural Networks, Frontiers in artificial intelligence and applications: Knowledge-based intelligent engineering systems, vol. 102, IOS Press, ISBN 9781586033880

- Jospin, Laurent Valentin; Laga, Hamid; Boussaid, Farid; Buntine, Wray; Bennamoun, Mohammed (2022). "Hands-On Bayesian Neural Networks—A Tutorial for Deep Learning Users". IEEE Computational Intelligence Magazine. 17 (2): 29–48. arXiv:2007.06823. doi:10.1109/mci.2022.3155327. ISSN 1556-603X. S2CID 220514248. Archived from the original on 4 February 2023. Retrieved 19 November 2022.

- de Rigo, D.; Castelletti, A.; Rizzoli, A. E.; Soncini-Sessa, R.; Weber, E. (January 2005). "A selective improvement technique for fastening Neuro-Dynamic Programming in Water Resources Network Management". In Pavel Zítek (ed.). Proceedings of the 16th IFAC World Congress – IFAC-PapersOnLine. 16th IFAC World Congress. Vol. 16. Prague, Czech Republic: IFAC. pp. 7–12. doi:10.3182/20050703-6-CZ-1902.02172. hdl:11311/255236. ISBN 978-3-902661-75-3. Archived from the original on 26 April 2012. Retrieved 30 December 2011.

- Ferreira, C. (2006). "Designing Neural Networks Using Gene Expression Programming". In A. Abraham; B. de Baets; M. Köppen; B. Nickolay (eds.). Applied Soft Computing Technologies: The Challenge of Complexity (PDF). Springer-Verlag. pp. 517–536. Archived (PDF) from the original on 19 December 2013. Retrieved 8 October 2012.

- Da, Y.; Xiurun, G. (July 2005). "An improved PSO-based ANN with simulated annealing technique". In T. Villmann (ed.). New Aspects in Neurocomputing: 11th European Symposium on Artificial Neural Networks. Vol. 63. Elsevier. pp. 527–533. doi:10.1016/j.neucom.2004.07.002. Archived from the original on 25 April 2012. Retrieved 30 December 2011.

- Wu, J.; Chen, E. (May 2009). "A Novel Nonparametric Regression Ensemble for Rainfall Forecasting Using Particle Swarm Optimization Technique Coupled with Artificial Neural Network". In Wang, H.; Shen, Y.; Huang, T.; Zeng, Z. (eds.). 6th International Symposium on Neural Networks, ISNN 2009. Lecture Notes in Computer Science. Vol. 5553. Springer. pp. 49–58. doi:10.1007/978-3-642-01513-7_6. ISBN 978-3-642-01215-0. Archived from the original on 31 December 2014. Retrieved 1 January 2012.

- Ting Qin; Zonghai Chen; Haitao Zhang; Sifu Li; Wei Xiang; Ming Li (2004). "A learning algorithm of CMAC based on RLS" (PDF). Neural Processing Letters. 19 (1): 49–61. doi:10.1023/B:NEPL.0000016847.18175.60. S2CID 6233899. Archived (PDF) from the original on 14 April 2021. Retrieved 30 January 2019.

- Ting Qin; Haitao Zhang; Zonghai Chen; Wei Xiang (2005). "Continuous CMAC-QRLS and its systolic array" (PDF). Neural Processing Letters. 22 (1): 1–16. doi:10.1007/s11063-004-2694-0. S2CID 16095286. Archived (PDF) from the original on 18 November 2018. Retrieved 30 January 2019.

- LeCun Y, Boser B, Denker JS, Henderson D, Howard RE, Hubbard W, Jackel LD (1989). "Backpropagation Applied to Handwritten Zip Code Recognition". Neural Computation. 1 (4): 541–551. doi:10.1162/neco.1989.1.4.541. S2CID 41312633.

- LeCun, Yann (2016). Slides on Deep Learning Online. Archived from the original on 23 April 2016.

- Hochreiter, Sepp; Schmidhuber, Jürgen (1 November 1997). "Long Short-Term Memory". Neural Computation. 9 (8): 1735–1780. doi:10.1162/neco.1997.9.8.1735. ISSN 0899-7667. PMID 9377276. S2CID 1915014.

- Sak, Hasim; Senior, Andrew; Beaufays, Francoise (2014). "Long Short-Term Memory recurrent neural network architectures for large scale acoustic modeling" (PDF). Archived from the original (PDF) on 24 April 2018.

- Li, Xiangang; Wu, Xihong (15 October 2014). "Constructing Long Short-Term Memory based Deep Recurrent Neural Networks for Large Vocabulary Speech Recognition". arXiv:1410.4281 [cs.CL].

- Fan, Y.; Qian, Y.; Xie, F.; Soong, F. K. (2014). "TTS synthesis with bidirectional LSTM based Recurrent Neural Networks". Proceedings of the Annual Conference of the International Speech Communication Association, Interspeech: 1964–1968. Retrieved 13 June 2017.

- Zen, Heiga; Sak, Hasim (2015). "Unidirectional Long Short-Term Memory Recurrent Neural Network with Recurrent Output Layer for Low-Latency Speech Synthesis" (PDF). Google.com. ICASSP. pp. 4470–4474. Archived (PDF) from the original on 9 May 2021. Retrieved 27 June 2017.

- Fan, Bo; Wang, Lijuan; Soong, Frank K.; Xie, Lei (2015). "Photo-Real Talking Head with Deep Bidirectional LSTM" (PDF). Proceedings of ICASSP. Archived (PDF) from the original on 1 November 2017. Retrieved 27 June 2017.

- Silver, David; Hubert, Thomas; Schrittwieser, Julian; Antonoglou, Ioannis; Lai, Matthew; Guez, Arthur; Lanctot, Marc; Sifre, Laurent; Kumaran, Dharshan; Graepel, Thore; Lillicrap, Timothy; Simonyan, Karen; Hassabis, Demis (5 December 2017). "Mastering Chess and Shogi by Self-Play with a General Reinforcement Learning Algorithm". arXiv:1712.01815 [cs.AI].

- Zoph, Barret; Le, Quoc V. (4 November 2016). "Neural Architecture Search with Reinforcement Learning". arXiv:1611.01578 [cs.LG].

- Haifeng Jin; Qingquan Song; Xia Hu (2019). "Auto-keras: An efficient neural architecture search system". Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. ACM. arXiv:1806.10282. Archived from the original on 21 August 2019. Retrieved 21 August 2019 – via autokeras.com.

- Claesen, Marc; De Moor, Bart (2015). "Hyperparameter Search in Machine Learning". arXiv:1502.02127 [cs.LG]. Bibcode:2015arXiv150202127C

- Probst, Philipp; Boulesteix, Anne‐Laure; Bischl, Bernd (26 February 2018). "Tunability: Importance of Hyperparameters of Machine Learning Algorithms". J. Mach. Learn. Res. 20: 53:1–53:32. S2CID 88515435. Retrieved 18 March 2023.

- Zou, Jinming; Han, Yi; So, Sung-Sau (2009). "Overview of Artificial Neural Networks". Artificial Neural Networks. Methods in Molecular Biology. Vol. 458 (Livingstone, David J. ed.). Totowa, NJ: Humana Press. pp. 15–23. doi:10.1007/978-1-60327-101-1_2. ISBN 978-1-60327-101-1. PMID 19065803. Retrieved 18 March 2023.

- Esch, Robin (1990). "Functional Approximation". Handbook of Applied Mathematics (Springer US ed.). Boston, MA: Springer US. pp. 928–987. doi:10.1007/978-1-4684-1423-3_17. ISBN 978-1-4684-1423-3. Retrieved 18 March 2023.

- Sarstedt, Marko; Moo, Erik (2019). "Regression Analysis". A Concise Guide to Market Research. Springer Texts in Business and Economics. Springer Berlin Heidelberg. pp. 209–256. doi:10.1007/978-3-662-56707-4_7. ISBN 978-3-662-56706-7. S2CID 240396965.