Rate of convergence

In numerical analysis, the order of convergence and the rate of convergence of a convergent sequence are quantities that represent how quickly the sequence approaches its limit. A sequence $(x_k)$ that converges to $L$ is said to have order of convergence $q \geq 1$ and rate of convergence $\mu$ if

$$\lim_{k \to \infty} \frac{|x_{k+1} - L|}{|x_k - L|^q} = \mu.$$


The rate of convergence is also called the asymptotic error constant. Note that this terminology is not standardized, and some authors use rate where this article uses order (e.g., [2]).

In practice, the rate and order of convergence provide useful insights when using iterative methods for calculating numerical approximations. If the order of convergence is higher, then typically fewer iterations are necessary to yield a useful approximation. Strictly speaking, however, the asymptotic behavior of a sequence does not give conclusive information about any finite part of the sequence.

Similar concepts are used for discretization methods. The solution of the discretized problem converges to the solution of the continuous problem as the grid size goes to zero, and the speed of convergence is one of the factors that determine the efficiency of the method. However, the terminology in this case differs from the terminology for iterative methods.

Series acceleration is a collection of techniques for improving the rate of convergence of a series discretization. Such acceleration is commonly accomplished with sequence transformations.

Convergence speed for iterative methods

Convergence definitions

Suppose that the sequence $(x_k)$ converges to the number $L$. The sequence is said to converge with order $q$ to $L$, and with a rate of convergence[3] of $\mu$, if

$$\lim_{k \to \infty} \frac{|x_{k+1} - L|}{|x_k - L|^q} = \mu \qquad \text{(Definition 1)}$$

for some positive constant $\mu \in (0, 1)$ if $q = 1$, and $\mu \in (0, \infty)$ if $q > 1$.[4][5] It is not necessary, however, that $q$ be an integer. For example, the secant method, when converging to a regular, simple root, has an order of φ ≈ 1.618.
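When the limit is known, Definition 1 can be checked numerically. The following Python sketch is an illustration, not a prescribed procedure. It assumes Newton's method for $x^2 - 2 = 0$ as the test sequence, so $L = \sqrt{2}$, and a short calculation gives $x_{k+1} - L = (x_k - L)^2 / (2x_k)$. The helper name `definition1_quotients` is hypothetical. With $q = 2$ the quotients should settle near $1/(2\sqrt{2}) \approx 0.354$. The standard `decimal` module is used so that rounding does not hide the very small errors.

```python
from decimal import Decimal, getcontext

getcontext().prec = 60  # enough digits that rounding does not swamp the tiny errors


def definition1_quotients(xs, limit, q):
    """Return |x_{k+1} - L| / |x_k - L|**q for each pair of consecutive terms."""
    return [abs(x_next - limit) / abs(x_k - limit) ** q for x_k, x_next in zip(xs, xs[1:])]


# Newton's method for x**2 - 2 = 0: x_{k+1} = (x_k + 2 / x_k) / 2, started at x_0 = 1.
L = Decimal(2).sqrt()
xs = [Decimal(1)]
for _ in range(5):
    x = xs[-1]
    xs.append((x + 2 / x) / 2)

for k, ratio in enumerate(definition1_quotients(xs, L, q=2)):
    print(k, float(ratio))  # tends to 1/(2*sqrt(2)), about 0.354
```

Passing `q=1` instead makes the quotients tend to 0, which is the superlinear signature described in the Q-convergence definitions.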

Convergence with order

- $q = 1$ is called linear convergence if $\mu \in (0, 1)$, and the sequence is said to converge Q-linearly to $L$.

- $q = 2$ is called quadratic convergence.

- $q = 3$ is called cubic convergence.

- etc.

Order estimation

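A practical method to calculate the order of convergence for a sequence is to calculate the following sequence, which converges to $q$:[6]

$$q \approx \frac{\log\left|\dfrac{x_{k+1} - x_k}{x_k - x_{k-1}}\right|}{\log\left|\dfrac{x_k - x_{k-1}}{x_{k-1} - x_{k-2}}\right|}$$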
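As an illustration of this estimate, the sketch below applies it to the secant method for $x^2 - 2 = 0$, started from $x_0 = 1$ and $x_1 = 2$. The test problem, the starting values, and the function names `secant` and `estimate_order` are assumptions made for this example. The estimate needs only the iterates, not the limit, and its later values should approach φ ≈ 1.618, the order quoted for the secant method above. High-precision `Decimal` arithmetic is used because the secant errors quickly fall below double precision.

```python
import math
from decimal import Decimal, getcontext

getcontext().prec = 200  # secant errors shrink very fast; keep enough digits


def secant(f, x0, x1, steps):
    """Return [x0, x1, x2, ...] produced by the secant method."""
    xs = [x0, x1]
    for _ in range(steps):
        a, b = xs[-2], xs[-1]
        xs.append(b - f(b) * (b - a) / (f(b) - f(a)))
    return xs


def estimate_order(xs):
    """q ~ log|(x_{k+1}-x_k)/(x_k-x_{k-1})| / log|(x_k-x_{k-1})/(x_{k-1}-x_{k-2})|."""
    estimates = []
    for k in range(2, len(xs) - 1):
        num = abs((xs[k + 1] - xs[k]) / (xs[k] - xs[k - 1]))
        den = abs((xs[k] - xs[k - 1]) / (xs[k - 1] - xs[k - 2]))
        estimates.append(float(num.ln() / den.ln()))
    return estimates


xs = secant(lambda x: x * x - 2, Decimal(1), Decimal(2), steps=10)
print(estimate_order(xs))        # later entries approach the golden ratio
print((1 + math.sqrt(5)) / 2)    # phi, for comparison
```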

Q-convergence definitions

In addition to the previously defined Q-linear convergence, a few other Q-convergence definitions exist. Given Definition 1 above, the sequence is said to converge Q-superlinearly to $L$ (i.e. faster than linearly) in all the cases where $q > 1$ and also the case $q = 1, \mu = 0$.[7] Given Definition 1, the sequence is said to converge Q-sublinearly to $L$ (i.e. slower than linearly) if $q = 1, \mu = 1$. The sequence converges logarithmically to $L$ if the sequence converges sublinearly and additionally if[8]

$$\lim_{k \to \infty} \frac{|x_{k+1} - x_k|}{|x_k - x_{k-1}|} = 1.$$

Note that unlike previous definitions, logarithmic convergence is not called "Q-logarithmic."

In the definitions above, the "Q-" stands for "quotient" because the terms are defined using the quotient between two successive terms.[9]: 619 Often, however, the "Q-" is dropped and a sequence is simply said to have linear convergence, quadratic convergence, etc.

R-convergence definition

The Q-convergence definitions have a shortcoming in that they do not include some sequences, such as the sequence below, which converge reasonably fast, but whose rate is variable. Therefore, the definition of rate of convergence is extended as follows.

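Suppose that $(x_k)$ converges to $L$. The sequence is said to converge R-linearly to $L$ if there exists a sequence $(\varepsilon_k)$ such that

$$|x_k - L| \le \varepsilon_k \quad \text{for all } k,$$

and $(\varepsilon_k)$ converges Q-linearly to zero.[3] The "R-" prefix stands for "root".[9]: 620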

Examples

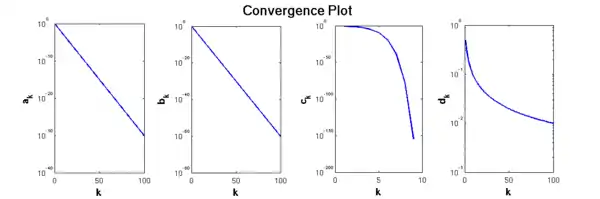

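Consider the sequence

$$(a_k) = \left\{1, \frac{1}{2}, \frac{1}{4}, \frac{1}{8}, \frac{1}{16}, \frac{1}{32}, \ldots, \frac{1}{2^k}, \ldots\right\}.$$

It can be shown that this sequence converges to $L = 0$. To determine the type of convergence, we plug the sequence into the definition of Q-linear convergence,

$$\lim_{k \to \infty} \frac{|1/2^{k+1} - 0|}{|1/2^k - 0|} = \lim_{k \to \infty} \frac{2^k}{2^{k+1}} = \frac{1}{2}.$$

Thus, we find that $(a_k)$ converges Q-linearly and has a convergence rate of $\mu = 1/2$. More generally, for any $c \neq 0$ and any $\mu$ with $0 < |\mu| < 1$, the sequence $(c\mu^k)$ converges linearly with rate $|\mu|$.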
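The limit for $a_k = 1/2^k$ can be confirmed with exact rational arithmetic. This minimal sketch uses Python's standard `fractions.Fraction`; the helper `q_quotients` and the sample values $c = 3$, $\mu = -2/5$ for the general family are chosen only for illustration.

```python
from fractions import Fraction


def q_quotients(seq, limit, q, n_terms):
    """Exact quotients |s_{k+1} - L| / |s_k - L|**q for the first n_terms terms."""
    terms = [seq(k) for k in range(n_terms)]
    return [abs(t1 - limit) / abs(t0 - limit) ** q for t0, t1 in zip(terms, terms[1:])]


a = lambda k: Fraction(1, 2**k)  # a_k = 1/2^k, limit L = 0
print(q_quotients(a, 0, 1, 8))  # seven quotients, each exactly 1/2

# The general family c * mu**k; c = 3 and mu = -2/5 are arbitrary sample values.
c, mu = Fraction(3), Fraction(-2, 5)
print(set(q_quotients(lambda k: c * mu**k, 0, 1, 8)))  # the single value |mu| = 2/5
```

Because the quotient is constant from the first term on, these sequences are the simplest possible instances of Q-linear convergence.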

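The sequence

$$(b_k) = \left\{1, 1, \frac{1}{4}, \frac{1}{4}, \frac{1}{16}, \frac{1}{16}, \ldots, \frac{1}{4^{\lfloor k/2 \rfloor}}, \ldots\right\}$$

also converges linearly to 0 with rate 1/2 under the R-convergence definition, but not under the Q-convergence definition: the quotient $|b_{k+1}|/|b_k|$ alternates between 1 and 1/4, so the limit in Definition 1 does not exist, whereas $b_k \le \varepsilon_k = 2 \cdot 2^{-k}$ for all $k$ and $(\varepsilon_k)$ converges Q-linearly to zero with rate 1/2. (Note that $\lfloor x \rfloor$ is the floor function, which gives the largest integer that is less than or equal to $x$.)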
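The difference between the two definitions for $b_k = 1/4^{\lfloor k/2 \rfloor}$ can be seen directly. In this sketch the bounding sequence $\varepsilon_k = 2 \cdot 2^{-k}$ is one valid choice, an assumption of the example rather than the only possibility. Successive quotients of $b_k$ alternate, while $\varepsilon_k$ dominates $b_k$ and has a constant quotient of 1/2.

```python
from fractions import Fraction

b = lambda k: Fraction(1, 4 ** (k // 2))  # b_k = 1/4^floor(k/2), k >= 0
eps = lambda k: Fraction(2, 2**k)         # one choice of bound: eps_k = 2 * (1/2)^k

terms = [b(k) for k in range(12)]
ratios = [terms[k + 1] / terms[k] for k in range(11)]
print(ratios)  # alternates 1, 1/4, 1, 1/4, ...: no single limit, so not Q-linear

# R-linear: b_k <= eps_k for every k checked, and eps_{k+1}/eps_k == 1/2 exactly.
assert all(b(k) <= eps(k) for k in range(200))
assert all(eps(k + 1) / eps(k) == Fraction(1, 2) for k in range(200))
```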

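The sequence

$$(c_k) = \left\{\frac{1}{2}, \frac{1}{4}, \frac{1}{16}, \frac{1}{256}, \ldots, \frac{1}{2^{2^k}}, \ldots\right\}$$

converges superlinearly. In fact, it is quadratically convergent, since $|c_{k+1}|/|c_k|^2 = 2^{2 \cdot 2^k}/2^{2^{k+1}} = 1$ for every $k$.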

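Finally, the sequence

$$(d_k) = \left\{1, \frac{1}{2}, \frac{1}{3}, \frac{1}{4}, \frac{1}{5}, \frac{1}{6}, \ldots, \frac{1}{k+1}, \ldots\right\}$$

converges sublinearly and logarithmically.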
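The same exact-arithmetic check works for $c_k = 1/2^{2^k}$ and $d_k = 1/(k+1)$. This sketch is illustrative only, and the sample indices are arbitrary. For $c_k$ the quotient with $q = 2$ is identically 1. For $d_k$ both the quotient $|d_{k+1}|/|d_k| = (k+1)/(k+2)$ and the ratio of successive differences, $k/(k+2)$, approach 1, which is the sublinear and logarithmic behavior described above.

```python
from fractions import Fraction

c = lambda k: Fraction(1, 2 ** (2**k))  # c_k = 1 / 2^(2^k)
d = lambda k: Fraction(1, k + 1)        # d_k = 1 / (k + 1)

# Quadratic: |c_{k+1}| / |c_k|^2 is exactly 1 for every k (q = 2, mu = 1).
print([c(k + 1) / c(k) ** 2 for k in range(6)])

# Sublinear: |d_{k+1}| / |d_k| = (k + 1)/(k + 2) -> 1, i.e. q = 1 with mu = 1.
# Logarithmic: the ratio of successive differences, k/(k + 2), also tends to 1.
for k in (10, 100, 1000):
    q_ratio = d(k + 1) / d(k)
    diff_ratio = abs(d(k + 1) - d(k)) / abs(d(k) - d(k - 1))
    print(k, float(q_ratio), float(diff_ratio))
```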

Convergence speed for discretization methods

A similar situation exists for discretization methods designed to approximate a function $y = f(x)$, which might be an integral being approximated by numerical quadrature, or the solution of an ordinary differential equation (see example below). The discretization method generates a sequence $\{y_0, y_1, y_2, y_3, \ldots\}$, where each successive $y_j$ is a function of $y_{j-1}, y_{j-2}, \ldots$ along with the grid spacing $h$ between successive values of the independent variable $x$. The important parameter here for the convergence speed to $y = f(x)$ is the grid spacing $h$, inversely proportional to the number of grid points, i.e. the number of points in the sequence required to reach a given value of $x$.

In this case, the sequence $(y_n)$ is said to converge to the sequence $(f(x_n))$ with order q if there exists a constant C such that

$$|y_n - f(x_n)| < C h^q \quad \text{for all } n.$$

This is written as $|y_n - f(x_n)| = O(h^q)$ using big O notation.

This is the relevant definition when discussing methods for numerical quadrature or the solution of ordinary differential equations (ODEs).

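A practical method to estimate the order of convergence for a discretization method is to pick step sizes $h_{\text{new}}$ and $h_{\text{old}}$ and calculate the resulting errors $e_{\text{new}}$ and $e_{\text{old}}$. The order of convergence is then approximated by the following formula:

$$q \approx \frac{\log(e_{\text{new}}/e_{\text{old}})}{\log(h_{\text{new}}/h_{\text{old}})},$$

which comes from writing the truncation error, at the old and new grid spacings, as

$$e = |y_n - f(x_n)| = O(h^q),$$

that is, $e_{\text{old}} \approx C h_{\text{old}}^q$ and $e_{\text{new}} \approx C h_{\text{new}}^q$ with the same constant $C$, so that $e_{\text{new}}/e_{\text{old}} \approx (h_{\text{new}}/h_{\text{old}})^q$.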
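The step-size estimate can be tried on a simple quadrature problem. The sketch below assumes the composite trapezoidal rule applied to $\int_0^1 e^x\,dx = e - 1$, a test case that is not part of the text above. For smooth integrands this rule has a global error of order $h^2$, so the estimate should come out close to 2. The function names are hypothetical.

```python
import math


def trapezoid(f, a, b, n):
    """Composite trapezoidal rule with n subintervals of width h = (b - a) / n."""
    h = (b - a) / n
    interior = sum(f(a + i * h) for i in range(1, n))
    return h * (0.5 * f(a) + interior + 0.5 * f(b))


def estimate_order_from_steps(e_old, e_new, h_old, h_new):
    """q ~ log(e_new / e_old) / log(h_new / h_old)."""
    return math.log(e_new / e_old) / math.log(h_new / h_old)


exact = math.e - 1.0  # integral of exp(x) over [0, 1]
h_old, h_new = 1 / 10, 1 / 20
e_old = abs(trapezoid(math.exp, 0.0, 1.0, 10) - exact)
e_new = abs(trapezoid(math.exp, 0.0, 1.0, 20) - exact)
print(estimate_order_from_steps(e_old, e_new, h_old, h_new))  # close to 2
```

Halving $h$ is a common choice because the denominator is then simply $\log(1/2)$; any two distinct step sizes in the asymptotic range work.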

The error is, more specifically, a global truncation error (GTE), in that it represents a sum of errors accumulated over all iterations, as opposed to a local truncation error (LTE) over just one iteration.

Example of discretization methods

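Consider the ordinary differential equation

$$\frac{dy}{dx} = -\kappa y$$

with initial condition $y(0) = y_0$. We can solve this equation using the Forward Euler scheme for numerical discretization:

$$\frac{y_{n+1} - y_n}{h} = -\kappa y_n,$$

which generates the sequence

$$y_{n+1} = y_n (1 - h\kappa).$$

In terms of $y(x) = y(nh) = y_n$, this sequence is as follows, from the binomial theorem:

$$y_n = y_0 (1 - h\kappa)^n = y_0 \left(1 - nh\kappa + \frac{n(n-1)}{2} h^2 \kappa^2 - \cdots\right).$$

The exact solution to this ODE is $y = f(x) = y_0 \exp(-\kappa x)$, corresponding to the following Taylor expansion in $h\kappa$ for $h\kappa \ll 1$:

$$f(x_n) = f(nh) = y_0 \exp(-\kappa n h) = y_0 \left[\exp(-\kappa h)\right]^n = y_0 \left(1 - h\kappa + \frac{h^2 \kappa^2}{2} - \cdots\right)^n = y_0 \left(1 - nh\kappa + \frac{n^2 h^2 \kappa^2}{2} - \cdots\right).$$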

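In this case, the truncation error is

$$\epsilon = y_n - f(x_n) = -\frac{y_0 n h^2 \kappa^2}{2} + \cdots.$$

At a fixed point $x_n = nh$, expanding $n \log(1 - h\kappa) = -\kappa x_n - x_n h \kappa^2/2 + O(h^2)$ gives $\epsilon = -\tfrac{1}{2} y_0 \kappa^2 x_n e^{-\kappa x_n} h + O(h^2)$, so $\epsilon$ converges to 0 with order of convergence $q = 1$.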
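The forward Euler result can also be checked numerically. This sketch assumes $\kappa = 1$ and $y_0 = 1$ and evaluates the error at $x = 1$ for several step sizes. It compares the error with the first-order term $-\tfrac{1}{2} y_0 \kappa^2 x e^{-\kappa x} h$, which follows from expanding $n \log(1 - h\kappa)$ at fixed $x = nh$, and it estimates $q$ from successive step sizes with $q \approx \log(e_{\text{new}}/e_{\text{old}})/\log(h_{\text{new}}/h_{\text{old}})$. The observed order should approach 1. The parameter values and the function name are illustrative assumptions.

```python
import math


def forward_euler_decay(kappa, y0, x_end, n):
    """Apply y_{n+1} = y_n * (1 - h * kappa) for n steps of size h = x_end / n."""
    h = x_end / n
    y = y0
    for _ in range(n):
        y *= 1.0 - h * kappa
    return y


kappa, y0, x_end = 1.0, 1.0, 1.0  # illustrative parameter values
exact = y0 * math.exp(-kappa * x_end)

results = []  # (h, error, first-order prediction) for each step size
for n in (10, 20, 40, 80):
    h = x_end / n
    err = forward_euler_decay(kappa, y0, x_end, n) - exact
    predicted = -0.5 * kappa**2 * x_end * exact * h  # -(y0 kappa^2 x e^{-kappa x} / 2) h
    results.append((h, err, predicted))

qs = []
for (h_old, e_old, _), (h_new, e_new, _) in zip(results, results[1:]):
    qs.append(math.log(e_new / e_old) / math.log(h_new / h_old))

for (h, err, predicted), q in zip(results[1:], qs):
    print(f"h = {h:.4f}  error = {err:.3e}  predicted = {predicted:.3e}  q = {q:.3f}")
```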

Examples (continued)

The sequence $(d_k)$ with $d_k = 1/(k+1)$ was introduced above. Taking the grid spacing to be $h = 1/k$, this sequence converges with order 1 according to the convention for discretization methods.

The sequence $(a_k)$ with $a_k = 2^{-k}$, which was also introduced above, converges with order q for every number q. It is said to converge exponentially using the convention for discretization methods. However, it only converges linearly (that is, with order 1) using the convention for iterative methods.
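Under the discretization convention, order $q$ means that the error divided by $h^q$ stays bounded. Purely as an illustration, the sketch below takes $h = 1/k$ for the $k$-th term (an assumption, since these sequences do not come from an actual grid) and evaluates $a_k/h^q = k^q 2^{-k}$ and $d_k/h^q = k^q/(k+1)$. The helper `scaled_error` is hypothetical.

```python
from fractions import Fraction


def scaled_error(term, k, q):
    """Return term(k) / h**q with the assumed spacing h = 1/k, i.e. term(k) * k**q."""
    return term(k) * k**q


a = lambda k: Fraction(1, 2**k)   # a_k = 2^(-k)
d = lambda k: Fraction(1, k + 1)  # d_k = 1/(k + 1)

# a_k / h^q eventually tends to 0 for every fixed q (exponential convergence).
for q in (1, 5, 10):
    print(q, [float(scaled_error(a, k, q)) for k in (10, 100, 200)])

# d_k / h = k/(k + 1) stays below 1 (order 1), but d_k / h^2 = k^2/(k + 1) is unbounded.
print([float(scaled_error(d, k, 1)) for k in (10, 100, 1000)])
print([float(scaled_error(d, k, 2)) for k in (10, 100, 1000)])
```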

Recurrent sequences and fixed points

The case of recurrent sequences $x_{n+1} := f(x_n)$, which occurs in dynamical systems and in the context of various fixed-point theorems, is of particular interest. Assuming that the relevant derivatives of f are continuous, one can show that for a fixed point $f(p) = p$ such that $|f'(p)| < 1$, one has at least linear convergence for any starting value $x_0$ sufficiently close to p (with rate $|f'(p)|$ when $f'(p) \neq 0$). If $f'(p) = 0$, then one has at least quadratic convergence, and so on. If $|f'(p)| > 1$, then one has a repulsive fixed point and no starting value will produce a sequence converging to p (unless one directly jumps to the point p itself).
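The fixed-point criterion can be observed on a concrete map. The sketch assumes $f(x) = \cos x$, chosen only as an example, whose fixed point $p \approx 0.739$ satisfies $|f'(p)| = \sin p \approx 0.674 < 1$. It first locates $p$ with a few Newton steps on $\cos x - x$ and then checks that the error quotients of the iteration $x_{n+1} = \cos x_n$ approach $|f'(p)|$, i.e. linear convergence with rate $|f'(p)|$.

```python
import math

# Illustrative map: f(x) = cos(x), with derivative f'(x) = -sin(x).
f = math.cos
df = lambda x: -math.sin(x)

# Locate the fixed point p = cos(p) precisely with Newton's method on g(x) = cos(x) - x.
p = 0.7
for _ in range(50):
    p -= (math.cos(p) - p) / (-math.sin(p) - 1.0)

# Run the fixed-point iteration x_{n+1} = f(x_n) and record |x_n - p|.
x = 1.0
errors = []
for _ in range(30):
    errors.append(abs(x - p))
    x = f(x)

ratios = [e_next / e for e, e_next in zip(errors, errors[1:])]
print(ratios[-1], abs(df(p)))  # both close to sin(p), roughly 0.674
```

Because $f'(p) < 0$ here, the iterates alternate around $p$; taking absolute values makes the quotient match $|f'(p)|$.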

Acceleration of convergence

Many methods exist to increase the rate of convergence of a given sequence, i.e. to transform a given sequence into one converging faster to the same limit. Such techniques are in general known as "series acceleration". The goal of the transformation is to reduce the computational cost of the calculation. One example of series acceleration is Aitken's delta-squared process. These methods in general (and in particular Aitken's method) do not increase the order of convergence, and are useful only if initially the convergence is not faster than linear: if $(x_n)$ converges linearly to $L$, one gets a sequence $(a_n)$ that still converges linearly (except for pathologically designed special cases), but faster in the sense that $\lim_{n \to \infty} (a_n - L)/(x_n - L) = 0$. On the other hand, if the convergence is already of order ≥ 2, Aitken's method will bring no improvement.
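Aitken's delta-squared process replaces $x_n$ by $A_n = x_n - (x_{n+1} - x_n)^2 / (x_{n+2} - 2x_{n+1} + x_n)$. The sketch below is an illustration rather than a robust implementation (it does not guard against a zero denominator). It applies the process to the linearly convergent iteration $x_{n+1} = \cos x_n$; the test sequence and the number of terms are assumptions of the example.

```python
import math


def aitken(xs):
    """Aitken's delta-squared: A_n = x_n - (x_{n+1} - x_n)^2 / (x_{n+2} - 2 x_{n+1} + x_n)."""
    out = []
    for x0, x1, x2 in zip(xs, xs[1:], xs[2:]):
        denom = x2 - 2.0 * x1 + x0
        out.append(x0 - (x1 - x0) ** 2 / denom)
    return out


# A linearly convergent sequence: fixed-point iteration x_{n+1} = cos(x_n).
xs = [1.0]
for _ in range(16):
    xs.append(math.cos(xs[-1]))

# Reference value of the fixed point p = cos(p), from many more iterations.
p = 1.0
for _ in range(2000):
    p = math.cos(p)

accelerated = aitken(xs)
for n in (0, 5, 10, 14):
    print(n, abs(xs[n] - p), abs(accelerated[n] - p))
```

In this example the accelerated errors shrink roughly like the square of the original errors, so $(A_n)$ still converges linearly, only with a smaller rate, while $|A_n - p|/|x_n - p| \to 0$.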

References

- Wang, Ruye (2015-02-12). "Order and rate of convergence". hmc.edu. Retrieved 2020-07-31.

- Senning, Jonathan R. "Computing and Estimating the Rate of Convergence" (PDF). gordon.edu. Retrieved 2020-08-07.

- Bockelman, Brian (2005). "Rates of Convergence". math.unl.edu. Retrieved 2020-07-31.

- Hundley, Douglas. "Rate of Convergence" (PDF). Whitman College. Retrieved 2020-12-13.

- Porta, F. A. (1989). "On Q-Order and R-Order of Convergence" (PDF). Journal of Optimization Theory and Applications. 63 (3): 415–431. doi:10.1007/BF00939805. S2CID 116192710. Retrieved 2020-07-31.

- Senning, Jonathan R. "Computing and Estimating the Rate of Convergence" (PDF). gordon.edu. Retrieved 2020-08-07.

- Arnold, Mark. "Order of Convergence" (PDF). University of Arkansas. Retrieved 2022-12-13.

- Van Tuyl, Andrew H. (1994). "Acceleration of convergence of a family of logarithmically convergent sequences" (PDF). Mathematics of Computation. 63 (207): 229–246. doi:10.2307/2153571. JSTOR 2153571. Retrieved 2020-08-02.

- Nocedal, Jorge; Wright, Stephen J. (2006). Numerical Optimization (2nd ed.). Berlin, New York: Springer-Verlag. ISBN 978-0-387-30303-1.

Literature

The simple definition is used in

- Michelle Schatzman (2002), Numerical analysis: a mathematical introduction, Clarendon Press, Oxford. ISBN 0-19-850279-6.

The extended definition is used in

- Walter Gautschi (1997), Numerical analysis: an introduction, Birkhäuser, Boston. ISBN 0-8176-3895-4.

- Endre Süli and David Mayers (2003), An introduction to numerical analysis, Cambridge University Press. ISBN 0-521-00794-1.

The Big O definition is used in

- Richard L. Burden and J. Douglas Faires (2001), Numerical Analysis (7th ed.), Brooks/Cole. ISBN 0-534-38216-9.

The terms Q-linear and R-linear, and the Big O definition when using Taylor series, are used in

- Nocedal, Jorge; Wright, Stephen J. (2006). Numerical Optimization (2nd ed.). Berlin, New York: Springer-Verlag. pp. 619–620. ISBN 978-0-387-30303-1.