Layer (deep learning)

A layer in a deep learning model is a structure or network topology in the model's architecture that takes information from the previous layer and passes it to the next layer.

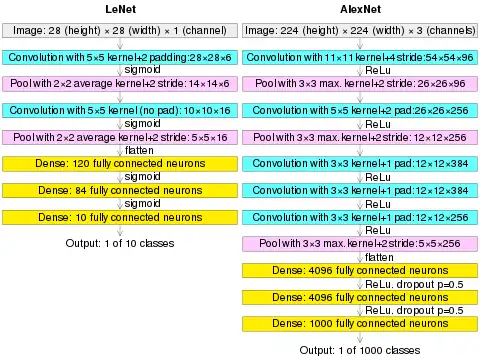

(The AlexNet input image size should be 227×227×3 rather than 224×224×3 for the arithmetic to work out. The original paper gives 224×224×3, but Andrej Karpathy, the former head of computer vision at Tesla, said it should be 227×227×3 and noted that Alex Krizhevsky did not explain the 224×224×3 figure. The next convolution, 11×11 with stride 4, then yields 55×55×96 instead of 54×54×96. The output width is calculated as: [(input width 227 - kernel width 11) / stride 4] + 1 = [(227 - 11) / 4] + 1 = 55. Because the input and the kernel are both square, the output height equals the output width, so each output feature map is 55×55.)
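The arithmetic in the note above can be checked with a short sketch. The function name `conv_output_size` and the `padding` parameter are illustrative and not part of the original note; floor division is assumed for cases where the stride does not divide evenly, which is the common convention in deep learning frameworks.

```python
def conv_output_size(input_size: int, kernel_size: int, stride: int, padding: int = 0) -> int:
    """Output size of a convolution along one spatial dimension.

    Assumes floor division when the stride does not divide evenly.
    """
    return (input_size + 2 * padding - kernel_size) // stride + 1


# 227-pixel input, 11x11 kernel, stride 4: the stride divides evenly.
print(conv_output_size(227, 11, 4))  # 55

# 224-pixel input: (224 - 11) / 4 is not an integer, so the result is floored.
print(conv_output_size(224, 11, 4))  # 54
```

With a 224-pixel input the division leaves a remainder, which is why the 227-pixel figure makes the layer sizes consistent.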

Layer Types

The first type of layer is the Dense layer, also called the fully-connected layer,[1][2][3][4] which is used for abstract representations of input data. In this layer, each neuron connects to every neuron in the preceding layer. In multilayer perceptron networks, these layers are stacked together.
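As a minimal sketch of what "connects to every neuron in the preceding layer" means in practice, the example below multiplies an input vector by a weight matrix and adds a bias vector. The function name `dense_forward` and the sample numbers are hypothetical, and the activation function that normally follows is omitted for brevity.

```python
def dense_forward(x, weights, bias):
    """Fully-connected layer: output[j] = sum_i weights[j][i] * x[i] + bias[j].

    Each row of `weights` holds one output neuron's connections to every input.
    """
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


x = [1.0, 2.0, 3.0]            # three inputs from the preceding layer
weights = [[0.5, 0.0, -1.0],   # neuron 0: one weight per input
           [1.0, 1.0, 1.0]]    # neuron 1: one weight per input
bias = [0.5, -1.0]

print(dense_forward(x, weights, bias))  # [-2.0, 5.0]
```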

The Convolutional layer[5] is typically used for image analysis tasks. In this layer, the network detects edges, textures, and patterns. The outputs from this layer are then fed into a fully-connected layer for further processing. See also: CNN model.
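To illustrate how a convolutional layer can respond to an edge, the sketch below slides a small kernel over a toy image. The function name `conv2d`, the image, and the hand-picked kernel are assumptions for illustration; in a trained network the kernel values are learned. As in most deep learning frameworks, the operation shown is technically cross-correlation (the kernel is not flipped), with no padding and a stride of 1.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Dark left half, bright right half: a vertical edge in the middle.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
kernel = [[-1, 1]]  # difference between horizontally adjacent pixels

print(conv2d(image, kernel))  # [[0, 1, 0], [0, 1, 0], [0, 1, 0]]
```

The output is non-zero only in the column where the brightness changes, which is the sense in which the layer "detects" the edge.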

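The Pooling layer[6][7] is used to reduce the size of its input data, typically by summarizing each small region of the input with a single value such as its maximum or average.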
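One common way to reduce the size of the input is max pooling, sketched below under the assumption of a 2×2 window with stride 2. The article does not specify a particular pooling variant, and the function name `max_pool2d` is illustrative.

```python
def max_pool2d(feature_map, size=2, stride=2):
    """Keep the maximum of each size x size window, moving `stride` cells at a time."""
    out_h = (len(feature_map) - size) // stride + 1
    out_w = (len(feature_map[0]) - size) // stride + 1
    return [
        [
            max(
                feature_map[r * stride + i][c * stride + j]
                for i in range(size)
                for j in range(size)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


feature_map = [
    [1, 3, 2, 4],
    [5, 6, 1, 2],
    [7, 2, 9, 1],
    [3, 4, 5, 6],
]

# A 4x4 input becomes a 2x2 output.
print(max_pool2d(feature_map))  # [[6, 4], [7, 9]]
```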

The Recurrent layer is used for text processing with a memory function. As with the Convolutional layer, the output of a recurrent layer is usually fed into a fully-connected layer for further processing. See also: RNN model.[8][9][10]
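The "memory function" can be sketched as a hidden state that is carried from one step of the sequence to the next. The example below assumes a simple (Elman-style) recurrent layer with a tanh activation; the names `rnn_step` and `run_rnn` and the weights are hypothetical, and practical models often use gated variants instead.

```python
import math


def rnn_step(x, h_prev, w_x, w_h, bias):
    """One recurrent step: h = tanh(w_x @ x + w_h @ h_prev + bias)."""
    h = []
    for row_x, row_h, b_j in zip(w_x, w_h, bias):
        pre = sum(w * xi for w, xi in zip(row_x, x))
        pre += sum(w * hi for w, hi in zip(row_h, h_prev))
        h.append(math.tanh(pre + b_j))
    return h


def run_rnn(sequence, w_x, w_h, bias):
    """Process a sequence step by step, carrying the hidden state forward."""
    h = [0.0] * len(bias)  # the memory starts empty
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, bias)
    return h


w_x, w_h, bias = [[1.0]], [[1.0]], [0.0]

# The same inputs in a different order leave a different final state,
# because each step depends on what came before it.
first = run_rnn([[1.0], [0.0]], w_x, w_h, bias)
second = run_rnn([[0.0], [1.0]], w_x, w_h, bias)
print(first != second)  # True
```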

The Normalization layer adjusts the output data from previous layers so that it follows a consistent distribution, typically with zero mean and unit variance. This improves scalability and model training.
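A minimal sketch of the adjustment, assuming the common approach of rescaling values to zero mean and unit variance. The function name `normalize` and the `eps` constant are illustrative; real normalization layers differ in which values they average over (a batch, or the features of one example) and usually add a learned scale and shift, which are omitted here.

```python
import math


def normalize(values, eps=1e-5):
    """Rescale `values` to approximately zero mean and unit variance.

    `eps` guards against division by zero when all values are equal.
    """
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(variance + eps) for v in values]


activations = [2.0, 4.0, 6.0, 8.0]
normalized = normalize(activations)

new_mean = sum(normalized) / len(normalized)
new_variance = sum((v - new_mean) ** 2 for v in normalized) / len(normalized)
print(round(new_mean, 6), round(new_variance, 3))
```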

Differences with layers of the neocortex

There is an intrinsic difference between deep learning layering and neocortical layering: deep learning layering depends on network topology, while neocortical layering depends on intra-layer homogeneity.

References

- "CS231n Convolutional Neural Networks for Visual Recognition". CS231n Convolutional Neural Networks for Visual Recognition. 10 May 2016. Retrieved 27 Apr 2021.

Fully-connected layer: Neurons in a fully connected layer have full connections to all activations in the previous layer, as seen in regular Neural Networks.

- "Convolutional Neural Network. In this article, we will see what are… - by Arc". Medium. 26 Dec 2018. Retrieved 27 Apr 2021.

Fully Connected Layer is simply, feed forward neural networks.

- "Fully connected layer". MATLAB. 1 Mar 2021. Retrieved 27 Apr 2021.

A fully connected layer multiplies the input by a weight matrix and then adds a bias vector.

- Géron, Aurélien (2019). Hands-on machine learning with Scikit-Learn, Keras, and TensorFlow : concepts, tools, and techniques to build intelligent systems. Sebastopol, CA: O'Reilly Media, Inc. pp. 322–323. ISBN 978-1-4920-3264-9. OCLC 1124925244.

- Habibi Aghdam, Hamed; Heravi, Elnaz Jahani (2017-05-30). Guide to convolutional neural networks : a practical application to traffic-sign detection and classification. Cham, Switzerland. ISBN 9783319575490. OCLC 987790957.

- Yamaguchi, Kouichi; Sakamoto, Kenji; Akabane, Toshio; Fujimoto, Yoshiji (November 1990). A Neural Network for Speaker-Independent Isolated Word Recognition. First International Conference on Spoken Language Processing (ICSLP 90). Kobe, Japan.

- Ciresan, Dan; Meier, Ueli; Schmidhuber, Jürgen (June 2012). "Multi-column deep neural networks for image classification". 2012 IEEE Conference on Computer Vision and Pattern Recognition. New York, NY: Institute of Electrical and Electronics Engineers (IEEE). pp. 3642–3649. arXiv:1202.2745. CiteSeerX 10.1.1.300.3283. doi:10.1109/CVPR.2012.6248110. ISBN 978-1-4673-1226-4. OCLC 812295155. S2CID 2161592.

- Dupond, Samuel (2019). "A thorough review on the current advance of neural network structures". Annual Reviews in Control. 14: 200–230.

- Abiodun, Oludare Isaac; Jantan, Aman; Omolara, Abiodun Esther; Dada, Kemi Victoria; Mohamed, Nachaat Abdelatif; Arshad, Humaira (2018-11-01). "State-of-the-art in artificial neural network applications: A survey". Heliyon. 4 (11): e00938. Bibcode:2018Heliy...400938A. doi:10.1016/j.heliyon.2018.e00938. ISSN 2405-8440. PMC 6260436. PMID 30519653.

- Tealab, Ahmed (2018-12-01). "Time series forecasting using artificial neural networks methodologies: A systematic review". Future Computing and Informatics Journal. 3 (2): 334–340. doi:10.1016/j.fcij.2018.10.003. ISSN 2314-7288.