Matchbox Educable Noughts and Crosses Engine

The Matchbox Educable Noughts and Crosses Engine (sometimes called the Machine Educable Noughts and Crosses Engine or MENACE) was a mechanical computer made from 304 matchboxes designed and built by artificial intelligence researcher Donald Michie in 1961. It was designed to play human opponents in games of noughts and crosses (tic-tac-toe) by returning a move for any given state of play and to refine its strategy through reinforcement learning.

Michie did not have a computer readily available, so he worked around this restriction by building it out of matchboxes. The matchboxes used by Michie each represented a single possible layout of a noughts and crosses grid. When the computer first played, it would randomly choose moves based on the current layout. As it played more games, through a reinforcement loop, it disqualified strategies that led to losing games, and supplemented strategies that led to winning games. Michie held a tournament against MENACE in 1961, wherein he experimented with different openings.

Following MENACE's maiden tournament against Michie, it demonstrated successful artificial intelligence in its strategy. Michie's essays on MENACE's weight initialisation and the BOXES algorithm used by MENACE became popular in the field of computer science research. Michie was honoured for his contribution to machine learning research, and was twice commissioned to program a MENACE simulation on an actual computer.

Origin

Donald Michie (1923–2007) had been on the team decrypting the German Tunny Code during World War II.[1] Fifteen years later, he wanted to further display his mathematical and computational prowess with an early convolutional neural network. Since computer equipment was not obtainable for such uses,[2] and Michie did not have a computer readily available,[2] he decided to display and demonstrate artificial intelligence in a more esoteric format and constructed a functional mechanical computer out of matchboxes and beads.[3][4]

MENACE was constructed as the result of a bet with a computer science colleague who postulated that such a machine was impossible.[5] Michie undertook the task of collecting and defining each matchbox as a "fun project", later turned into a demonstration tool.[6] Michie completed his essay on MENACE in 1963,[4] "Experiments on the mechanization of game-learning", as well as his essay on the BOXES Algorithm, written with R. A. Chambers[6] and had built up an AI research unit in Hope Park Square, Edinburgh, Scotland.[7]

MENACE learned by playing increasing matches of noughts and crosses. Each time, it would eliminate a losing strategy by the human player confiscating the beads that corresponded to each move.[4] It reinforced winning strategies by making the moves more likely, by supplying extra beads.[8] This was one of the earliest versions of the Reinforcement Loop, the schematic algorithm of looping the algorithm, dropping unsuccessful strategies until only the winning ones remain.[4] This model starts as completely random, and gradually learns.[9]

Composition

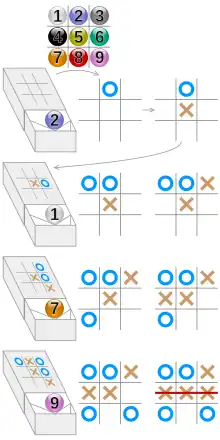

MENACE was made from 304 matchboxes glued together in an arrangement similar to a chest of drawers.[10] Each box had a code number, which was keyed into a chart. This chart had drawings of tic-tac-toe game grids with various configurations of X's, O's and empty squares,[4] corresponding to all possible permutations a game could go through as it progressed.[11] After removing duplicate arrangements (ones that were simply rotations or mirror images of other configurations), MENACE used 304 permutations in its chart and thus that many matchboxes.[12]

Each individual matchbox tray contained a collection of coloured beads.[13] Each colour represented a move on a square on the game grid, and so matchboxes with arrangements where positions on the grid were already taken would not have beads for that position. Additionally, at the front of the tray were two extra pieces of card in a "V" shape,[10] the point of the "V" pointing at the front of the matchbox.[11] Michie and his artificial intelligence team called MENACE's algorithm "Boxes",[7] after the apparatus used for the machine. The first stage "Boxes" operated in five phases, each setting a definition and a precedent for the rules of the algorithm in relation to the game.[14]

Operation

MENACE played first, as O, since all matchboxes represented permutations only relevant to the "X" player.[12][17] To retrieve MENACE's choice of move, the opponent or operator located the matchbox that matched the current game state, or a rotation or mirror image of it. For example, at the start of a game, this would be the matchbox for an empty grid. The tray would be removed and lightly shaken so as to move the beads around.[4] Then, the bead that had rolled into the point of the "V" shape at the front of the tray was the move MENACE had chosen to make.[4] Its colour was then used as the position to play on, and, after accounting for any rotations or flips needed based on the chosen matchbox configuration's relation to the current grid, the O would be placed on that square. Then the player performed their move, the new state was located, a new move selected, and so on, until the game was finished.[12]

When the game had finished, the human player observed the game's outcome. As a game was played, each matchbox that was used for MENACE's turn had its tray returned to it ajar, and the bead used kept aside, so that MENACE's choice of moves and the game states they belonged to were recorded. Michie described his reinforcement system with "reward" and "punishment". Once the game was finished, if MENACE had won, it would then receive a "reward" for its victory. The removed beads showed the sequence of the winning moves.[17] These were returned to their respective trays, easily identifiable since they were slightly open, as well as three bonus beads of the same colour.[11] In this way, in future games MENACE would become more likely to repeat those winning moves, reinforcing winning strategies. If it lost, the removed beads were not returned, "punishing" MENACE, and meaning that in future it would be less likely, and eventually incapable if that colour of bead became absent, to repeat the moves that cause a loss.[3][8] If the game was a draw, one additional bead was added to each box.[11]

Results in practice

Optimal strategy

Noughts and crosses has a well-known optimal strategy.[18] A player must place their symbol in a way that blocks the other player from achieving any rows while simultaneously making a row themself. However, if both players use this strategy, the game always ends in a draw.[18] If the human player is familiar with the optimal strategy, and MENACE can quickly learn it, then the games will eventually only end in draws. The likelihood of the computer winning increases quickly when the computer plays against a random-playing opponent.[3]

When playing against a player using optimal strategy, the odds of a draw grow to 100%. In Donald Michie's official tournament against MENACE in 1961[4] he used optimal strategy, and he and the computer began to draw consistently after twenty games. Michie's tournament[19] had the following milestones: Michie began by consistently opening with "Variant 0", the middle square. At 15 games, MENACE abandoned all non-corner openings. At just over 20, Michie switched to consistently using "Variant 1", the bottom-right square. At 60, he returned to Variant 0. As he neared 80 games, he moved to "Variant 2", the top-middle. At 110, he switched to "Variant 3", the top right. At 135, he switched to "Variant 4", middle-right. At 190, he returned to Variant 1, and at 210, he returned to Variant 0.

The trend in changes of beads in the "2" boxes runs:[19]

| Variant | Match number | Bead change in "2" box |

|---|---|---|

| Variant 0 | 0 | 0 |

| Variant 1 | 20 | -5 |

| Variant 0 | 60 | 5 |

| Variant 2 | 70 | 10 |

| Variant 3 | 110 | 20 |

| Variant 4 | 135 | 25 |

| Variant 1 | 190 | 100 |

| Variant 0 | 210 | 120 |

Correlation

Depending on the strategy employed by the human player, MENACE produces a different trend on scatter graphs of wins.[4] Using a random turn from the human player results in an almost-perfect positive trend. Playing the optimal strategy returns a slightly slower increase.[3] The reinforcement does not create a perfect standard of wins; the algorithm will draw random uncertain conclusions each time. After the j-th round, the correlation of near-perfect play runs:

Where Vi is the outcome (+1 is win, 0 is draw and -1 is loss) and D is the decay factor (average of past values of wins and losses). Below, Mn is the multiplier for the n-th round of the game.[4]

| Outcome | Reinforcement |

|---|---|

| Won | |

| Draw | |

| Lost |

Legacy

Donald Michie's MENACE proved that a computer could learn from failure and success to become good at a task.[17] It used what would become core principles within the field of machine learning before they had been properly theorised. For example, the combination of how MENACE starts with equal numbers of types of beads in each matchbox, and how these are then selected at random, creates a learning behaviour similar to weight initialisation in modern artificial neural networks.[20] In 1968, Donald Michie and R.A Chambers made another BOXES-based algorithm called GLEE (Game Learning Expectimaxing Engine) which had to learn how to balance a pole on a cart.[21]

After the resounding reception of MENACE, Michie was invited to the US Office of Naval Research, where he was commissioned to build a BOXES-running program for an IBM Computer for use at Stanford University.[22] Michie created a simulation program of MENACE on a Pegasus 2 computer with the aid of D. Martin.[4] There have been multiple recreations of MENACE in more recent years, both in its original physical form and as a computer program.[12] Its algorithm was later converged into Christopher Watkin's Q-Learning algorithm.[23] Although not as a functional computer, in examples of demonstration, MENACE has been used as a teaching aid for various neural network classes,[24][25][26] including a public demonstration from University College London Researcher Matthew Scroggs.[27][28] A copy of MENACE built by Scroggs was featured in the 2019 Royal Institution Christmas Lectures,[29][30] and in a 2023 episode of QI XL.[31]

See also

References

- Boden, Margaret (15 August 2007). "Donald Michie (1923–2007)". Nature. 448 (7155): 765. doi:10.1038/448765a. ISSN 1476-4687. PMID 17700692. S2CID 5239830.

- Wright, Matt (31 March 2020). "Donald Michie: The AI pioneer who tested his computer program with a matchbox and some beads". Scroll.in. Archived from the original on 20 October 2020. Retrieved 18 October 2020.

- Child, Oliver (13 March 2016). "Menace: the Machine Educable Noughts And Crosses Engine". Chalkdust. Archived from the original on 12 May 2020. Retrieved 17 May 2020.

- Michie, Donald. "Experiments on the mechanization of Game Learning Part 1. Characterization of the model and its parameters" (PDF). Archived (PDF) from the original on 21 November 2019. Retrieved 1 June 2020.

- "Daily Telegraph obituary for Donald Michie". The Daily Telegraph. 9 July 2007. Archived from the original on 11 June 2020. Retrieved 25 May 2021.

- Donald, Michie (1968). BOXES: An experiment in adaptive control. University of Edinburgh. p. 137. CiteSeerX 10.1.1.474.2430. Archived from the original on 26 June 2020. Retrieved 31 July 2020.

- Muggleton, Stephen (10 July 2007). "Obituary for Donald Michie, an article in The Guardian from 2007". The Guardian. Archived from the original on 1 October 2020. Retrieved 22 May 2021.

- Hardingham, Samantha; Frazer, John; Jones, Emma Letizia (2012). "John Frazer in conversation with Samantha Hardingham". AA Files (64): 69–77. ISSN 0261-6823. JSTOR 41762307.

- Wylie, Caspar (5 October 2018). "How 300 Matchboxes Learned to Play Tic-Tac-Toe Using MENACE". Open Data Science. Archived from the original on 15 May 2021. Retrieved 15 May 2021.

- The Science Book, Second Edition, Dorling Kindersley Ltd., 2015, pg. 288

- Gardner, Martin (1962). "Mathematical Games". Scientific American. 206 (3): 138–154. Bibcode:1962SciAm.206c.138G. doi:10.1038/scientificamerican0362-138. JSTOR 24937263.

- "Matchbox Educable Noughts And Crosses Engine In Empirical Modelling" (PDF). University of Warwick. Retrieved 22 May 2021.

- De Raedt, Luc. "The Machine Learning Revolution in AI". Archived from the original on 12 June 2020.

- Russel, David (2012). Extract from "The BOXES Methodology". (Chapter 2. The Game Metaphor). London: Springer Professional. ISBN 978-1849965279.

- http://chalkdustmagazine.com/features/menace-machine-educable-noughts-crosses-engine

- http://people.csail.mit.edu/brooks/idocs/matchbox.pdf

- "MENACE 2, an artificial intelligence made of wooden drawers and coloured beads". 12 April 2016. Archived from the original on 12 July 2020. Retrieved 22 May 2021.

- Cappiell, Emily (30 November 2020). "How to Win Tic-Tac-Toe: The Strategies You Need to Master". Reader's Digest. Archived from the original on 22 January 2021. Retrieved 6 February 2021.

- Trial and Error, Michie Donald, Penguin Science Surveys 1961 Vol 2

- Yam, Jim Y. F.; Chow, Tommy W. S. (1 January 2000). "A weight initialization method for improving training speed in feedforward neural network". Neurocomputing. 30 (1): 219–232. doi:10.1016/S0925-2312(99)00127-7. ISSN 0925-2312.

- Sutton, Richard S.; Barto, Andrew G. (2018). Reinforcement Learning: An Introduction. MIT Press. p. 753. ISBN 978-0262039246.

- "Professor Donald Michie". The Daily Telegraph. 8 July 2007. ISSN 0307-1235. Archived from the original on 11 June 2020. Retrieved 11 June 2020.

- Scaruffi, Piero (2014). Intelligence is not Artificial – Why the Singularity is not coming any time soon and other Meditations on the Post-Human Condition and the Future of Intelligence. Omniware. p. 27. ISBN 978-0976553199.

- Zhao, Yibo (1 December 2013). "Machine Educable Engine on Noughts And Crosses in Modelling Study". University of Warwick. Archived from the original on 11 June 2020. Retrieved 22 May 2021.

- "AI Topics.. Tic-Tac-Toe strategy in Computational Thinking, Introduction, MENACE". Archived from the original on 8 February 2021. Retrieved 22 May 2021.

- Ute Schmid – "Interactive Learning with Mutual Explanations" (How Humans and Machine Learning Systems can Profit From Each Other) – University of Bamberg, Germany Link

- Scroggs, Matthew (3 July 2017). ‘Building a MENACE machine’, Matthew Scroggs, University College London (Youtube).

- "Inspiring the Next Generation of Computer Scientists | King's Worcester". King's Worcester. 11 November 2019. Archived from the original on 12 June 2020. Retrieved 12 June 2020.

- Scroggs, Matthew (27 December 2019). "Visualising MENACE's learning". mscroggs.co.uk. Archived from the original on 11 July 2020. Retrieved 30 July 2020.

- @rsi_science (27 December 2019). "Menace Machine-creator pitched up with his 304 matchboxes to explain how he made it" (Tweet). Retrieved 14 October 2020 – via Twitter.

- "QI XL Series T, Ticks Tax Toes". BBC. 6 January 2023. Retrieved 4 February 2023.

Sources

- Michie, D.; Chambers, R. A. (1968), "BOXES: An Experiment in Adaptive Control", Machine Intelligence, Edinburgh, UK: Oliver and Boyd, S2CID 18229198 – via Semantic Scholar, Michie and R. A Chambers' paper on the AI implications of BOXES and MENACE.

- Russell, David W. (2012), The BOXES Methodology: Black Box Dynamic Control, Springer London, ISBN 978-1849965286, a book on the "Boxes" algorithm employed by MENACE.