NSynth

NSynth (a portmanteau of "Neural Synthesis") is a WaveNet-based autoencoder for synthesizing audio, described in a paper published in April 2017.[1]

| Original author(s) | Google Brain, DeepMind, Magenta |
|---|---|
| Initial release | 6 April 2017 |
| Repository | github |
| Written in | Python |
| Type | Software synthesizer |
| License | Apache 2.0 |
| Website | magenta |

Overview

The model generates sounds through neural network-based synthesis, employing a WaveNet-style autoencoder to learn its own temporal embeddings from four different sounds.[2][3] Google later released an open-source hardware interface for the algorithm, called NSynth Super,[4] which notable musicians such as Grimes and YACHT have used to generate experimental music with artificial intelligence.[5][6] The research and development of the algorithm was a collaboration between Google Brain, Magenta and DeepMind.[7]

Technology

Dataset

The NSynth dataset comprises 305,979 one-shot instrumental notes, each with a unique pitch, timbre, and envelope, sampled from 1,006 instruments in commercial sample libraries.[8] For each instrument, the dataset contains four-second, 16 kHz audio snippets covering every pitch of a standard MIDI piano at five different velocities.[9] The dataset is available under a Creative Commons Attribution 4.0 International (CC BY 4.0) license.[10]
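The figures above imply some simple arithmetic about the size of each note and of the dataset as a whole. The following sketch works it out; the MIDI pitch range 21 to 108 (the 88 keys of a piano) is an assumption not stated in the text above, and all variable names are illustrative.

```python
# Figures taken from the dataset description above.
SAMPLE_RATE_HZ = 16_000
NOTE_SECONDS = 4
NUM_NOTES = 305_979
NUM_INSTRUMENTS = 1_006
NUM_VELOCITIES = 5

# Assumption: "every pitch of a standard MIDI piano" means MIDI notes 21..108.
MIDI_PIANO_PITCHES = range(21, 109)

# Each note is a fixed-length waveform.
samples_per_note = SAMPLE_RATE_HZ * NOTE_SECONDS  # 64000 samples

# Upper bound if every instrument were sampled at every pitch and velocity.
max_notes = NUM_INSTRUMENTS * len(MIDI_PIANO_PITCHES) * NUM_VELOCITIES  # 442640

# Fraction of that upper bound actually present in the dataset.
coverage = NUM_NOTES / max_notes

# Total audio duration in hours.
total_hours = NUM_NOTES * NOTE_SECONDS / 3600

print(f"samples per note: {samples_per_note}")
print(f"upper bound on notes: {max_notes}")
print(f"coverage of upper bound: {coverage:.1%}")
print(f"total audio: {total_hours:.0f} hours")
```

Under these assumptions the dataset holds fewer notes than the upper bound, which is consistent with some instruments not being sampled across the full 88-key range.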

Machine learning model

Both a spectral autoencoder model and a WaveNet autoencoder model are publicly available on GitHub.[11] The baseline spectral model uses a spectrogram with fft_size 1024 and hop_size 256, a mean squared error (MSE) loss on the magnitudes, and the Griffin-Lim algorithm for reconstruction. The WaveNet model trains on mu-law encoded waveform chunks of 6144 samples and learns 16-dimensional embeddings that are downsampled by a factor of 512 in time.[12]
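To make these parameters concrete, the following is a minimal NumPy sketch, not the published implementation. It shows mu-law companding, the embedding shape implied by the chunk size and downsampling factor, and the magnitude spectrogram dimensions of the baseline. The choice of mu = 255 (8-bit quantization), the Hann window, and the absence of padding are assumptions, and all function names are hypothetical.

```python
import numpy as np

# Parameters quoted in the text above.
SAMPLE_RATE = 16_000
FFT_SIZE = 1024
HOP_SIZE = 256
CHUNK_SIZE = 6144
EMBED_DIM = 16
DOWNSAMPLE = 512


def mu_law_encode(x, mu=255):
    """Compand audio in [-1, 1] and quantize to integers in [0, mu].

    mu=255 (8-bit) is an assumption; the text only says "mu-law encoded".
    """
    x = np.clip(x, -1.0, 1.0)
    companded = np.sign(x) * np.log1p(mu * np.abs(x)) / np.log1p(mu)
    return np.floor((companded + 1.0) / 2.0 * mu + 0.5).astype(np.int64)


def mu_law_decode(q, mu=255):
    """Invert mu_law_encode, up to quantization error."""
    companded = 2.0 * q.astype(np.float64) / mu - 1.0
    return np.sign(companded) * np.expm1(np.abs(companded) * np.log1p(mu)) / mu


def magnitude_spectrogram(x, fft_size=FFT_SIZE, hop_size=HOP_SIZE):
    """STFT magnitudes with a Hann window and no padding (both assumptions)."""
    n_frames = 1 + (len(x) - fft_size) // hop_size
    window = np.hanning(fft_size)
    frames = np.stack(
        [x[i * hop_size : i * hop_size + fft_size] * window for i in range(n_frames)]
    )
    return np.abs(np.fft.rfft(frames, axis=1))


# One training chunk yields 6144 / 512 = 12 embedding frames of 16 values each.
frames_per_chunk = CHUNK_SIZE // DOWNSAMPLE
embedding_shape = (frames_per_chunk, EMBED_DIM)

# A full four-second note (64000 samples) yields 125 frames, i.e. 2000 values.
frames_per_note = (4 * SAMPLE_RATE) // DOWNSAMPLE
values_per_note = frames_per_note * EMBED_DIM

# Example input: a 440 Hz sine wave one chunk long.
t = np.arange(CHUNK_SIZE) / SAMPLE_RATE
chunk = 0.8 * np.sin(2 * np.pi * 440.0 * t)
codes = mu_law_encode(chunk)
roundtrip_error = np.max(np.abs(mu_law_decode(codes) - chunk))
spec = magnitude_spectrogram(chunk)

print("embedding shape per chunk:", embedding_shape)
print("embedding values per note:", values_per_note)
print("spectrogram shape:", spec.shape)
print("max mu-law round-trip error:", roundtrip_error)
```

A baseline-style reconstruction loss would then be the mean squared error between two such magnitude arrays, for example `np.mean((spec_a - spec_b) ** 2)`. Because only magnitudes are modeled, the phase has to be estimated afterwards, which is the role of the Griffin-Lim algorithm.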

NSynth Super

| NSynth Super | |

|---|---|

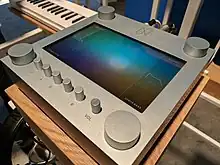

NSynth Super Front Panel | |

| Manufacturer | Google Brain, Google Creative Lab |

| Dates | 2018 |

| Technical specifications | |

| Synthesis type | Neural Network Sample-based synthesis |

| Input/output | |

| Left-hand control | Pitch bend, ADSR |

| External control | MIDI |

In 2018, Google released NSynth Super, a hardware interface for the NSynth algorithm designed to give musicians an accessible, physical way to use the algorithm in their artistic production.[13][14]

The design files, source code and internal components are released under the open-source Apache License 2.0,[15] enabling hobbyists and musicians to freely build and use the instrument.[16] At the core of NSynth Super is a Raspberry Pi, extended with a custom printed circuit board to accommodate the interface elements.[17]

Influence

Despite not being publicly available as a commercial product, NSynth Super has been used by notable artists, including Grimes and YACHT.[18][19]

Grimes reported using the instrument on her 2020 studio album Miss Anthropocene.[5]

YACHT reported making extensive use of NSynth Super on their album Chain Tripping.[20]

Claire L. Evans of YACHT compared the potential influence of the instrument to that of the Roland TR-808.[21]

The NSynth Super design was honored with a D&AD Yellow Pencil award in 2018.[22]

References

1. Engel, Jesse; Resnick, Cinjon; Roberts, Adam; Dieleman, Sander; Eck, Douglas; Simonyan, Karen; Norouzi, Mohammad (2017). "Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders". arXiv:1704.01279 [cs.LG].

2. Engel, Jesse; Resnick, Cinjon; Roberts, Adam; Dieleman, Sander; Eck, Douglas; Simonyan, Karen; Norouzi, Mohammad (2017). "Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders". research.google. arXiv:1704.01279.

3. van den Oord, Aaron; Dieleman, Sander; Zen, Heiga; Simonyan, Karen; Vinyals, Oriol; Graves, Alex; Kalchbrenner, Nal; Senior, Andrew; Kavukcuoglu, Koray (2016). "WaveNet: A Generative Model for Raw Audio". arXiv:1609.03499 [cs.SD].

4. "Google's open-source neural synth is creating totally new sounds". Wired UK.

5. "73 | Grimes (c) on Music, Creativity, and Digital Personae – Sean Carroll". www.preposterousuniverse.com.

6. Mattise, Nathan (2019-08-31). "How YACHT fed their old music to the machine and got a killer new album". Ars Technica. Retrieved 2022-11-08.

7. "NSynth: Neural Audio Synthesis". Magenta. 6 April 2017.

8. "NSynth Dataset". Machine Learning Datasets. Retrieved 2022-11-08.

9. Ramires, António; Serra, Xavier (2019). "Data Augmentation for Instrument Classification Robust to Audio Effects". arXiv:1907.08520 [cs.SD].

10. "The NSynth Dataset". tensorflow.org. 5 April 2017.

11. "NSynth: Neural Audio Synthesis". GitHub.

12. Engel, Jesse; Resnick, Cinjon; Roberts, Adam; Dieleman, Sander; Eck, Douglas; Simonyan, Karen; Norouzi, Mohammad (2017). "Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders". arXiv:1704.01279 [cs.LG].

13. "NSynth Super is an AI-backed touchscreen synth". The Verge. 13 March 2018.

14. "Google built a musical instrument that uses AI and released the plans so you can make your own". CNBC. 13 March 2018.

15. "googlecreativelab/open-nsynth-super". April 1, 2021 – via GitHub.

16. "Open NSynth Super". hackaday.io. Retrieved 2022-11-08.

17. "NSYNTH SUPER Hardware". GitHub.

18. Mattise, Nathan. "How YACHT Used Machine Learning to Create Their New Album". Wired. ISSN 1059-1028. Retrieved 2023-01-19.

19. "Cover Story: Grimes is ready to play the villain". Crack Magazine. Retrieved 2023-01-19.

20. "What Machine-Learning Taught the Band YACHT About Themselves". Los Angeleno. 2019-09-18. Retrieved 2023-01-19.

21. "Music and Machine Learning (Google I/O'19)". Retrieved 2023-01-19.

22. "NSynth Super | Google Creative Lab | Google | D&AD Awards 2018 Pencil Winner | Interactive Design for Products | D&AD". www.dandad.org. Retrieved 2023-01-19.

Further reading

- Engel, Jesse; Resnick, Cinjon; Roberts, Adam; Dieleman, Sander; Eck, Douglas; Simonyan, Karen; Norouzi, Mohammad (2017). "Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders". arXiv:1704.01279 [cs.LG].