Usability

Usability can be described as the capacity of a system to provide a condition for its users to perform tasks safely, effectively, and efficiently while enjoying the experience.[1] In software engineering, usability is the degree to which software can be used by specified consumers to achieve quantified objectives with effectiveness, efficiency, and satisfaction in a quantified context of use.[2]

The object of use can be a software application, website, book, tool, machine, process, vehicle, or anything a human interacts with. A usability study may be conducted as a primary job function by a usability analyst or as a secondary job function by designers, technical writers, marketing personnel, and others. It is widely used in consumer electronics, communication, and knowledge transfer objects (such as a cookbook, a document or online help) and mechanical objects such as a door handle or a hammer.

Usability includes methods of measuring usability, such as needs analysis[3] and the study of the principles behind an object's perceived efficiency or elegance. In human-computer interaction and computer science, usability studies the elegance and clarity with which the interaction with a computer program or a web site (web usability) is designed. Usability considers user satisfaction and utility as quality components, and aims to improve user experience through iterative design.[4]

Introduction

The primary notion of usability is that an object designed with a generalized user's psychology and physiology in mind is, for example:

- More efficient to use—takes less time to accomplish a particular task

- Easier to learn—operation can be learned by observing the object

- More satisfying to use

Complex computer systems find their way into everyday life, and at the same time the market is saturated with competing brands. This has made usability more popular and widely recognized in recent years, as companies see the benefits of researching and developing their products with user-oriented methods instead of technology-oriented methods. By understanding and researching the interaction between product and user, the usability expert can also provide insight that is unattainable by traditional company-oriented market research. For example, after observing and interviewing users, the usability expert may identify needed functionality or design flaws that were not anticipated. A method called contextual inquiry does this in the naturally occurring context of the user's own environment. In the user-centered design paradigm, the product is designed with its intended users in mind at all times. In the user-driven or participatory design paradigm, some of the users become actual or de facto members of the design team.[5]

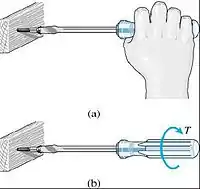

The term user friendly is often used as a synonym for usable, though it may also refer to accessibility. Usability describes the quality of user experience across websites, software, products, and environments. There is no consensus about the relation of the terms ergonomics (or human factors) and usability. Some think of usability as the software specialization of the larger topic of ergonomics. Others view these topics as tangential, with ergonomics focusing on physiological matters (e.g., turning a door handle) and usability focusing on psychological matters (e.g., recognizing that a door can be opened by turning its handle). Usability is also important in website development (web usability). According to Jakob Nielsen, "Studies of user behavior on the Web find a low tolerance for difficult designs or slow sites. People don't want to wait. And they don't want to learn how to use a home page. There's no such thing as a training class or a manual for a Web site. People have to be able to grasp the functioning of the site immediately after scanning the home page—for a few seconds at most."[6] Otherwise, most casual users simply leave the site and browse or shop elsewhere.

Usability can also include the concept of prototypicality, which is how much a particular thing conforms to the expected shared norm. For instance, in website design, users prefer sites that conform to recognised design norms.[7]

Definition

ISO defines usability as "The extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency, and satisfaction in a specified context of use." The word "usability" also refers to methods for improving ease-of-use during the design process. Usability consultant Jakob Nielsen and computer science professor Ben Shneiderman have written (separately) about a framework of system acceptability, where usability is a part of "usefulness" and is composed of:[8]

- Learnability: How easy is it for users to accomplish basic tasks the first time they encounter the design?

- Efficiency: Once users have learned the design, how quickly can they perform tasks?

- Memorability: When users return to the design after a period of not using it, how easily can they re-establish proficiency?

- Errors: How many errors do users make, how severe are these errors, and how easily can they recover from the errors?

- Satisfaction: How pleasant is it to use the design?

Usability is often associated with the functionalities of the product (cf. the ISO definition above), in addition to being solely a characteristic of the user interface (cf. the framework of system acceptability, also above, which separates usefulness into usability and utility). For example, in the context of mainstream consumer products, an automobile lacking a reverse gear could be considered unusable according to the former view, and lacking in utility according to the latter view. When evaluating user interfaces for usability, the definition can be as simple as "the perception of a target user of the effectiveness (fit for purpose) and efficiency (work or time required to use) of the Interface". Each component may be measured subjectively against criteria, e.g., Principles of User Interface Design, to provide a metric, often expressed as a percentage.

It is important to distinguish between usability testing and usability engineering. Usability testing is the measurement of ease of use of a product or piece of software. In contrast, usability engineering (UE) is the research and design process that ensures a product with good usability. Usability is a non-functional requirement. As with other non-functional requirements, usability cannot be directly measured but must be quantified by means of indirect measures or attributes such as the number of reported problems with the ease of use of a system.

Intuitive interaction or intuitive use

The term intuitive is often listed as a desirable trait in usable interfaces, sometimes used as a synonym for learnable. In the past, Jef Raskin discouraged using this term in user interface design, claiming that easy-to-use interfaces are often easy because of the user's exposure to previous similar systems, and that the term 'familiar' should therefore be preferred.[9] As an example, two vertical lines "||" on media player buttons do not intuitively mean "pause"; they do so by convention. This association between intuitive use and familiarity has since been empirically demonstrated in multiple studies by a range of researchers across the world, and intuitive interaction is accepted in the research community as being use of an interface based on past experience with similar interfaces or something else, often not fully conscious,[10] and sometimes involving a feeling of "magic"[11] since the source of the knowledge itself may not be consciously available to the user. Researchers have also investigated intuitive interaction for older people,[12] people living with dementia,[13] and children.[14]

Some have argued that aiming for "intuitive" interfaces (based on reusing existing skills with interaction systems) could lead designers to discard a better design solution only because it would require a novel approach, and to stick with boring designs. However, applying familiar features in a new interface has been shown not to result in boring design if designers use creative approaches rather than simple copying.[15] The throwaway remark that "the only intuitive interface is the nipple; everything else is learned"[16] is still occasionally mentioned. Breastfeeding mothers and lactation consultants would point out that this is inaccurate, since the nipple does in fact require learning on both sides. In 1992, Bruce Tognazzini even denied the existence of "intuitive" interfaces, since such interfaces must be able to intuit, i.e., "perceive the patterns of the user's behavior and draw inferences."[17] Instead, he advocated the term "intuitable," i.e., "that users could intuit the workings of an application by seeing it and using it". However, the term intuitive interaction has become well accepted in the research community over the past 20 or so years and, although not perfect, it should probably be accepted and used.

ISO standards

ISO/TR 16982:2002 standard

ISO/TR 16982:2002 ("Ergonomics of human-system interaction - Usability methods supporting human-centered design") is a technical report from the International Organization for Standardization (ISO) that provides information on human-centered usability methods that can be used for design and evaluation. It details the advantages, disadvantages, and other factors relevant to using each usability method. It explains the implications of the stage of the life cycle and the individual project characteristics for the selection of usability methods and provides examples of usability methods in context. The main users of ISO/TR 16982:2002 are project managers. It therefore addresses technical human factors and ergonomics issues only to the extent necessary to allow managers to understand their relevance and importance in the design process as a whole. The guidance in ISO/TR 16982:2002 can be tailored for specific design situations by using the lists of issues characterizing the context of use of the product to be delivered. Selection of appropriate usability methods should also take account of the relevant life-cycle process. ISO/TR 16982:2002 is restricted to methods that are widely used by usability specialists and project managers. It does not specify the details of how to implement or carry out the usability methods described.

ISO 9241 standard

ISO 9241 is a multi-part standard that covers a number of aspects of people working with computers. Although originally titled Ergonomic requirements for office work with visual display terminals (VDTs), it has been retitled to the more generic Ergonomics of Human System Interaction.[18] As part of this change, ISO is renumbering some parts of the standard so that it can cover more topics, e.g. tactile and haptic interaction. The first part to be renumbered was part 10 in 2006, now part 110.[19]

IEC 62366

IEC 62366-1:2015 + COR1:2016 & IEC/TR 62366-2 provide guidance on usability engineering specific to a medical device.

Designing for usability

Any system or device designed for use by people should be easy to use, easy to learn, easy to remember (the instructions), and helpful to users. John Gould and Clayton Lewis recommend that designers striving for usability follow these three design principles:[20]

- Early focus on end users and the tasks they need the system/device to do

- Empirical measurement using quantitative or qualitative measures

- Iterative design, in which the designers work in a series of stages, improving the design each time

Early focus on users and tasks

The design team should be user-driven and in direct contact with potential users. Several evaluation methods, including personas, cognitive modeling, inspection, inquiry, prototyping, and testing methods, may contribute to understanding potential users and their perceptions of how well the product or process works. Usability considerations, such as who the users are and their experience with similar systems, must be examined. As part of understanding users, this knowledge must "...be played against the tasks that the users will be expected to perform."[20] This includes the analysis of what tasks the users will perform, which are most important, and what decisions the users will make while using the system. Designers must understand how cognitive and emotional characteristics of users will relate to a proposed system. One way to stress the importance of these issues in the designers' minds is to use personas, which are made-up representative users. See below for further discussion of personas. Another more expensive but more insightful method is to have a panel of potential users work closely with the design team from the early stages.[21]

Empirical measurement

Test the system early on, and test the system on real users using behavioral measurements. This includes testing the system for both learnability and usability (see Evaluation methods). It is important in this stage to use quantitative usability specifications, such as time and errors to complete tasks and number of users to test, as well as to examine the performance and attitudes of the users testing the system.[21] Finally, "reviewing or demonstrating" a system before the user tests it can produce misleading results. The emphasis of empirical measurement is on measurement, both informal and formal, which can be carried out through a variety of evaluation methods.[20]

Iterative design

Iterative design is a design methodology based on a cyclic process of prototyping, testing, analyzing, and refining a product or process. Based on the results of testing the most recent iteration of a design, changes and refinements are made. This process is intended to ultimately improve the quality and functionality of a design. In iterative design, interaction with the designed system is used as a form of research for informing and evolving a project, as successive versions, or iterations, of a design are implemented. The key requirements for iterative design are: identification of required changes, an ability to make changes, and a willingness to make changes. When a problem is encountered, there is no set method to determine the correct solution. Rather, there are empirical methods that can be used during system development or after the system is delivered, which is usually a more inopportune time. Ultimately, iterative design works towards meeting goals such as making the system user friendly, easy to use, easy to operate, simple, etc.[21]

Evaluation methods

There are a variety of usability evaluation methods. Certain methods use data from users, while others rely on usability experts. There are usability evaluation methods for all stages of design and development, from product definition to final design modifications. When choosing a method, consider cost, time constraints, and appropriateness. For a brief overview of methods, see Comparison of usability evaluation methods or continue reading below. Usability methods can be further classified into the subcategories below.

Cognitive modeling methods

Cognitive modeling involves creating a computational model to estimate how long it takes people to perform a given task. Models are based on psychological principles and experimental studies to determine times for cognitive processing and motor movements. Cognitive models can be used to improve user interfaces or predict problem errors and pitfalls during the design process. A few examples of cognitive models include:

- Parallel design

With parallel design, several people create an initial design from the same set of requirements. Each person works independently, and when finished, shares concepts with the group. The design team considers each solution, and each designer uses the best ideas to further improve their own solution. This process helps generate many different, diverse ideas, and ensures that the best ideas from each design are integrated into the final concept. This process can be repeated several times until the team is satisfied with the final concept.

- GOMS

GOMS stands for goals, operators, methods, and selection rules. It is a family of techniques that analyzes the user complexity of interactive systems. Goals are what the user must accomplish. An operator is an action performed in pursuit of a goal. A method is a sequence of operators that accomplishes a goal. Selection rules specify which method satisfies a given goal, based on context.
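The four GOMS components can be made concrete with a small data structure. The following Python sketch is only an illustration: the class names, the "delete word" goal, its two methods, and the `hands_on_keyboard` context flag are all hypothetical and are not taken from any particular GOMS tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Method:
    """A named sequence of operators that accomplishes a goal."""
    name: str
    operators: List[str]


@dataclass
class Goal:
    """What the user must accomplish, plus candidate methods and a selection rule."""
    name: str
    methods: List[Method]
    # Selection rule: picks one method based on the context of use.
    select: Callable[[Dict[str, bool]], Method]


# Hypothetical example: deleting a word in a text editor.
by_keyboard = Method("keyboard", ["press Ctrl+Backspace"])
by_mouse = Method("mouse", ["point at word", "double-click", "press Delete"])


def choose_delete_method(context: Dict[str, bool]) -> Method:
    # Hypothetical rule: stay on the keyboard if the hands are already there.
    return by_keyboard if context.get("hands_on_keyboard", False) else by_mouse


delete_word = Goal("delete word", [by_keyboard, by_mouse], choose_delete_method)

chosen = delete_word.select({"hands_on_keyboard": True})
print(chosen.name, chosen.operators)
```

Keeping the selection rule as a function of context mirrors the definition above: the same goal can be met by different methods depending on the situation.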

- Human processor model

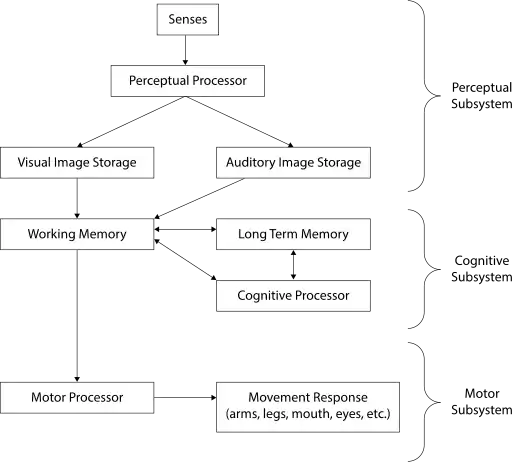

Sometimes it is useful to break a task down and analyze each individual aspect separately. This helps the tester locate specific areas for improvement. To do this, it is necessary to understand how the human brain processes information. A model of the human processor is shown above.

Many studies have been done to estimate the cycle times, decay times, and capacities of each of these processors. Variables that affect these can include subject age, aptitudes, ability, and the surrounding environment. For a younger adult, reasonable estimates are:

| Parameter | Mean | Range |
|---|---|---|
| Eye movement time | 230 ms | 70–700 ms |
| Decay half-life of visual image storage | 200 ms | 90–1000 ms |
| Perceptual processor cycle time | 100 ms | 50–200 ms |
| Cognitive processor cycle time | 70 ms | 25–170 ms |
| Motor processor cycle time | 70 ms | 30–100 ms |
| Effective working memory capacity | 2 items | 2–3 items |

Long-term memory is believed to have an infinite capacity and decay time.[22]
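As a rough illustration of how these estimates can be combined, the following Python sketch adds one cycle of each processor to approximate the time for a single perceive, decide, and act response. The means and ranges come from the table above; the function name and the assumption that the three stages run strictly in series are illustrative simplifications.

```python
# (mean, fastest, slowest) cycle times in milliseconds, from the table above.
CYCLE_MS = {
    "perceptual": (100, 50, 200),
    "cognitive": (70, 25, 170),
    "motor": (70, 30, 100),
}


def reaction_time_ms(n_cognitive_cycles=1):
    """Estimate (mean, fastest, slowest) time in ms for one perceive-decide-act pass.

    Assumes the processors run one after another, with one perceptual cycle,
    n_cognitive_cycles cognitive cycles, and one motor cycle.
    """
    counts = {"perceptual": 1, "cognitive": n_cognitive_cycles, "motor": 1}
    mean = sum(CYCLE_MS[p][0] * n for p, n in counts.items())
    fastest = sum(CYCLE_MS[p][1] * n for p, n in counts.items())
    slowest = sum(CYCLE_MS[p][2] * n for p, n in counts.items())
    return mean, fastest, slowest


print(reaction_time_ms())   # (240, 105, 470)
print(reaction_time_ms(2))  # (310, 130, 640)
```

Under these assumptions, a simple reaction takes about 240 ms on average, and each extra decision step adds roughly one cognitive cycle.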

- Keystroke level modeling

Keystroke level modeling is essentially a less comprehensive version of GOMS that makes simplifying assumptions in order to reduce calculation time and complexity.
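A keystroke-level estimate is essentially a sum of fixed operator times over the steps of a task. The Python sketch below shows the arithmetic only. The operator times are commonly cited textbook estimates and should be treated as assumptions to be calibrated for a given user population; the task encoding is hypothetical.

```python
# Assumed operator times in seconds (commonly cited estimates, not measured here).
OPERATOR_SECONDS = {
    "K": 0.28,  # press a key or button (average typist)
    "P": 1.10,  # point at a target with a mouse
    "H": 0.40,  # move hands between keyboard and mouse
    "M": 1.35,  # mental preparation
}


def klm_estimate(sequence):
    """Return the estimated task time in seconds for a string of operator codes."""
    return round(sum(OPERATOR_SECONDS[op] for op in sequence), 2)


# Hypothetical task: think, reach for the mouse, point at a field, click
# (counted as a K), return to the keyboard, then type four characters.
task = "MHPK" + "H" + "KKKK"
print(klm_estimate(task))  # 4.65
```

Comparing such estimates for two candidate designs gives a quick, if coarse, indication of which one is likely to be faster for practiced users.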

Inspection methods

These usability evaluation methods involve observation of users by an experimenter, or the testing and evaluation of a program by an expert reviewer. They provide more quantitative data as tasks can be timed and recorded.

- Card sorts

Card sorting is a way to involve users in grouping information for a website's usability review. Participants in a card sorting session are asked to organize the content from a website in a way that makes sense to them. Participants review items from the website and then group these items into categories. Card sorting helps to learn how users think about the content and how they would organize the information on the website. It helps to build the structure for a website, decide what to put on the home page, and label the home page categories. It also helps to ensure that information is organized on the site in a way that is logical to users.
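One simple way to analyze card sort results is to count how often participants place two cards in the same group. The Python sketch below illustrates this; the card names and the three participants' groupings are invented for the example.

```python
from collections import Counter
from itertools import combinations

# Hypothetical results: one list of piles (sets of cards) per participant.
sorts = [
    [{"Pricing", "Plans"}, {"Contact", "Support", "FAQ"}],
    [{"Pricing", "Plans", "FAQ"}, {"Contact", "Support"}],
    [{"Pricing", "Plans"}, {"Contact"}, {"Support", "FAQ"}],
]


def co_occurrence(sorts):
    """Count, for each pair of cards, how many participants grouped them together."""
    counts = Counter()
    for piles in sorts:
        for pile in piles:
            # Sort the names so each pair always has the same key order.
            for a, b in combinations(sorted(pile), 2):
                counts[(a, b)] += 1
    return counts


pairs = co_occurrence(sorts)
for (a, b), n in pairs.most_common():
    print(f"{a} + {b}: grouped together by {n} of {len(sorts)} participants")
```

Pairs that most participants group together are candidates for the same category on the site, while pairs with low counts probably belong apart.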

- Tree tests

Tree testing is a way to evaluate the effectiveness of a website's top-down organization. Participants are given "find it" tasks, then asked to drill down through successive text lists of topics and subtopics to find a suitable answer. Tree testing evaluates the findability and labeling of topics in a site, separate from its navigation controls or visual design.
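Scoring a tree test can be sketched as checking each participant's path through the topic tree against the intended destination. In the following Python example the site tree, the recorded paths, and the function names are hypothetical.

```python
# Hypothetical site tree: nested dicts, where a leaf topic is an empty dict.
TREE = {
    "Home": {
        "Products": {"Laptops": {}, "Phones": {}},
        "Support": {"Returns": {}, "Warranty": {}},
    }
}


def is_valid_path(tree, path):
    """Return True if the labels in path can be followed from the top of the tree."""
    node = tree
    for label in path:
        if label not in node:
            return False
        node = node[label]
    return True


def success_rate(tree, paths, target):
    """Fraction of participants whose valid path ends at the target topic."""
    hits = sum(1 for p in paths if p and is_valid_path(tree, p) and p[-1] == target)
    return hits / len(paths)


# Task: "Find out how to send back a faulty item" (intended answer: Returns).
paths = [
    ["Home", "Support", "Returns"],
    ["Home", "Products", "Laptops"],
    ["Home", "Support", "Returns"],
    ["Home", "Support", "Warranty"],
]
print(success_rate(TREE, paths, "Returns"))  # 0.5
```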

- Ethnography

Ethnographic analysis is derived from anthropology. Field observations are taken at a site of a possible user, which track the artifacts of work such as Post-It notes, items on desktop, shortcuts, and items in trash bins. These observations also gather the sequence of work and interruptions that determine the user's typical day.

- Heuristic evaluation

Heuristic evaluation is a usability engineering method for finding and assessing usability problems in a user interface design as part of an iterative design process. It involves having a small set of evaluators examine the interface using recognized usability principles (the "heuristics"). It is the most popular of the usability inspection methods, as it is quick, cheap, and easy. Heuristic evaluation was developed to aid in the design of computer user interfaces. It relies on expert reviewers to discover usability problems and then categorize and rate them by a set of principles (heuristics). Jakob Nielsen's list of ten heuristics is the most commonly used in industry. These are ten general principles for user interface design. They are called "heuristics" because they are more in the nature of rules of thumb than specific usability guidelines.

- Visibility of system status: The system should always keep users informed about what is going on, through appropriate feedback within reasonable time.

- Match between system and the real world: The system should speak the users' language, with words, phrases and concepts familiar to the user, rather than system-oriented terms. Follow real-world conventions, making information appear in a natural and logical order.

- User control and freedom: Users often choose system functions by mistake and will need a clearly marked "emergency exit" to leave the unwanted state without having to go through an extended dialogue. Support undo and redo.

- Consistency and standards: Users should not have to wonder whether different words, situations, or actions mean the same thing. Follow platform conventions.

- Error prevention: Even better than good error messages is a careful design that prevents a problem from occurring in the first place. Either eliminate error-prone conditions or check for them and present users with a confirmation option before they commit to the action.

- Recognition rather than recall:[23] Minimize the user's memory load by making objects, actions, and options visible. The user should not have to remember information from one part of the dialogue to another. Instructions for use of the system should be visible or easily retrievable whenever appropriate.

- Flexibility and efficiency of use: Accelerators—unseen by the novice user—may often speed up the interaction for the expert user such that the system can cater to both inexperienced and experienced users. Allow users to tailor frequent actions.

- Aesthetic and minimalist design: Dialogues should not contain information that is irrelevant or rarely needed. Every extra unit of information in a dialogue competes with the relevant units of information and diminishes their relative visibility.

- Help users recognize, diagnose, and recover from errors: Error messages should be expressed in plain language (no codes), precisely indicate the problem, and constructively suggest a solution.

- Help and documentation: Even though it is better if the system can be used without documentation, it may be necessary to provide help and documentation. Any such information should be easy to search, focused on the user's task, list concrete steps to be carried out, and not be too large.

Thus, by determining which guidelines are violated, the usability of a device can be determined.
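The findings of several evaluators are typically pooled and grouped by the heuristic they violate. The Python sketch below shows one possible way to do this; the findings and the 0 to 4 severity scale are assumptions made for the example and are not prescribed by the text above.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical findings: (evaluator, heuristic violated, severity from 0 to 4).
findings = [
    ("A", "Visibility of system status", 3),
    ("B", "Visibility of system status", 4),
    ("A", "Error prevention", 2),
    ("C", "Consistency and standards", 1),
    ("C", "Error prevention", 3),
]


def summarize_findings(findings):
    """Return (heuristic, number of reports, mean severity), worst first."""
    by_heuristic = defaultdict(list)
    for _evaluator, heuristic, severity in findings:
        by_heuristic[heuristic].append(severity)
    rows = [(h, len(s), mean(s)) for h, s in by_heuristic.items()]
    return sorted(rows, key=lambda row: row[2], reverse=True)


for heuristic, count, severity in summarize_findings(findings):
    print(f"{heuristic}: {count} report(s), mean severity {severity}")
```

Sorting by mean severity gives the design team a rough priority list, although the number of independent reports per heuristic is also worth considering.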

- Usability inspection

Usability inspection is a review of a system based on a set of guidelines. The review is conducted by a group of experts who are deeply familiar with the concepts of usability in design. The experts focus on a list of areas in design that have been shown to be troublesome for users.

- Pluralistic inspection

Pluralistic inspections are meetings where users, developers, and human factors specialists meet to discuss and evaluate a task scenario step by step. The more people who inspect the scenario, the higher the probability of finding problems. In addition, the more interaction there is in the team, the faster the usability issues are resolved.

- Consistency inspection

In consistency inspection, expert designers review products or projects to ensure consistency across multiple products, checking whether each one does things in the same way as their own designs.

- Activity Analysis

Activity analysis is a usability method used in the preliminary stages of development to get a sense of the situation. It involves an investigator observing users as they work in the field. Also referred to as user observation, it is useful for specifying user requirements and studying currently used tasks and subtasks. The data collected are qualitative and useful for defining the problem. It should be used to frame what is needed, or "What do we want to know?"

Inquiry methods

The following usability evaluation methods involve collecting qualitative data from users. Although the data collected is subjective, it provides valuable information on what the user wants.

- Task analysis

Task analysis means learning about users' goals and users' ways of working. Task analysis can also mean figuring out what more specific tasks users must do to meet those goals and what steps they must take to accomplish those tasks. Along with user and task analysis, a third analysis is often used: understanding users' environments (physical, social, cultural, and technological environments).

- Focus groups

A focus group is a focused discussion where a moderator leads a group of participants through a set of questions on a particular topic. Although typically used as a marketing tool, focus groups are sometimes used to evaluate usability. Used in the product definition stage, a group of 6 to 10 users is gathered to discuss what they desire in a product. An experienced focus group facilitator is hired to guide the discussion to areas of interest for the developers. Focus groups are typically videotaped to help get verbatim quotes, and clips are often used to summarize opinions. The data gathered is not usually quantitative, but can help get an idea of a target group's opinion.

- Questionnaires/surveys

Surveys have the advantages of being inexpensive, requiring no testing equipment, and producing results that reflect the users' opinions. When written carefully and given to actual users who have experience with the product and knowledge of design, surveys provide useful feedback on the strong and weak areas of the usability of a design. This is a very common method, and it often does not appear to be a survey at all, but just a warranty card.

Prototyping methods

It is often very difficult for designers to conduct usability tests with the exact system being designed. Cost, size, and design constraints usually lead the designer to create a prototype of the system. Instead of creating the complete final system, the designer may test different sections of the system, making several small models of its components. Prototyping is an attitude and an output, as it is a process for generating and reflecting on tangible ideas by allowing failure to occur early.[24] Prototyping helps people to see what could be, to communicate a shared vision, and to give shape to the future. Usability prototypes may take the form of paper models, index cards, hand-drawn models, or storyboards.[25] Prototypes can be modified quickly, are often faster and easier to create with less time invested by designers, and make designers more willing to change the design. However, they are sometimes not an adequate representation of the whole system, are often not durable, and may produce test results that do not match those of the actual system.

The Tool Kit Approach

This tool kit is a wide library of methods that use traditional programming languages, and it is primarily developed for computer programmers. The code created for testing in the tool kit approach can be used in the final product. However, to get the highest benefit from the tool, the user must be an expert programmer.[26]

The Parts Kit Approach

The two elements of this approach include a parts library and a method used for identifying the connection between the parts. This approach can be used by almost anyone and it is a great asset for designers with repetitive tasks.[26]

Animation Language Metaphor

This approach is a combination of the tool kit approach and the parts kit approach. Both the dialogue designers and the programmers are able to interact with this prototyping tool.[26]

Rapid prototyping

Rapid prototyping is a method used in early stages of development to validate and refine the usability of a system. It can be used to quickly and cheaply evaluate user-interface designs without the need for an expensive working model. This can help remove hesitation to change the design, since it is implemented before any real programming begins. One such method of rapid prototyping is paper prototyping.

Testing methods

These usability evaluation methods involve testing subjects to obtain the most quantitative data. Usually recorded on video, they provide task completion time and allow for observation of attitude. Regardless of how carefully a system is designed, all theories must be tested using usability tests. Usability tests involve typical users using the system (or product) in a realistic environment [see simulation]. Observation of the user's behavior, emotions, and difficulties while performing different tasks often identifies areas of improvement for the system.

Metrics

While conducting usability tests, designers must use usability metrics to identify what it is they are going to measure. These metrics are often variable, and change in conjunction with the scope and goals of the project. The number of subjects being tested can also affect usability metrics, as it is often easier to focus on specific demographics. Qualitative design phases, such as general usability (can the task be accomplished?) and user satisfaction, are also typically done with smaller groups of subjects.[27] Using inexpensive prototypes on small user groups provides more detailed information, because of the more interactive atmosphere and the designer's ability to focus more on the individual user.

As the designs become more complex, the testing must become more formalized. Testing equipment becomes more sophisticated and testing metrics become more quantitative. With a more refined prototype, designers often test effectiveness, efficiency, and subjective satisfaction by asking the user to complete various tasks. These categories are measured by the percentage of users who complete the task, how long it takes to complete the tasks, ratios of success to failure, time spent on errors, the number of errors, satisfaction rating scales, the number of times the user seems frustrated, etc.[28] Additional observations of the users give designers insight into navigation difficulties, controls, conceptual models, etc. The ultimate goal of analyzing these metrics is to find or create a prototype design that users like and use to successfully perform given tasks.[25] After conducting usability tests, it is important for a designer to record what was observed, in addition to why such behavior occurred, and to modify the model according to the results. It is often quite difficult to distinguish between errors caused by the design and errors made by the user. However, effective usability tests will not generate a solution to the problems, but provide modified design guidelines for continued testing.
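The measures listed above can be computed directly from recorded test sessions. The following Python sketch is a minimal illustration: the `Session` fields, the 1 to 5 satisfaction scale, and the sample data are assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """One participant's attempt at one task (hypothetical record layout)."""
    completed: bool
    seconds: float
    errors: int
    satisfaction: int  # assumed 1-5 rating given after the task


sessions = [
    Session(True, 42.0, 0, 5),
    Session(True, 58.0, 2, 4),
    Session(False, 120.0, 5, 2),
    Session(True, 50.0, 1, 4),
]


def summarize_sessions(sessions):
    """Compute simple effectiveness, efficiency, and satisfaction measures."""
    done = [s for s in sessions if s.completed]
    return {
        # Effectiveness: share of participants who completed the task.
        "completion_rate": len(done) / len(sessions),
        # Efficiency: mean time among those who completed it.
        "mean_time_completed_s": mean(s.seconds for s in done) if done else None,
        "mean_errors": mean(s.errors for s in sessions),
        # Satisfaction: mean of the post-task ratings.
        "mean_satisfaction": mean(s.satisfaction for s in sessions),
    }


print(summarize_sessions(sessions))
```

Reporting time only for completed attempts is one design choice among several; including failed attempts would mix task difficulty with time limits imposed by the test.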

- Remote usability testing

Remote usability testing (also known as unmoderated or asynchronous usability testing) involves the use of a specially modified online survey, allowing the quantification of user testing studies by providing the ability to generate large sample sizes, or a deep qualitative analysis without the need for dedicated facilities. This style of user testing also provides an opportunity to segment feedback by demographic, attitudinal, and behavioral type. The tests are carried out in the user's own environment (rather than in labs), which helps simulate real-life scenarios. This approach also provides a vehicle to easily solicit feedback from users in remote areas. There are two types: quantitative and qualitative. Quantitative studies use large sample sizes and task-based surveys, and are useful for validating suspected usability issues. Qualitative studies are best used as exploratory research, with small sample sizes but frequent, even daily, iterations. Qualitative studies usually allow for observing the respondent's screen and verbal think-aloud commentary (Screen Recording Video, SRV) and, for a richer level of insight, also include the webcam view of the respondent (Video-in-Video, ViV, sometimes referred to as Picture-in-Picture, PiP).

- Remote usability testing for mobile devices

The growth in mobile and associated platforms and services (e.g., mobile gaming experienced 20x growth in 2010-2012) has generated a need for unmoderated remote usability testing on mobile devices, for websites and especially for app interactions. One methodology consists of shipping cameras and special camera-holding fixtures to dedicated testers, and having them record the screen of the smartphone or tablet, usually with an HD camera. A drawback of this approach is that the respondent's finger movements can obscure the view of the screen, in addition to the bias and logistical issues inherent in shipping special hardware to selected respondents. A newer approach wirelessly projects the mobile device screen onto the respondent's computer desktop screen, while the respondent is recorded through their webcam. This yields a combined Video-in-Video view in which the participant and the screen interactions are seen simultaneously, together with the respondent's verbal think-aloud commentary.

- Thinking aloud

The think-aloud protocol is a method of gathering data that is used in both usability and psychology studies. It involves getting users to verbalize their thought processes (i.e., expressing their opinions, thoughts, anticipations, and actions)[29] as they perform a task or set of tasks. As a widespread method of usability testing, think-aloud gives researchers the ability to discover what users really think during task performance and completion.[29]

Often an instructor is present to prompt the user into being more vocal as they work. Similar to the subjects-in-tandem method, it is useful in pinpointing problems and is relatively simple to set up. Additionally, it can provide insight into the user's attitude, which usually cannot be discerned from a survey or questionnaire.

- RITE method

Rapid Iterative Testing and Evaluation (RITE)[30] is an iterative usability method similar to traditional "discount" usability testing. The tester and team must define a target population for testing, schedule participants to come into the lab, decide how the users' behaviors will be measured, construct a test script, and have participants engage in a verbal protocol (e.g., think-aloud). However, it differs from these methods in that it advocates changing the user interface as soon as a problem is identified and a solution is clear. Sometimes this can occur after observing as few as one participant. Once the data for a participant has been collected, the usability engineer and team decide whether to make any changes to the prototype before the next participant. The changed interface is then tested with the remaining users.

- Subjects-in-tandem or co-discovery

Subjects-in-tandem (also called co-discovery) is the pairing of subjects in a usability test to gather important information on the ease of use of a product. Subjects tend to discuss the tasks they have to accomplish out loud, and through these discussions observers learn where the problem areas of a design are. To encourage co-operative problem-solving between the two subjects, and the discussions that accompany it, the tests can be designed to make the subjects dependent on each other by assigning them complementary areas of responsibility (e.g., for testing of software, one subject may be put in charge of the mouse and the other of the keyboard).

- Component-based usability testing

Component-based usability testing is an approach which aims to test the usability of elementary units of an interaction system, referred to as interaction components. The approach includes component-specific quantitative measures based on user interaction recorded in log files, and component-based usability questionnaires.

Other methods

- Cognitive walkthrough

Cognitive walkthrough is a method of evaluating the user interaction of a working prototype or final product. It is used to evaluate the system's ease of learning. Cognitive walkthrough is useful for understanding the user's thought processes and decision making when interacting with a system, especially for first-time or infrequent users.

- Benchmarking

Benchmarking creates standardized test materials for a specific type of design. Four key characteristics are considered when establishing a benchmark: time to do the core task, time to fix errors, time to learn applications, and the functionality of the system. Once there is a benchmark, other designs can be compared to it to determine the usability of the system. In a benchmark study, many of the common objectives of usability studies, such as trying to understand user behavior or exploring alternative designs, must be put aside. Unlike many other usability methods or types of lab studies, benchmark studies more closely resemble true experimental psychology lab studies, with greater attention to detail on methodology, study protocol, and data analysis.[31]
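As a rough illustration of comparing a design against an established benchmark, the Python sketch below reports the relative change on each of the four characteristics. The metric names, the use of a count of supported functions as a stand-in for "functionality", and all numbers are hypothetical choices made for this example.

```python
# Hypothetical benchmark values for the four characteristics.
BENCHMARK = {
    "core_task_s": 90.0,        # time to do the core task (seconds)
    "error_fix_s": 30.0,        # time to fix errors (seconds)
    "learning_s": 600.0,        # time to learn the application (seconds)
    "functions_supported": 20,  # assumed proxy for functionality
}

# For time measures lower is better; for functionality higher is better.
HIGHER_IS_BETTER = {"functions_supported"}


def compare_to_benchmark(candidate, benchmark=BENCHMARK):
    """Return {metric: (relative_change, verdict)} for a candidate design."""
    report = {}
    for metric, base in benchmark.items():
        change = (candidate[metric] - base) / base
        if change == 0:
            verdict = "same"
        elif (change > 0) == (metric in HIGHER_IS_BETTER):
            verdict = "better"
        else:
            verdict = "worse"
        report[metric] = (change, verdict)
    return report


# Invented measurements for a candidate design.
candidate = {
    "core_task_s": 72.0,
    "error_fix_s": 36.0,
    "learning_s": 600.0,
    "functions_supported": 22,
}
report = compare_to_benchmark(candidate)
for metric, (change, verdict) in report.items():
    print(f"{metric}: {change:+.0%} ({verdict})")
```

A real benchmark study would also need enough participants and a fixed protocol for the differences to be meaningful; the sketch only shows the bookkeeping.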

- Meta-analysis

Meta-analysis is a statistical procedure that combines results across studies to integrate their findings. The term was coined in 1976 to describe a quantitative literature review. This type of evaluation is a powerful way to determine the usability of a device because it pools multiple studies to provide more precise quantitative support than any single study.
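One standard way to combine results is a fixed-effect, inverse-variance weighted average, in which more precise studies receive more weight. The Python sketch below shows that technique on invented numbers; it is offered as a common textbook approach, not as the specific procedure used in any cited usability study, and the study values are assumptions.

```python
import math


def pool_fixed_effect(studies):
    """Pool (effect, standard_error) pairs with inverse-variance weights.

    Returns the pooled effect and its standard error under a
    fixed-effect model (all studies estimate the same true effect).
    """
    if not studies:
        raise ValueError("at least one study is required")
    weights = [1.0 / se ** 2 for _, se in studies]
    total = sum(weights)
    pooled = sum(w * effect for w, (effect, _) in zip(weights, studies)) / total
    pooled_se = math.sqrt(1.0 / total)
    return pooled, pooled_se


# Invented example: seconds saved on a task in three studies,
# each given as (mean difference, standard error).
studies = [(10.0, 2.0), (14.0, 2.0), (12.0, 1.0)]
pooled, pooled_se = pool_fixed_effect(studies)
print(f"pooled effect = {pooled:.2f} s, standard error = {pooled_se:.2f} s")
```

When the studies differ substantially in population or method, a random-effects model is usually preferred over the fixed-effect assumption used here.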

- Persona

Personas are fictitious characters created to represent a site or product's different user types and their associated demographics and technographics. Alan Cooper introduced the concept of using personas as a part of interactive design in 1998 in his book The Inmates Are Running the Asylum,[32] but had used this concept since as early as 1975. Personas are a usability evaluation method that can be used at various design stages. The most typical time to create personas is at the beginning of the design process, so that designers have a tangible idea of who the users of their product will be. Personas are archetypes that represent actual groups of users and their needs, and can take the form of a general description of a person, context, or usage scenario. This technique turns marketing data on the target user population into a few concrete user concepts to create empathy among the design team, with the final aim of tailoring a product more closely to how the personas will use it. To gather the marketing data that personas require, several tools can be used, including online surveys, web analytics, customer feedback forms, usability tests, and interviews with customer-service representatives.[33]

Benefits

The key benefits of usability are:

- Higher revenues through increased sales

- Increased user efficiency and user satisfaction

- Reduced development costs

- Reduced support costs

Corporate integration

An increase in usability generally positively affects several facets of a company's output quality. In particular, the benefits fall into several common areas:[34]

- Increased productivity

- Decreased training and support costs

- Increased sales and revenues

- Reduced development time and costs

- Reduced maintenance costs

- Increased customer satisfaction

Increased usability in the workplace fosters several responses from employees: "Workers who enjoy their work do it better, stay longer in the face of temptation, and contribute ideas and enthusiasm to the evolution of enhanced productivity."[35] To create standards, companies often implement experimental design techniques that create baseline levels. Areas of concern in an office environment include (though are not necessarily limited to):[36]

- Working posture

- Design of workstation furniture

- Screen displays

- Input devices

- Organization issues

- Office environment

- Software interface

By working to improve these factors, corporations can achieve their goals of increased output at lower costs, while potentially creating optimal levels of customer satisfaction. There are numerous reasons why each of these factors correlates with overall improvement. For example, making software user interfaces easier to understand reduces the need for extensive training. The improved interface tends to lower the time needed to perform tasks, and so would both raise productivity levels for employees and reduce development time (and thus costs). The aforementioned factors are not mutually exclusive; rather, they should be understood to work in conjunction to form the overall workplace environment. In the 2010s, usability came to be recognized as an important software quality attribute, earning its place among more traditional attributes such as performance, robustness, and aesthetic appearance. Various academic programs focus on usability.[37] Several usability consultancy companies have emerged, and traditional consultancy and design firms offer similar services.

There is some resistance to integrating usability work in organisations. Usability is seen as a vague concept that is difficult to measure, and other areas are prioritised when IT projects run out of time or money.[38]

Professional development

Usability practitioners are sometimes trained as industrial engineers, psychologists, kinesiologists, or systems design engineers, or hold a degree in information architecture, information or library science, or Human-Computer Interaction (HCI). More often, though, they are people trained in specific applied fields who have taken on a usability focus within their organization. Anyone who aims to make tools easier to use and more effective for their desired function within the context of work or everyday living can benefit from studying usability principles and guidelines. For those seeking to extend their training, the User Experience Professionals' Association (UXPA) offers online resources, reference lists, courses, conferences, and local chapter meetings. The UXPA also sponsors World Usability Day each November.[39] Related professional organizations include the Human Factors and Ergonomics Society (HFES) and the Association for Computing Machinery's special interest groups in Computer Human Interaction (SIGCHI), Design of Communication (SIGDOC), and Computer Graphics and Interactive Techniques (SIGGRAPH). The Society for Technical Communication also has a special interest group on Usability and User Experience (UUX), which publishes a quarterly newsletter called Usability Interface.[40]

See also

- Accessibility

- Chief experience officer (CXO)

- Design for All (inclusion)

- Experience design

- Fitts's law

- Form follows function

- Gemba or customer visit

- GOMS

- Gotcha (programming)

- GUI

- Human factors

- Information architecture

- Interaction design

- Interactive systems engineering

- Internationalization

- Learnability

- List of human-computer interaction topics

- List of system quality attributes

- Machine-Readable Documents

- Natural mapping (interface design)

- Needs analysis

- Non-functional requirement

- RITE method

- System Usability Scale

- Universal usability

- Usability goals

- Usability testing

- Usability engineering

- User experience

- User experience design

- Web usability

- World Usability Day

References

- Lee, Ju Yeon; Kim, Ju Young; You, Seung Ju; Kim, You Soo; Koo, Hye Yeon; Kim, Jeong Hyun; Kim, Sohye; Park, Jung Ha; Han, Jong Soo; Kil, Siye; Kim, Hyerim (2019-09-30). "Development and Usability of a Life-Logging Behavior Monitoring Application for Obese Patients". Journal of Obesity & Metabolic Syndrome. 28 (3): 194–202. doi:10.7570/jomes.2019.28.3.194. ISSN 2508-6235. PMC 6774444. PMID 31583384.

- Ergonomic Requirements for Office Work with Visual Display Terminals, ISO 9241-11, ISO, Geneva, 1998.

- Smith, K Tara (2011). "Needs Analysis: Or, How Do You Capture, Represent, and Validate User Requirements in a Formal Manner/Notation before Design". In Karwowski, W.; Soares, M.M.; Stanton, N.A. (eds.). Human Factors and Ergonomics in Consumer Product Design: Methods and Techniques (Handbook of Human Factors in Consumer Product Design). CRC Press.

- Nielsen, Jakob (4 January 2012). "Usability 101: Introduction to Usability". Nielsen Norman Group. Archived from the original on 1 September 2016. Retrieved 7 August 2016.

- Holm, Ivar (2006). Ideas and Beliefs in Architecture and Industrial design: How attitudes, orientations, and underlying assumptions shape the built environment. Oslo School of Architecture and Design. ISBN 82-547-0174-1.

- Nielsen, Jakob; Norman, Donald A. (14 January 2000). "Web-Site Usability: Usability On The Web Isn't A Luxury". JND.org. Archived from the original on 28 March 2015.

- Tuch, Alexandre N.; Presslaber, Eva E.; Stöcklin, Markus; Opwis, Klaus; Bargas-Avila, Javier A. (2012-11-01). "The role of visual complexity and prototypicality regarding first impression of websites: Working towards understanding aesthetic judgments". International Journal of Human-Computer Studies. 70 (11): 794–811. doi:10.1016/j.ijhcs.2012.06.003. ISSN 1071-5819.

- Usability 101: Introduction to Usability Archived 2011-04-08 at the Wayback Machine, Jakob Nielsen's Alertbox. Retrieved 2010-06-01

- Intuitive equals familiar Archived 2009-10-05 at the Wayback Machine, Communications of the ACM. 37:9, September 1994, pg. 17.

- Intuitive Interaction: Research and Application. Alethea Blackler. Boca Raton, FL. 2018. ISBN 978-1-315-16714-5. OCLC 1044734346.

- Ullrich, Daniel; Diefenbach, Sarah (2010-10-16). "From magical experience to effortlessness: an exploration of the components of intuitive interaction". Proceedings of the 6th Nordic Conference on Human-Computer Interaction: Extending Boundaries. NordiCHI '10. New York, NY, USA: Association for Computing Machinery: 801–804. doi:10.1145/1868914.1869033. ISBN 978-1-60558-934-3. S2CID 5378990.

- Lawry, Simon; Popovic, Vesna; Blackler, Alethea; Thompson, Helen (January 2019). "Age, familiarity, and intuitive use: An empirical investigation". Applied Ergonomics. 74: 74–84. doi:10.1016/j.apergo.2018.08.016. PMID 30487112. S2CID 54105210.

- Blackler, Alethea; Chen, Li-Hao; Desai, Shital; Astell, Arlene (2020), Brankaert, Rens; Kenning, Gail (eds.), "Intuitive Interaction Framework in User-Product Interaction for People Living with Dementia", HCI and Design in the Context of Dementia, Cham: Springer International Publishing, pp. 147–169, doi:10.1007/978-3-030-32835-1_10, ISBN 978-3-030-32834-4, S2CID 220794844, retrieved 2022-10-24

- Desai, Shital; Blackler, Alethea; Popovic, Vesna (2019-09-01). "Children's embodied intuitive interaction – Design aspects of embodiment". International Journal of Child-Computer Interaction. 21: 89–103. doi:10.1016/j.ijcci.2019.06.001. ISSN 2212-8689. S2CID 197709773.

- Hurtienne, J.; Klockner, K.; Diefenbach, S.; Nass, C.; Maier, A. (2015-05-01). "Designing with Image Schemas: Resolving the Tension Between Innovation, Inclusion and Intuitive Use". Interacting with Computers. 27 (3): 235–255. doi:10.1093/iwc/iwu049. ISSN 0953-5438.

- Kettlewell, Richard. "The Only Intuitive Interface Is The Nipple". Greenend.org.uk. Archived from the original on 2012-01-30. Retrieved 2013-11-01.

- Tognazzini, B. (1992), Tog on Interface, Boston, MA: Addison-Wesley, p. 246.

- "ISO 9241". 1992. Archived from the original on 2012-01-12.

- "ISO 9241-10:1996". International Organization for Standardization. Archived from the original on 26 July 2011. Retrieved 22 July 2011.

- Gould, J.D., Lewis, C.: "Designing for Usability: Key Principles and What Designers Think", Communications of the ACM, March 1985, 28(3)

- Archived November 27, 2010, at the Wayback Machine

- Card, S.K., Moran, T.P., & Newell, A. (1983). The psychology of human-computer interaction. Hillsdale, NJ: Lawrence Erlbaum Associates.

- "Memory Recognition and Recall in User Interfaces". www.nngroup.com. Archived from the original on 2017-01-05. Retrieved 2017-01-04.

- Short, Eden Jayne; Reay, Stephen; Gilderdale, Peter (2017-07-28). "Wayfinding for health seeking: Exploring how hospital wayfinding can employ communication design to improve the outpatient experience". The Design Journal. 20 (sup1): S2551–S2568. doi:10.1080/14606925.2017.1352767. ISSN 1460-6925.

- Wickens, C.D. et al. (2004). An Introduction to Human Factors Engineering (2nd ed.), Pearson Education, Inc., Upper Saddle River, NJ: Prentice Hall.

- Wilson, James; Rosenberg, Daniel (1988-01-01), Helander, Martin (ed.), "Chapter 39 - Rapid Prototyping for User Interface Design", Handbook of Human-Computer Interaction, North-Holland, pp. 859–875, doi:10.1016/b978-0-444-70536-5.50044-0, ISBN 978-0-444-70536-5, retrieved 2020-04-02

- Dumas, J.S. and Redish, J.C. (1999). A Practical Guide to Usability Testing (revised ed.), Bristol, U.K.: Intellect Books.

- Kuniavsky, M. (2003). Observing the User Experience: A Practitioner's Guide to User Research, San Francisco, CA: Morgan Kaufmann.

- Georgsson, Mattias; Staggers, Nancy (January 2016). "Quantifying usability: an evaluation of a diabetes mHealth system on effectiveness, efficiency, and satisfaction metrics with associated user characteristics". Journal of the American Medical Informatics Association. 23 (1): 5–11. doi:10.1093/jamia/ocv099. ISSN 1067-5027. PMC 4713903. PMID 26377990.

- Medlock, M.C., Wixon, D., Terrano, M., Romero, R., and Fulton, B. (2002). Using the RITE method to improve products: A definition and a case study. Presented at the Usability Professionals Association 2002, Orlando FL.

- "#27 – The art of usability benchmarking". Scottberkun.com. 2010-04-16. Archived from the original on 2013-11-04. Retrieved 2013-11-01.

- Cooper, A. (1999). The Inmates Are Running the Asylum, Sams Publishers, ISBN 0-672-31649-8

- "How I Built 4 Personas For My SEO Site". Seoroi.com. Archived from the original on 2013-11-03. Retrieved 2013-11-01.

- "Usability Resources: Usability in the Real World: Business Benefits". Usabilityprofessionals.org. Archived from the original on 2013-10-31. Retrieved 2013-11-01.

- Landauer, T. K. (1996). The Trouble with Computers. Cambridge, MA: The MIT Press. p. 158.

- McKeown, Celine (2008). Office ergonomics: practical applications. Boca Raton, FL, Taylor & Francis Group, LLC.

- Usability at Curlie

- Cajander, Åsa (2010), Usability - who cares? : the introduction of user-centred systems design in organisations, Acta Universitatis Upsaliensis, ISBN 9789155477974, OCLC 652387306

- "UXPA - The User Experience Professionals Association". Usabilityprofessionals.org. 2013-03-31. Archived from the original on 2013-10-21. Retrieved 2013-11-01.

- "STC Usability Interface - Newsletter Home Page". Stcsig.org. Archived from the original on 2013-10-23. Retrieved 2013-11-01.

Further reading

- R. G. Bias and D. J. Mayhew (eds) (2005), Cost-Justifying Usability: An Update for the Internet Age, Morgan Kaufmann

- Donald A. Norman (2013), The Design of Everyday Things, Basic Books, ISBN 0-465-07299-2

- Donald A. Norman (2004), Emotional Design: Why we love (or hate) everyday things, Basic Books, ISBN 0-465-05136-7

- Jakob Nielsen (1994), Usability Engineering, Morgan Kaufmann Publishers, ISBN 0-12-518406-9

- Jakob Nielsen (1994), Usability Inspection Methods, John Wiley & Sons, ISBN 0-471-01877-5

- Ben Shneiderman, Software Psychology, 1980, ISBN 0-87626-816-5