DeepDream

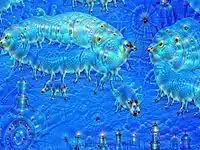

DeepDream is a computer vision program created by Google engineer Alexander Mordvintsev that uses a convolutional neural network to find and enhance patterns in images via algorithmic pareidolia, thus creating a dream-like appearance reminiscent of a psychedelic experience in the deliberately overprocessed images.[1][2][3]

Google's program popularized the term (deep) "dreaming" to refer to the generation of images that produce desired activations in a trained deep network, and the term now refers to a collection of related approaches.

History

The DeepDream software originated in a deep convolutional network codenamed "Inception" after the film of the same name.[1][2][3] The network was developed for the ImageNet Large-Scale Visual Recognition Challenge (ILSVRC) in 2014,[3] and the software was released in July 2015.

The dreaming idea and name became popular on the internet in 2015 thanks to Google's DeepDream program. The idea dates from early in the history of neural networks,[4] and similar methods have been used to synthesize visual textures.[5] Related visualization ideas were developed (prior to Google's work) by several research groups.[6][7]

After Google published their techniques and made their code open-source,[8] a number of tools in the form of web services, mobile applications, and desktop software appeared on the market to enable users to transform their own photos.[9]

Process

The software is designed to detect faces and other patterns in images, with the aim of automatically classifying images.[10] However, once trained, the network can also be run in reverse: it is asked to adjust the original image slightly so that a given output neuron (e.g. the one for faces or certain animals) yields a higher confidence score. This can be used for visualizations to better understand the emergent structure of the neural network, and is the basis for the DeepDream concept. The reversal procedure is never perfectly clear and unambiguous because it uses a one-to-many mapping process.[11] However, after enough iterations, even imagery initially devoid of the sought features will be adjusted enough that a form of pareidolia results, by which psychedelic and surreal images are generated algorithmically. The optimization resembles backpropagation; however, instead of adjusting the network weights, the weights are held fixed and the input is adjusted.

For example, an existing image can be altered so that it is "more cat-like", and the resulting enhanced image can be again input to the procedure.[2] This usage resembles the activity of looking for animals or other patterns in clouds.
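The procedure described above can be illustrated with a minimal sketch. It is not Google's implementation: the two-neuron "network", its `WEIGHTS`, and the functions `activation`, `input_gradient`, and `dream` are hypothetical names chosen for illustration, and a single tanh layer stands in for a deep convolutional network. The weights stay fixed while the input is nudged repeatedly so that the chosen output neuron responds more strongly.

```python
import math

# Hypothetical fixed "trained" weights: 2 output neurons, 3 input pixels.
WEIGHTS = [[0.5, -0.2, 0.1],
           [-0.3, 0.8, 0.4]]

def activation(image, neuron):
    """Output of one neuron: tanh of a weighted sum of the input pixels."""
    return math.tanh(sum(w * x for w, x in zip(WEIGHTS[neuron], image)))

def input_gradient(image, neuron):
    """Gradient of the neuron's activation with respect to each input pixel."""
    a = activation(image, neuron)
    return [(1.0 - a * a) * w for w in WEIGHTS[neuron]]

def dream(image, neuron, steps=50, step_size=0.1):
    """Gradient ascent on the input; WEIGHTS are never modified."""
    image = list(image)
    for _ in range(steps):
        grad = input_gradient(image, neuron)
        # Each pass feeds the previously enhanced image back into the procedure.
        image = [x + step_size * g for x, g in zip(image, grad)]
    return image

start = [0.0, 0.0, 0.0]          # input with none of the sought features
dreamed = dream(start, neuron=1)
print(activation(start, 1), "->", activation(dreamed, 1))
```

Even though the starting input contains nothing the neuron responds to, repeated small adjustments raise its activation, which is the toy analogue of features appearing in an image that initially lacked them.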

Applying gradient descent independently to each pixel of the input produces images in which adjacent pixels have little relation and thus the image has too much high frequency information. The generated images can be greatly improved by including a prior or regularizer that prefers inputs that have natural image statistics (without a preference for any particular image), or are simply smooth.[7][12][13] For example, Mahendran et al.[12] used the total variation regularizer that prefers images that are piecewise constant. Various regularizers are discussed further in Yosinski et al.[13] An in-depth, visual exploration of feature visualization and regularization techniques was published more recently.[14]
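As a rough illustration of why a smoothness prior helps, the sketch below computes a simple total variation penalty. The anisotropic sum-of-absolute-differences form, the function names `total_variation` and `regularized_objective`, and the weight `tv_weight` are assumptions made for this example; the cited papers may use a different formulation.

```python
def total_variation(image):
    """Sum of absolute differences between vertically and horizontally
    adjacent pixels of a 2-D image given as a list of rows."""
    rows, cols = len(image), len(image[0])
    tv = 0.0
    for r in range(rows):
        for c in range(cols):
            if r + 1 < rows:
                tv += abs(image[r + 1][c] - image[r][c])
            if c + 1 < cols:
                tv += abs(image[r][c + 1] - image[r][c])
    return tv

def regularized_objective(activation_value, image, tv_weight=0.1):
    """Quantity to maximize: the neuron's activation minus a smoothness penalty."""
    return activation_value - tv_weight * total_variation(image)

# A piecewise-constant image (two flat halves) versus a checkerboard
# in which every pair of neighboring pixels differs.
piecewise_constant = [[0, 0, 1, 1] for _ in range(4)]
checkerboard = [[(r + c) % 2 for c in range(4)] for r in range(4)]

print(total_variation(piecewise_constant))  # 4.0
print(total_variation(checkerboard))        # 24.0
```

For the same activation, the regularized objective scores the piecewise-constant image higher than the checkerboard, so an optimizer using it is steered away from high-frequency pixel noise.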

The cited resemblance of the imagery to LSD- and psilocybin-induced hallucinations is suggestive of a functional resemblance between artificial neural networks and particular layers of the visual cortex.[15]

Neural networks such as DeepDream have biological analogies that provide insight into brain processing and the formation of consciousness. Hallucinogens such as DMT alter the function of the serotonergic system, which is present within the layers of the visual cortex. Neural networks are trained on input vectors and are altered by internal variations during the training process. The input and the internal modifications represent the processing of exogenous and endogenous signals, respectively, in the visual cortex. As internal variations are modified in deep neural networks, the output image reflects these changes. This specific manipulation demonstrates how inner brain mechanisms are analogous to internal layers of neural networks. Modifications of the internal noise level represent how hallucinogens omit external sensory information, leading internal preconceived conceptions to strongly influence visual perception.[16]

Usage

The dreaming idea can be applied to hidden (internal) neurons other than those in the output, which allows exploration of the roles and representations of various parts of the network.[13] It is also possible to optimize the input to satisfy either a single neuron (this usage is sometimes called activation maximization)[17] or an entire layer of neurons.
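The difference between targeting one neuron and targeting a whole layer can be sketched as two objective functions over the same hidden layer. Everything here is an illustrative assumption: the tiny ReLU layer, its `HIDDEN_WEIGHTS`, the half-sum-of-squares layer objective, and the finite-difference gradient used in place of backpropagation.

```python
# Hypothetical fixed weights of a hidden layer: 3 neurons, 3 input pixels.
HIDDEN_WEIGHTS = [[0.6, -0.4, 0.2],
                  [0.1, 0.7, -0.3],
                  [-0.5, 0.2, 0.9]]

def hidden_layer(image):
    """ReLU activations of the hidden layer for a flat list of pixels."""
    return [max(0.0, sum(w * x for w, x in zip(row, image)))
            for row in HIDDEN_WEIGHTS]

def single_neuron_objective(image, index=0):
    """Objective that rewards only one hidden neuron."""
    return hidden_layer(image)[index]

def layer_objective(image):
    """Objective that rewards the whole layer: half the sum of squared activations."""
    return 0.5 * sum(a * a for a in hidden_layer(image))

def numerical_gradient(objective, image, eps=1e-6):
    """Central-difference estimate of d(objective)/d(pixel) for every pixel."""
    grad = []
    for i in range(len(image)):
        up, down = list(image), list(image)
        up[i] += eps
        down[i] -= eps
        grad.append((objective(up) - objective(down)) / (2 * eps))
    return grad

def ascend(objective, image, steps=20, step_size=0.05):
    """Gradient ascent on the input with the layer weights held fixed."""
    image = list(image)
    for _ in range(steps):
        grad = numerical_gradient(objective, image)
        image = [x + step_size * g for x, g in zip(image, grad)]
    return image

start = [0.2, 0.3, 0.1]
for_neuron = ascend(single_neuron_objective, start)
for_layer = ascend(layer_objective, start)
print(hidden_layer(for_neuron))  # mainly neuron 0 is pushed up
print(hidden_layer(for_layer))   # whatever the layer already detects is amplified
```

The layer objective amplifies whichever neurons already respond to the input, whereas the single-neuron objective pushes the input toward one chosen feature regardless of what the image contains.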

While dreaming is most often used for visualizing networks or producing computer art, it has been proposed that adding "dreamed" inputs to the training set can improve training times for abstractions in computer science.[18]

The DeepDream model has also been demonstrated to have application in the field of art history.[19]

DeepDream was used for Foster the People's music video for the song "Doing It for the Money".[20]

In 2017, a research group out of the University of Sussex created a Hallucination Machine, applying the DeepDream algorithm to a pre-recorded panoramic video, allowing users to explore virtual reality environments to mimic the experience of psychoactive substances and/or psychopathological conditions.[21] They were able to demonstrate that the subjective experiences induced by the Hallucination Machine differed significantly from control (non-‘hallucinogenic’) videos, while bearing phenomenological similarities to the psychedelic state (following administration of psilocybin).

In 2021, a study published in the journal Entropy presented neuroscientific evidence of the similarity between DeepDream and actual psychedelic experience.[22] The authors recorded electroencephalography (EEG) data from human participants while they passively viewed a movie clip and its DeepDream-generated counterpart. They found that the DeepDream video triggered higher entropy in the EEG signal and a higher level of functional connectivity between brain areas,[22] both well-known biomarkers of actual psychedelic experience.[23]

In 2022, a research group coordinated by the University of Trento "measure[d] participants’ cognitive flexibility and creativity after the exposure to virtual reality panoramic videos and their hallucinatory-like counterparts generated by the DeepDream algorithm ... following the simulated psychedelic exposure, individuals exhibited ... an attenuated contribution of the automatic process and chaotic dynamics underlying their decision processes, presumably due to a reorganization in the cognitive dynamics that facilitates the exploration of uncommon decision strategies and inhibits automated choices."[24]

References

- Mordvintsev, Alexander; Olah, Christopher; Tyka, Mike (2015). "DeepDream - a code example for visualizing Neural Networks". Google Research. Archived from the original on 2015-07-08.

- Mordvintsev, Alexander; Olah, Christopher; Tyka, Mike (2015). "Inceptionism: Going Deeper into Neural Networks". Google Research. Archived from the original on 2015-07-03.

- Szegedy, Christian; Liu, Wei; Jia, Yangqing; Sermanet, Pierre; Reed, Scott E.; Anguelov, Dragomir; Erhan, Dumitru; Vanhoucke, Vincent; Rabinovich, Andrew (2015). "Going deeper with convolutions". IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2015, Boston, MA, USA, June 7–12, 2015. IEEE Computer Society. pp. 1–9. arXiv:1409.4842. doi:10.1109/CVPR.2015.7298594.

- Lewis, J.P. (1988). "Creation by refinement: a creativity paradigm for gradient descent learning networks". IEEE International Conference on Neural Networks. pp. 229–233 vol.2. doi:10.1109/ICNN.1988.23933. ISBN 0-7803-0999-5.

- Portilla, J; Simoncelli, Eero (2000). "A parametric texture model based on joint statistics of complex wavelet coefficients". International Journal of Computer Vision. 40: 49–70. doi:10.1023/A:1026553619983. S2CID 2475577.

- Erhan, Dumitru (2009). Visualizing Higher-Layer Features of a Deep Network. International Conference on Machine Learning Workshop on Learning Feature Hierarchies. S2CID 15127402.

- Simonyan, Karen; Vedaldi, Andrea; Zisserman, Andrew (2014). Deep Inside Convolutional Networks: Visualising Image Classification Models and Saliency Maps. International Conference on Learning Representations Workshop. arXiv:1312.6034.

- deepdream on GitHub

- Daniel Culpan (2015-07-03). "These Google "Deep Dream" Images Are Weirdly Mesmerising". Wired. Retrieved 2015-07-25.

- Rich McCormick (7 July 2015). "Fear and Loathing in Las Vegas is terrifying through the eyes of a computer". The Verge. Retrieved 2015-07-25.

- Hayes, Brian (2015). "Computer Vision and Computer Hallucinations". American Scientist. 103 (6): 380. doi:10.1511/2015.117.380. ISSN 0003-0996.

- Mahendran, Aravindh; Vedaldi, Andrea (2015). "Understanding Deep Image Representations by Inverting Them". 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR). pp. 5188–5196. arXiv:1412.0035. doi:10.1109/CVPR.2015.7299155. ISBN 978-1-4673-6964-0.

- Yosinski, Jason; Clune, Jeff; Nguyen, Anh; Fuchs, Thomas (2015). Understanding Neural Networks Through Deep Visualization. Deep Learning Workshop, International Conference on Machine Learning (ICML). arXiv:1506.06579.

- Olah, Chris; Mordvintsev, Alexander; Schubert, Ludwig (2017-11-07). "Feature Visualization". Distill. 2 (11). doi:10.23915/distill.00007. ISSN 2476-0757.

- LaFrance, Adrienne (2015-09-03). "When Robots Hallucinate". The Atlantic. Retrieved 24 September 2015.

- Timmermann, Christopher (2020-12-12). "Neural Network Models for DMT-induced Visual Hallucinations". Neuroscience of Consciousness. NIH. 2020 (1): niaa024. doi:10.1093/nc/niaa024. PMC 7734438. PMID 33343929.

- Nguyen, Anh; Dosovitskiy, Alexey; Yosinski, Jason; Brox, Thomas (2016). Synthesizing the preferred inputs for neurons in neural networks via deep generator networks. arxiv. arXiv:1605.09304. Bibcode:2016arXiv160509304N.

- Arora, Sanjeev; Liang, Yingyu; Ma, Tengyu (2016). Why are deep nets reversible: A simple theory, with implications for training. arxiv. arXiv:1511.05653. Bibcode:2015arXiv151105653A.

- Spratt, Emily L. (2017). "Dream Formulations and Deep Neural Networks: Humanistic Themes in the Iconology of the Machine-Learned Image" (PDF). Kunsttexte. Humboldt-Universität zu Berlin. 4. arXiv:1802.01274. Bibcode:2018arXiv180201274S.

- fosterthepeopleVEVO (2017-08-11), Foster The People - Doing It for the Money, retrieved 2017-08-15

- Suzuki, Keisuke (22 November 2017). "A Deep-Dream Virtual Reality Platform for Studying Altered Perceptual Phenomenology". Sci Rep. 7 (1): 15982. Bibcode:2017NatSR...715982S. doi:10.1038/s41598-017-16316-2. PMC 5700081. PMID 29167538.

- Greco, Antonino; Gallitto, Giuseppe; D’Alessandro, Marco; Rastelli, Clara (July 2021). "Increased Entropic Brain Dynamics during DeepDream-Induced Altered Perceptual Phenomenology". Entropy. 23 (7): 839. Bibcode:2021Entrp..23..839G. doi:10.3390/e23070839. ISSN 1099-4300. PMC 8306862. PMID 34208923.

- Carhart-Harris, Robin; Leech, Robert; Hellyer, Peter; Shanahan, Murray; Feilding, Amanda; Tagliazucchi, Enzo; Chialvo, Dante; Nutt, David (2014). "The entropic brain: a theory of conscious states informed by neuroimaging research with psychedelic drugs". Frontiers in Human Neuroscience. 8: 20. doi:10.3389/fnhum.2014.00020. ISSN 1662-5161. PMC 3909994. PMID 24550805.

- Rastelli, Clara; Greco, Antonino; Kennett, Yoed; Finocchiaro, Chiara; De Pisapia, Nicola (7 March 2022). "Simulated visual hallucinations in virtual reality enhance cognitive flexibility". Sci Rep. 12 (1): 4027. Bibcode:2022NatSR..12.4027R. doi:10.1038/s41598-022-08047-w. PMC 8901713. PMID 35256740.

External links

- Deep Dream, python notebook on GitHub

- Mordvintsev, Alexander; Olah, Christopher; Tyka, Mike (June 17, 2015). "Inceptionism: Going Deeper into Neural Networks". Archived from the original on 2015-07-03.