Inner product space

In mathematics, an inner product space (or, rarely, a Hausdorff pre-Hilbert space[1][2]) is a real vector space or a complex vector space with an operation called an inner product. The inner product of two vectors in the space is a scalar, often denoted with angle brackets such as in $\langle a, b \rangle$. Inner products allow formal definitions of intuitive geometric notions, such as lengths, angles, and orthogonality (zero inner product) of vectors. Inner product spaces generalize Euclidean vector spaces, in which the inner product is the dot product or scalar product of Cartesian coordinates. Inner product spaces of infinite dimension are widely used in functional analysis. Inner product spaces over the field of complex numbers are sometimes referred to as unitary spaces. The first usage of the concept of a vector space with an inner product is due to Giuseppe Peano, in 1898.[3]

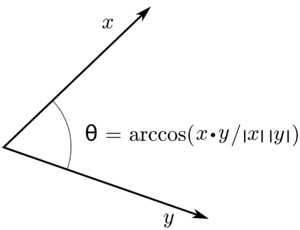

An inner product naturally induces an associated norm (denoted $|x|$ and $|y|$ in the picture); so, every inner product space is a normed vector space. If this normed space is also complete (that is, a Banach space) then the inner product space is a Hilbert space.[1] If an inner product space $H$ is not a Hilbert space, it can be extended by completion to a Hilbert space $\overline{H}.$ This means that $H$ is a linear subspace of $\overline{H},$ the inner product of $H$ is the restriction of that of $\overline{H},$ and $H$ is dense in $\overline{H}$ for the topology defined by the norm.[1][4]

Definition

In this article, $F$ denotes a field that is either the real numbers $\mathbb{R}$ or the complex numbers $\mathbb{C}.$ A scalar is thus an element of $F$. A bar over an expression representing a scalar denotes the complex conjugate of this scalar. A zero vector is denoted $\mathbf{0}$ for distinguishing it from the scalar $0$.

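An inner product space is a vector space $V$ over the field $F$ together with an inner product, that is, a map

$$\langle \cdot, \cdot \rangle : V \times V \to F$$

that satisfies the following three properties for all vectors $x, y, z \in V$ and all scalars $a, b \in F$.[5][6]

- Conjugate symmetry: $\langle x, y \rangle = \overline{\langle y, x \rangle}.$ As $a = \overline{a}$ if and only if $a$ is real, conjugate symmetry implies that $\langle x, x \rangle$ is always a real number. If $F$ is $\mathbb{R}$, conjugate symmetry is just symmetry.

- Linearity in the first argument:[Note 1] $\langle ax + by, z \rangle = a \langle x, z \rangle + b \langle y, z \rangle.$

- Positive-definiteness: if $x$ is not zero, then $\langle x, x \rangle > 0$ (conjugate symmetry implies that $\langle x, x \rangle$ is real).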

If the positive-definiteness condition is replaced by merely requiring that $\langle x, x \rangle \geq 0$ for all $x$, then one obtains the definition of a positive semi-definite Hermitian form. A positive semi-definite Hermitian form $\langle \cdot, \cdot \rangle$ is an inner product if and only if for all $x$, if $\langle x, x \rangle = 0$ then $x = \mathbf{0}$.[7]
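As a quick numerical illustration, the three axioms can be spot-checked for the standard inner product on $\mathbb{C}^3$, taken here to be $\langle x, y \rangle = \sum_i x_i \overline{y_i}$ (linear in the first argument). This is a minimal sketch: the helper names `inner` and `rand_vec` are illustrative assumptions, and random spot checks illustrate the axioms rather than prove them.

```python
import random

def inner(x, y):
    """Standard inner product on C^n: linear in x, conjugate-linear in y."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def rand_vec(n, rng):
    """Random complex vector with entries in the square [-1, 1] x [-1, 1]."""
    return [complex(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(n)]

rng = random.Random(0)
x, y, z = (rand_vec(3, rng) for _ in range(3))
a, b = complex(2, -1), complex(0.5, 3)

# Conjugate symmetry: <x, y> equals the conjugate of <y, x>.
sym_err = abs(inner(x, y) - inner(y, x).conjugate())

# Linearity in the first argument: <ax + by, z> = a<x, z> + b<y, z>.
lhs = inner([a * xi + b * yi for xi, yi in zip(x, y)], z)
rhs = a * inner(x, z) + b * inner(y, z)
lin_err = abs(lhs - rhs)

# Positive-definiteness: <x, x> is real and positive for nonzero x.
pos = inner(x, x)

print(sym_err, lin_err, pos)
```

Both error terms should be at the level of floating-point rounding, and `pos` should have a positive real part and a vanishing imaginary part.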

Basic properties

In the following properties, which result almost immediately from the definition of an inner product, x, y and z are arbitrary vectors, and a and b are arbitrary scalars.

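- $\langle x, x \rangle$ is real and nonnegative.

- $\langle x, x \rangle = 0$ if and only if $x = \mathbf{0}.$

- $\langle x, ay + bz \rangle = \overline{a} \langle x, y \rangle + \overline{b} \langle x, z \rangle.$ This implies that an inner product is a sesquilinear form.

- $\langle x + y, x + y \rangle = \langle x, x \rangle + 2 \operatorname{Re}(\langle x, y \rangle) + \langle y, y \rangle,$ where $\operatorname{Re}$ denotes the real part of its argument.

Over $\mathbb{R}$, conjugate symmetry reduces to symmetry, and sesquilinearity reduces to bilinearity. Hence an inner product on a real vector space is a positive-definite symmetric bilinear form. The binomial expansion of a square becomes

$$\langle x + y, x + y \rangle = \langle x, x \rangle + 2 \langle x, y \rangle + \langle y, y \rangle.$$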

Convention variant

Some authors, especially in physics and matrix algebra, prefer to define inner products and sesquilinear forms with linearity in the second argument rather than the first. Then the first argument becomes conjugate linear, rather than the second.

Some examples

Real and complex numbers

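Among the simplest examples of inner product spaces are $\mathbb{R}$ and $\mathbb{C}.$ The real numbers $\mathbb{R}$ are a vector space over $\mathbb{R}$ that becomes an inner product space with arithmetic multiplication as its inner product:

$$\langle x, y \rangle := xy \quad \text{for } x, y \in \mathbb{R}.$$

The complex numbers $\mathbb{C}$ are a vector space over $\mathbb{C}$ that becomes an inner product space with the inner product

$$\langle x, y \rangle := x \overline{y} \quad \text{for } x, y \in \mathbb{C}.$$

Unlike with the real numbers, the assignment $(x, y) \mapsto xy$ does not define a complex inner product on $\mathbb{C}.$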

Euclidean vector space

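More generally, the real $n$-space $\mathbb{R}^n$ with the dot product is an inner product space, an example of a Euclidean vector space.

$$\left\langle \begin{bmatrix} x_1 \\ \vdots \\ x_n \end{bmatrix}, \begin{bmatrix} y_1 \\ \vdots \\ y_n \end{bmatrix} \right\rangle = x^{\mathsf{T}} y = \sum_{i=1}^{n} x_i y_i = x_1 y_1 + \cdots + x_n y_n,$$

where $x^{\mathsf{T}}$ is the transpose of $x.$

A function $\langle \cdot, \cdot \rangle : \mathbb{R}^n \times \mathbb{R}^n \to \mathbb{R}$ is an inner product on $\mathbb{R}^n$ if and only if there exists a symmetric positive-definite matrix $M$ such that $\langle x, y \rangle = x^{\mathsf{T}} M y$ for all $x, y \in \mathbb{R}^n.$ If $M$ is the identity matrix then $\langle x, y \rangle = x^{\mathsf{T}} M y$ is the dot product. For another example, if $n = 2$ and $M = \begin{bmatrix} a & b \\ b & d \end{bmatrix}$ is positive-definite (which happens if and only if $\det M = ad - b^2 > 0$ and one/both diagonal elements are positive) then for any $x := [x_1, x_2]^{\mathsf{T}}, y := [y_1, y_2]^{\mathsf{T}} \in \mathbb{R}^2,$

$$\langle x, y \rangle := x^{\mathsf{T}} M y = a x_1 y_1 + b x_1 y_2 + b x_2 y_1 + d x_2 y_2.$$

As mentioned earlier, every inner product on $\mathbb{R}^2$ is of this form (where $b \in \mathbb{R},$ $a > 0$ and $d > 0$ satisfy $ad > b^2$).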
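The two-dimensional case can be sketched in a few lines of code. The example below assumes a symmetric matrix $M$ with rows $(a, b)$ and $(b, d)$, tests positive-definiteness through the conditions $a > 0$ and $ad - b^2 > 0$, and evaluates $x^{\mathsf{T}} M y$ in expanded form. The function names and the sample numbers are illustrative assumptions.

```python
def is_positive_definite_2x2(a, b, d):
    """Check positive-definiteness of the symmetric matrix [[a, b], [b, d]]."""
    return a > 0 and a * d - b * b > 0

def inner_M(x, y, a, b, d):
    """Evaluate x^T M y for M = [[a, b], [b, d]] and x, y in R^2."""
    return (a * x[0] * y[0] + b * x[0] * y[1]
            + b * x[1] * y[0] + d * x[1] * y[1])

a, b, d = 2.0, 1.0, 3.0          # sample positive-definite matrix
x, y = (1.0, -2.0), (4.0, 0.5)   # sample vectors

print(is_positive_definite_2x2(a, b, d))  # True
print(inner_M(x, y, a, b, d))             # -2.5
print(inner_M(x, x, a, b, d))             # 10.0
print(inner_M(x, y, 1.0, 0.0, 1.0))       # 3.0, the ordinary dot product
```

With the identity matrix the function reduces to the dot product, and a matrix such as rows $(1, 2)$ and $(2, 1)$ is rejected because its determinant is negative.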

Complex coordinate space

The general form of an inner product on $\mathbb{C}^n$ is known as the Hermitian form and is given by

$$\langle x, y \rangle = y^{\dagger} M x = \overline{x^{\dagger} M y},$$

where $M$ is any Hermitian positive-definite matrix and $y^{\dagger}$ is the conjugate transpose of $y.$ For the real case, this corresponds to the dot product of the results of directionally-different scaling of the two vectors, with positive scale factors and orthogonal directions of scaling. It is a weighted-sum version of the dot product with positive weights, up to an orthogonal transformation.

Hilbert space

The article on Hilbert spaces has several examples of inner product spaces, wherein the metric induced by the inner product yields a complete metric space. An example of an inner product space which induces an incomplete metric is the space $C([a, b])$ of continuous complex valued functions $f$ and $g$ on the interval $[a, b].$ The inner product is

$$\langle f, g \rangle = \int_a^b f(t) \overline{g(t)} \, \mathrm{d}t.$$

This space is not complete; consider, for example, for the interval $[-1, 1]$ the sequence of continuous "step" functions $\{f_k\}_k$ defined by:

$$f_k(t) = \begin{cases} 0 & t \in [-1, 0] \\ kt & t \in (0, \tfrac{1}{k}) \\ 1 & t \in [\tfrac{1}{k}, 1] \end{cases}$$

This sequence is a Cauchy sequence for the norm induced by the preceding inner product, but it does not converge to a continuous function.
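The Cauchy property can be checked by a direct computation. The sketch below assumes the usual form of these functions on $[-1, 1]$: $f_k(t) = 0$ for $t \leq 0$, $f_k(t) = kt$ for $0 < t < 1/k$, and $f_k(t) = 1$ for $t \geq 1/k$. For indices $j > k$ the two functions differ only on $(0, 1/k)$:

```latex
\begin{aligned}
\|f_j - f_k\|^2
  &= \int_0^{1/j} (j - k)^2 t^2 \, \mathrm{d}t
   + \int_{1/j}^{1/k} (1 - kt)^2 \, \mathrm{d}t \\
  &= \frac{(j - k)^2}{3 j^3} + \frac{(j - k)^3}{3 k j^3}
   = \frac{(j - k)^2}{3 k j^2}
   \le \frac{1}{3k}.
\end{aligned}
```

The bound tends to $0$ as $k \to \infty$, so the sequence is Cauchy. Its pointwise limit equals $0$ on $[-1, 0]$ and $1$ on $(0, 1]$, which is discontinuous at $0$.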

Random variables

For real random variables $X$ and $Y,$ the expected value of their product

$$\langle X, Y \rangle = \mathbb{E}[XY]$$

is an inner product.[8][9][10] In this case, $\langle X, X \rangle = 0$ if and only if $\mathbb{P}[X = 0] = 1$ (that is, $X = 0$ almost surely), where $\mathbb{P}$ denotes the probability of the event. This definition of expectation as inner product can be extended to random vectors as well.
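The "almost surely" qualification can be made concrete on a finite sample space. The sketch below assumes three outcomes with probabilities `p` and represents each random variable as a list of its values on those outcomes, so that the expected value of a product is a weighted sum; all names and numbers are illustrative assumptions.

```python
def expectation_inner(X, Y, p):
    """E[XY] on a finite sample space with outcome probabilities p."""
    return sum(pi * xi * yi for pi, xi, yi in zip(p, X, Y))

p = [0.5, 0.5, 0.0]   # the third outcome has probability zero
X = [0.0, 0.0, 7.0]   # nonzero only on the probability-zero outcome
Y = [1.0, -1.0, 2.0]
Z = [2.0, 2.0, 0.0]

print(expectation_inner(X, X, p))  # 0.0 although X is not the zero function
print(expectation_inner(Y, Z, p))  # 0.0, so Y and Z are orthogonal
print(expectation_inner(Y, Y, p))  # 1.0
```

Here `X` has zero "length" without being identically zero, which is why random variables that agree almost surely are identified when this pairing is treated as an inner product.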

Complex matrices

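The inner product for complex square matrices of the same size is the Frobenius inner product $\langle A, B \rangle := \operatorname{tr}(A B^{\dagger}).$ Since trace and transposition are linear and the conjugation is on the second matrix, it is a sesquilinear operator. We further get Hermitian symmetry by

$$\langle A, B \rangle = \operatorname{tr}(A B^{\dagger}) = \overline{\operatorname{tr}(B A^{\dagger})} = \overline{\langle B, A \rangle}.$$

Finally, since for $A$ nonzero, $\langle A, A \rangle = \sum_{i,j} |A_{ij}|^2 > 0,$ we get that the Frobenius inner product is positive definite too, and so is an inner product.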
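A small sketch can confirm that the trace formula $\operatorname{tr}(A B^{\dagger})$ agrees with the entrywise sum $\sum_{i,j} A_{ij} \overline{B_{ij}}$. Matrices are represented as nested lists of complex numbers; the helper names and the sample matrices are illustrative assumptions.

```python
def conj_transpose(B):
    """Conjugate transpose of a matrix stored as a list of rows."""
    return [[B[i][j].conjugate() for i in range(len(B))]
            for j in range(len(B[0]))]

def matmul(A, B):
    """Plain matrix product of two nested-list matrices."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def trace(A):
    return sum(A[i][i] for i in range(len(A)))

def frobenius(A, B):
    """Frobenius inner product tr(A B^dagger)."""
    return trace(matmul(A, conj_transpose(B)))

def frobenius_entrywise(A, B):
    """Same value computed as the sum of A_ij * conj(B_ij)."""
    return sum(a * b.conjugate()
               for row_a, row_b in zip(A, B) for a, b in zip(row_a, row_b))

A = [[1 + 2j, 0j], [3j, 1 - 1j]]
B = [[2 + 0j, 1j], [1 + 1j, 4 + 0j]]

print(frobenius(A, B))            # (9+3j)
print(frobenius_entrywise(A, B))  # (9+3j)
print(frobenius(A, A))            # (16+0j), the sum of |A_ij|^2
```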

Vector spaces with forms

On an inner product space, or more generally a vector space with a nondegenerate form (hence an isomorphism $V \to V^{*}$), vectors can be sent to covectors (in coordinates, via transpose), so that one can take the inner product and outer product of two vectors, not simply of a vector and a covector.

Basic results, terminology, and definitions

Norm properties

Every inner product space induces a norm, called its canonical norm, that is defined by

$$\|x\| = \sqrt{\langle x, x \rangle}.$$

With this norm, every inner product space becomes a normed vector space.

So, every general property of normed vector spaces applies to inner product spaces. In particular, one has the following properties:

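- Absolute homogeneity: $\|ax\| = |a| \, \|x\|$ for every $x \in V$ and $a \in F$ (this results from $\langle ax, ax \rangle = a \overline{a} \langle x, x \rangle$).

- Triangle inequality: $\|x + y\| \leq \|x\| + \|y\|$ for $x, y \in V.$ These two properties show that one has indeed a norm.

- Cauchy–Schwarz inequality: $|\langle x, y \rangle| \leq \|x\| \, \|y\|$ for every $x, y \in V,$ with equality if and only if $x$ and $y$ are linearly dependent.

- Parallelogram law: $\|x + y\|^2 + \|x - y\|^2 = 2\|x\|^2 + 2\|y\|^2$ for every $x, y \in V.$ The parallelogram law is a necessary and sufficient condition for a norm to be defined by an inner product.

- Polarization identity: $\|x + y\|^2 = \|x\|^2 + \|y\|^2 + 2 \operatorname{Re} \langle x, y \rangle$ for every $x, y \in V.$ The inner product can be retrieved from the norm by the polarization identity, since its imaginary part is the real part of $\langle x, iy \rangle.$

- Ptolemy's inequality: $\|x - y\| \, \|z\| + \|y - z\| \, \|x\| \geq \|x - z\| \, \|y\|$ for every $x, y, z \in V.$ Ptolemy's inequality is a necessary and sufficient condition for a seminorm to be the norm defined by an inner product.[11]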
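Several of these properties can be spot-checked numerically. The sketch below assumes the standard inner product $\langle x, y \rangle = \sum_i x_i \overline{y_i}$ on $\mathbb{C}^2$ with its induced norm $\|x\| = \sqrt{\langle x, x \rangle}$, and evaluates the Cauchy–Schwarz inequality, the triangle inequality, the parallelogram law $\|x + y\|^2 + \|x - y\|^2 = 2\|x\|^2 + 2\|y\|^2$, and the polarization identity $\|x + y\|^2 = \|x\|^2 + \|y\|^2 + 2 \operatorname{Re} \langle x, y \rangle$ for one pair of sample vectors. Helper names and sample vectors are illustrative assumptions.

```python
import math

def inner(x, y):
    """Standard inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def norm(x):
    """Canonical norm induced by the inner product."""
    return math.sqrt(inner(x, x).real)

def add(x, y):
    return [a + b for a, b in zip(x, y)]

def sub(x, y):
    return [a - b for a, b in zip(x, y)]

x = [1 + 1j, 2 - 1j]
y = [0.5j, -1 + 3j]

cauchy_schwarz_holds = abs(inner(x, y)) <= norm(x) * norm(y)
triangle_holds = norm(add(x, y)) <= norm(x) + norm(y)

# Both gaps should vanish up to floating-point rounding.
parallelogram_gap = (norm(add(x, y)) ** 2 + norm(sub(x, y)) ** 2
                     - 2 * norm(x) ** 2 - 2 * norm(y) ** 2)
polarization_gap = (norm(add(x, y)) ** 2
                    - (norm(x) ** 2 + norm(y) ** 2 + 2 * inner(x, y).real))

# The imaginary part of <x, y> equals the real part of <x, iy>.
iy = [1j * b for b in y]
imag_gap = inner(x, y).imag - inner(x, iy).real

print(cauchy_schwarz_holds, triangle_holds)
print(parallelogram_gap, polarization_gap, imag_gap)
```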

Orthogonality

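- Orthogonality: Two vectors $x$ and $y$ are said to be orthogonal, often written $x \perp y,$ if their inner product is zero, that is, if $\langle x, y \rangle = 0.$ This happens if and only if $\|x\| \leq \|x + sy\|$ for all scalars $s,$[12] and if and only if the real-valued function $f(s) := \|x + sy\|^2 - \|x\|^2$ is non-negative. (This is a consequence of the fact that, if $y \neq 0,$ then the scalar $s_0 = -\tfrac{\langle x, y \rangle}{\|y\|^2}$ minimizes $f$ with value $f(s_0) = -\tfrac{|\langle x, y \rangle|^2}{\|y\|^2},$ which is always non-positive.) For a complex inner product space $V$ (but not for a real one), a linear operator $T : V \to V$ is identically $0$ if and only if $x \perp Tx$ for every $x \in V.$[12]

- Orthogonal complement: The orthogonal complement of a subset $C \subseteq V$ is the set $C^{\perp}$ of the vectors that are orthogonal to all elements of $C$; that is, $C^{\perp} := \{ y \in V : \langle y, c \rangle = 0 \text{ for all } c \in C \}.$ This set $C^{\perp}$ is always a closed vector subspace of $V,$ and if the closure $\operatorname{cl}_V C$ of $C$ in $V$ is a vector subspace then $\operatorname{cl}_V C = (C^{\perp})^{\perp}.$

- Pythagorean theorem: If $x$ and $y$ are orthogonal, then $\|x\|^2 + \|y\|^2 = \|x + y\|^2.$ This may be proved by expressing the squared norms in terms of the inner products, using additivity for expanding the right-hand side of the equation. The name Pythagorean theorem arises from the geometric interpretation in Euclidean geometry.

- Parseval's identity: An induction on the Pythagorean theorem yields: if $x_1, \ldots, x_n$ are pairwise orthogonal, then $\sum_{i=1}^{n} \|x_i\|^2 = \left\| \sum_{i=1}^{n} x_i \right\|^2.$

- Angle: When $\langle x, y \rangle$ is a real number then the Cauchy–Schwarz inequality implies that $\tfrac{\langle x, y \rangle}{\|x\| \, \|y\|} \in [-1, 1],$ and thus that $\angle(x, y) = \arccos \tfrac{\langle x, y \rangle}{\|x\| \, \|y\|}$ is a real number. This allows defining the (non-oriented) angle of two vectors in modern definitions of Euclidean geometry in terms of linear algebra. This is also used in data analysis, under the name "cosine similarity", for comparing two vectors of data.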
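For real vectors with the dot product, the angle and the cosine similarity can be sketched as follows. The code assumes nonzero input vectors and clamps the quotient to $[-1, 1]$ before calling `math.acos`, because floating-point rounding can push it slightly outside that interval. The function names are illustrative assumptions.

```python
import math

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def cosine_similarity(x, y):
    """<x, y> / (||x|| ||y||) for nonzero real vectors."""
    return dot(x, y) / (math.sqrt(dot(x, x)) * math.sqrt(dot(y, y)))

def angle(x, y):
    """Non-oriented angle between two nonzero real vectors, in radians."""
    c = max(-1.0, min(1.0, cosine_similarity(x, y)))  # guard against rounding
    return math.acos(c)

print(angle((1.0, 0.0), (0.0, 1.0)))                      # about pi / 2
print(angle((1.0, 0.0), (1.0, 1.0)))                      # about pi / 4
print(cosine_similarity((1.0, 2.0, 3.0), (2.0, 4.0, 6.0)))  # about 1.0
```

Orthogonal vectors give a cosine similarity of $0$, and positively proportional vectors give $1$, independently of their lengths.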

Real and complex parts of inner products

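Suppose that $\langle \cdot, \cdot \rangle$ is an inner product on $V$ (so it is antilinear in its second argument). The polarization identity shows that the real part of the inner product is

$$\operatorname{Re} \langle x, y \rangle = \frac{1}{4} \left( \|x + y\|^2 - \|x - y\|^2 \right).$$

If $V$ is a real vector space then

$$\langle x, y \rangle = \operatorname{Re} \langle x, y \rangle = \frac{1}{4} \left( \|x + y\|^2 - \|x - y\|^2 \right)$$

and the imaginary part (also called the complex part) of $\langle \cdot, \cdot \rangle$ is always $0.$

Assume for the rest of this section that $V$ is a complex vector space. The polarization identity for complex vector spaces shows that

$$\langle x, y \rangle = \frac{1}{4} \left( \|x + y\|^2 - \|x - y\|^2 + i \|x + iy\|^2 - i \|x - iy\|^2 \right) = \operatorname{Re} \langle x, y \rangle + i \operatorname{Re} \langle x, iy \rangle.$$

The map defined by $\langle x \mid y \rangle = \langle y, x \rangle$ for all $x, y \in V$ satisfies the axioms of the inner product except that it is antilinear in its first, rather than its second, argument. The real parts of both $\langle x \mid y \rangle$ and $\langle x, y \rangle$ are equal to $\operatorname{Re} \langle x, y \rangle,$ but the inner products differ in their complex part:

$$\langle x \mid y \rangle = \frac{1}{4} \left( \|x + y\|^2 - \|x - y\|^2 - i \|x + iy\|^2 + i \|x - iy\|^2 \right) = \operatorname{Re} \langle x, y \rangle - i \operatorname{Re} \langle x, iy \rangle.$$

The last equality is similar to the formula expressing a linear functional in terms of its real part.

These formulas show that every complex inner product is completely determined by its real part. Moreover, this real part defines an inner product on $V,$ considered as a real vector space. There is thus a one-to-one correspondence between complex inner products on a complex vector space $V$ and real inner products on $V.$

For example, suppose that $V = \mathbb{C}^n$ for some integer $n > 0.$ When $V$ is considered as a real vector space in the usual way (meaning that it is identified with the $2n$-dimensional real vector space $\mathbb{R}^{2n},$ with each $(a_1 + i b_1, \ldots, a_n + i b_n) \in \mathbb{C}^n$ identified with $(a_1, b_1, \ldots, a_n, b_n) \in \mathbb{R}^{2n}$), then the dot product $x \cdot y = (x_1, \ldots, x_{2n}) \cdot (y_1, \ldots, y_{2n}) := x_1 y_1 + \cdots + x_{2n} y_{2n}$ defines a real inner product on this space. The unique complex inner product $\langle \cdot, \cdot \rangle$ on $V = \mathbb{C}^n$ induced by the dot product is the map that sends $c = (c_1, \ldots, c_n), d = (d_1, \ldots, d_n) \in \mathbb{C}^n$ to $\langle c, d \rangle := c_1 \overline{d_1} + \cdots + c_n \overline{d_n}$ (because the real part of this map is equal to the dot product).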
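The claim that a complex inner product is determined by the norm alone can be illustrated numerically. The sketch below assumes the standard inner product $\langle x, y \rangle = \sum_i x_i \overline{y_i}$ on $\mathbb{C}^n$ and rebuilds it from squared norms only, through the complex polarization identity $\langle x, y \rangle = \tfrac{1}{4} \left( \|x + y\|^2 - \|x - y\|^2 + i \|x + iy\|^2 - i \|x - iy\|^2 \right)$. The helper names are illustrative assumptions.

```python
def inner(x, y):
    """Reference inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def norm_sq(x):
    """Squared norm, computed without using the inner product."""
    return sum(abs(a) ** 2 for a in x)

def comb(x, c, y):
    """The vector x + c*y."""
    return [a + c * b for a, b in zip(x, y)]

def inner_from_norm(x, y):
    """Recover <x, y> from squared norms via the polarization identity."""
    re = norm_sq(comb(x, 1, y)) - norm_sq(comb(x, -1, y))
    im = norm_sq(comb(x, 1j, y)) - norm_sq(comb(x, -1j, y))
    return complex(re, im) / 4

x = [1 + 2j, -3j, 0.5 + 0j]
y = [2 - 1j, 1 + 1j, 4j]

print(inner(x, y))
print(inner_from_norm(x, y))  # agrees with the line above up to rounding
```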

Real vs. complex inner products

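Let $V_{\mathbb{R}}$ denote $V$ considered as a vector space over the real numbers rather than complex numbers. The real part of the complex inner product $\langle x, y \rangle$ is the map $\langle x, y \rangle_{\mathbb{R}} = \operatorname{Re} \langle x, y \rangle : V_{\mathbb{R}} \times V_{\mathbb{R}} \to \mathbb{R},$ which necessarily forms a real inner product on the real vector space $V_{\mathbb{R}}.$ Every inner product on a real vector space is a bilinear and symmetric map.

For example, if $V = \mathbb{C}$ with inner product $\langle x, y \rangle = x \overline{y},$ where $V$ is a vector space over the field $\mathbb{C},$ then $V_{\mathbb{R}} = \mathbb{R}^2$ is a vector space over $\mathbb{R}$ and $\langle x, y \rangle_{\mathbb{R}}$ is the dot product $x \cdot y,$ where $x = a + ib \in V = \mathbb{C}$ is identified with the point $(a, b) \in V_{\mathbb{R}} = \mathbb{R}^2$ (and similarly for $y$); thus the standard inner product $\langle x, y \rangle = x \overline{y}$ on $\mathbb{C}$ is an "extension" of the dot product. Also, had $\langle x, y \rangle$ been instead defined to be the symmetric map $\langle x, y \rangle = xy$ (rather than the usual conjugate symmetric map $\langle x, y \rangle = x \overline{y}$) then its real part $\langle x, y \rangle_{\mathbb{R}}$ would not be the dot product; furthermore, without the complex conjugate, if $x \in \mathbb{C}$ but $x \notin \mathbb{R}$ then $\langle x, x \rangle = x^2 \notin [0, \infty),$ so the assignment $x \mapsto \sqrt{\langle x, x \rangle}$ would not define a norm.

The next examples show that although real and complex inner products have many properties and results in common, they are not entirely interchangeable. For instance, if $\langle x, y \rangle = 0$ then $\langle x, y \rangle_{\mathbb{R}} = 0,$ but the next example shows that the converse is in general not true. Given any $x \in V,$ the vector $ix$ (which is the vector $x$ rotated by 90°) belongs to $V$ and so also belongs to $V_{\mathbb{R}}$ (although scalar multiplication of $x$ by $i = \sqrt{-1}$ is not defined in $V_{\mathbb{R}},$ the vector in $V$ denoted by $ix$ is nevertheless still also an element of $V_{\mathbb{R}}$). For the complex inner product, $\langle x, ix \rangle = -i \|x\|^2,$ whereas for the real inner product the value is always $\langle x, ix \rangle_{\mathbb{R}} = 0.$

If $\langle \cdot, \cdot \rangle$ is a complex inner product and $A : V \to V$ is a continuous linear operator that satisfies $\langle x, Ax \rangle = 0$ for all $x \in V,$ then $A = 0.$ This statement is no longer true if $\langle \cdot, \cdot \rangle$ is instead a real inner product, as this next example shows. Suppose that $V = \mathbb{C}$ has the inner product $\langle x, y \rangle := x \overline{y}$ mentioned above. Then the map $A : V \to V$ defined by $Ax = ix$ is a linear map (linear for both $V$ and $V_{\mathbb{R}}$) that denotes rotation by 90° in the plane. Because $x$ and $Ax$ are perpendicular vectors and $\langle x, Ax \rangle_{\mathbb{R}}$ is just the dot product, $\langle x, Ax \rangle_{\mathbb{R}} = 0$ for all vectors $x$; nevertheless, this rotation map $A$ is certainly not identically $0.$ In contrast, using the complex inner product gives $\langle x, Ax \rangle = -i \|x\|^2,$ which (as expected) is not identically zero.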
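The rotation example can be replayed with ordinary complex arithmetic. The sketch below assumes $V = \mathbb{C}$ with $\langle x, y \rangle = x \overline{y},$ takes the real inner product to be its real part, and uses the map $x \mapsto ix$; the function names and the sample value are illustrative assumptions.

```python
def inner_c(x, y):
    """Complex inner product on C: <x, y> = x * conj(y)."""
    return x * y.conjugate()

def inner_r(x, y):
    """Real inner product on C viewed as R^2: the real part of <x, y>."""
    return inner_c(x, y).real

def rotate(x):
    """Rotation by 90 degrees, i.e. multiplication by i."""
    return 1j * x

x = 3 + 4j
print(inner_r(x, rotate(x)))  # 0.0: x and ix are perpendicular in R^2
print(inner_c(x, rotate(x)))  # -25j, that is -i * |x|^2
```

The real inner product cannot distinguish this rotation from the zero map through the values $\langle x, Ax \rangle_{\mathbb{R}},$ while the complex inner product can.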

Orthonormal sequences

Let $V$ be a finite dimensional inner product space of dimension $n.$ Recall that every basis of $V$ consists of exactly $n$ linearly independent vectors. Using the Gram–Schmidt process we may start with an arbitrary basis and transform it into an orthonormal basis, that is, into a basis in which all the elements are orthogonal and have unit norm. In symbols, a basis $\{e_1, \ldots, e_n\}$ is orthonormal if $\langle e_i, e_j \rangle = 0$ for every $i \neq j$ and $\langle e_i, e_i \rangle = \|e_i\|^2 = 1$ for each index $i.$
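A minimal sketch of the Gram–Schmidt process for vectors in $\mathbb{R}^n$ or $\mathbb{C}^n$ follows. It assumes the standard inner product (linear in the first argument) and uses the "modified" ordering, in which each projection is subtracted from the partially reduced vector; this is one common variant rather than the only formulation. The tolerance `1e-12` and the function names are illustrative assumptions.

```python
import math

def inner(x, y):
    """Standard inner product, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def gram_schmidt(basis):
    """Turn a list of linearly independent vectors into an orthonormal list."""
    ortho = []
    for v in basis:
        w = list(v)
        for e in ortho:
            c = inner(w, e)  # component of w along the unit vector e
            w = [wi - c * ei for wi, ei in zip(w, e)]
        n = math.sqrt(inner(w, w).real)
        if n < 1e-12:
            raise ValueError("vectors are linearly dependent")
        ortho.append([wi / n for wi in w])
    return ortho

E = gram_schmidt([[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]])
for e in E:
    print(e)
```

Each output vector has unit norm, distinct output vectors have inner product zero up to rounding, and the first $k$ outputs span the same subspace as the first $k$ inputs.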

This definition of orthonormal basis generalizes to the case of infinite-dimensional inner product spaces in the following way. Let $V$ be any inner product space. Then a collection

$$E = \{ e_a \}_{a \in A}$$

is a basis for $V$ if the subspace of $V$ generated by finite linear combinations of elements of $E$ is dense in $V$ (in the norm induced by the inner product). Say that $E$ is an orthonormal basis for $V$ if it is a basis and

$$\langle e_a, e_b \rangle = 0$$

if $a \neq b$ and $\langle e_a, e_a \rangle = \|e_a\|^2 = 1$ for all $a, b \in A.$

Using an infinite-dimensional analog of the Gram-Schmidt process one may show:

Theorem. Any separable inner product space has an orthonormal basis.

Using the Hausdorff maximal principle and the fact that in a complete inner product space orthogonal projection onto linear subspaces is well-defined, one may also show that

Theorem. Any complete inner product space has an orthonormal basis.

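The two previous theorems raise the question of whether all inner product spaces have an orthonormal basis. The answer, it turns out, is negative. This is a non-trivial result, and is proved below. The following proof is taken from Halmos's A Hilbert Space Problem Book (see the references).

Proof. Recall that the dimension of an inner product space is the cardinality of a maximal orthonormal system that it contains (by Zorn's lemma it contains at least one, and any two have the same cardinality). An orthonormal basis is certainly a maximal orthonormal system, but the converse need not hold in general. If $G$ is a dense subspace of an inner product space $V,$ then any orthonormal basis for $G$ is automatically an orthonormal basis for $V.$ Thus, it suffices to construct an inner product space $V$ with a dense subspace $G$ whose dimension is strictly smaller than that of $V.$

Let $K$ be a Hilbert space of dimension $\aleph_0$ (for instance, $K = \ell^2(\mathbb{N})$). Let $E$ be an orthonormal basis of $K,$ so $|E| = \aleph_0.$ Extend $E$ to a Hamel basis $E \cup F$ for $K,$ where $E \cap F = \varnothing.$ Since it is known that the Hamel dimension of $K$ is $\mathfrak{c},$ the cardinality of the continuum, it must be that $|F| = \mathfrak{c}.$

Let $L$ be a Hilbert space of dimension $\mathfrak{c}$ (for instance, $L = \ell^2(\mathbb{R})$). Let $B$ be an orthonormal basis for $L$ and let $\varphi : F \to B$ be a bijection. Then there is a linear transformation $T : K \to L$ such that $Tf = \varphi(f)$ for $f \in F,$ and $Te = 0$ for $e \in E.$

Let $V = K \oplus L$ and let $G = \{ (k, Tk) : k \in K \}$ be the graph of $T.$ Let $\overline{G}$ be the closure of $G$ in $V$; we will show $\overline{G} = V.$ Since for any $e \in E$ we have $(e, 0) \in G,$ it follows that $K \oplus 0 \subseteq \overline{G}.$

Next, if $b \in B,$ then $b = Tf$ for some $f \in F \subseteq K,$ so $(f, b) \in G \subseteq \overline{G}$; since $(f, 0) \in \overline{G}$ as well, we also have $(0, b) \in \overline{G}.$ It follows that $0 \oplus L \subseteq \overline{G},$ so $\overline{G} = V,$ and $G$ is dense in $V.$

Finally, $\{ (e, 0) : e \in E \}$ is a maximal orthonormal set in $G$; if

$$0 = \langle (e, 0), (k, Tk) \rangle = \langle e, k \rangle + \langle 0, Tk \rangle = \langle e, k \rangle$$

for all $e \in E,$ then $k = 0,$ so $(k, Tk) = (0, 0)$ is the zero vector in $G.$ Hence the dimension of $G$ is $|E| = \aleph_0,$ whereas it is clear that the dimension of $V$ is $\mathfrak{c}.$ This completes the proof.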

Parseval's identity leads immediately to the following theorem:

Theorem. Let $V$ be a separable inner product space and $\{e_k\}_k$ an orthonormal basis of $V.$ Then the map

$$x \mapsto \{ \langle x, e_k \rangle \}_{k \in \mathbb{N}}$$

is an isometric linear map $V \to \ell^2$ with a dense image.

This theorem can be regarded as an abstract form of Fourier series, in which an arbitrary orthonormal basis plays the role of the sequence of trigonometric polynomials. Note that the underlying index set can be taken to be any countable set (and in fact any set whatsoever, provided $\ell^2$ is defined appropriately, as is explained in the article Hilbert space). In particular, we obtain the following result in the theory of Fourier series:

Theorem. Let $V$ be the inner product space $C[-\pi, \pi].$ Then the sequence (indexed on the set of all integers) of continuous functions

$$e_k(t) = \frac{e^{ikt}}{\sqrt{2\pi}}$$

is an orthonormal basis of the space $C[-\pi, \pi]$ with the $L^2$ inner product. The mapping

$$f \mapsto \frac{1}{\sqrt{2\pi}} \left\{ \int_{-\pi}^{\pi} f(t) e^{-ikt} \, \mathrm{d}t \right\}_{k \in \mathbb{Z}}$$

is an isometric linear map with dense image.

Orthogonality of the sequence $\{e_k\}_k$ follows immediately from the fact that if $k \neq j,$ then

$$\int_{-\pi}^{\pi} e^{-i(j - k)t} \, \mathrm{d}t = 0.$$

Normality of the sequence is by design, that is, the coefficients are chosen so that the norm comes out to 1. Finally, the fact that the sequence has a dense algebraic span, in the inner product norm, follows from the fact that the sequence has a dense algebraic span, this time in the space of continuous periodic functions on $[-\pi, \pi]$ with the uniform norm. This is the content of the Weierstrass theorem on the uniform density of trigonometric polynomials.
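Orthonormality of the exponentials can be checked numerically. The sketch below assumes the functions $e_k(t) = e^{ikt} / \sqrt{2\pi}$ on $[-\pi, \pi]$ and approximates the inner product $\int_{-\pi}^{\pi} f(t) \overline{g(t)} \, \mathrm{d}t$ with a midpoint rule on `n` equal subintervals; the quadrature routine and its default `n=2000` are illustrative assumptions, not part of the theorem.

```python
import cmath
import math

def e(k, t):
    """The k-th normalized complex exponential on [-pi, pi]."""
    return cmath.exp(1j * k * t) / math.sqrt(2 * math.pi)

def l2_inner(f, g, n=2000):
    """Midpoint-rule approximation of the integral of f * conj(g) on [-pi, pi]."""
    h = 2 * math.pi / n
    points = (-math.pi + (i + 0.5) * h for i in range(n))
    return h * sum(f(t) * g(t).conjugate() for t in points)

norm_sq_e2 = l2_inner(lambda t: e(2, t), lambda t: e(2, t))
cross_2_5 = l2_inner(lambda t: e(2, t), lambda t: e(5, t))

print(abs(norm_sq_e2))  # close to 1
print(abs(cross_2_5))   # close to 0
```

For these periodic integrands the midpoint rule is accurate up to rounding as long as the difference of the two indices is not a nonzero multiple of `n`.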

Operators on inner product spaces

Several types of linear maps $A : V \to W$ between inner product spaces $V$ and $W$ are of relevance:

- Continuous linear maps: $A : V \to W$ is linear and continuous with respect to the metric defined above, or equivalently, $A$ is linear and the set of non-negative reals $\{ \|Ax\| : \|x\| \leq 1 \},$ where $x$ ranges over the closed unit ball of $V,$ is bounded.

- Symmetric linear operators: $A : V \to W$ is linear and $\langle Ax, y \rangle = \langle x, Ay \rangle$ for all $x, y \in V.$

- Isometries: $A : V \to W$ satisfies $\|Ax\| = \|x\|$ for all $x \in V.$ A linear isometry (resp. an antilinear isometry) is an isometry that is also a linear map (resp. an antilinear map). For inner product spaces, the polarization identity can be used to show that $A$ is an isometry if and only if $\langle Ax, Ay \rangle = \langle x, y \rangle$ for all $x, y \in V.$ All isometries are injective. The Mazur–Ulam theorem establishes that every surjective isometry between two real normed spaces is an affine transformation. Consequently, an isometry $A$ between real inner product spaces is a linear map if and only if $A(0) = 0.$ Isometries are morphisms between inner product spaces, and morphisms of real inner product spaces are orthogonal transformations (compare with orthogonal matrix).

- Isometrical isomorphisms: $A : V \to W$ is an isometry which is surjective (and hence bijective). Isometrical isomorphisms are also known as unitary operators (compare with unitary matrix).

From the point of view of inner product space theory, there is no need to distinguish between two spaces which are isometrically isomorphic. The spectral theorem provides a canonical form for symmetric, unitary and more generally normal operators on finite dimensional inner product spaces. A generalization of the spectral theorem holds for continuous normal operators in Hilbert spaces.[13]

Generalizations

Any of the axioms of an inner product may be weakened, yielding generalized notions. The generalizations that are closest to inner products occur where bilinearity and conjugate symmetry are retained, but positive-definiteness is weakened.

Degenerate inner products

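If $V$ is a vector space and $\langle \cdot, \cdot \rangle$ a semi-definite sesquilinear form, then the function

$$\|x\| = \sqrt{\langle x, x \rangle}$$

makes sense and satisfies all the properties of a norm except that $\|x\| = 0$ does not imply $x = 0$ (such a functional is then called a semi-norm). We can produce an inner product space by considering the quotient $W = V / \{ x : \|x\| = 0 \}.$ The sesquilinear form $\langle \cdot, \cdot \rangle$ factors through $W.$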

This construction is used in numerous contexts. The Gelfand–Naimark–Segal construction is a particularly important example of the use of this technique. Another example is the representation of semi-definite kernels on arbitrary sets.

Nondegenerate conjugate symmetric forms

Alternatively, one may require that the pairing be a nondegenerate form, meaning that for all non-zero $x$ there exists some $y$ such that $\langle x, y \rangle \neq 0,$ though $y$ need not equal $x$; in other words, the induced map to the dual space $V \to V^{*}$ is injective. This generalization is important in differential geometry: a manifold whose tangent spaces have an inner product is a Riemannian manifold, while if the inner product is relaxed to a nondegenerate conjugate symmetric form, the manifold is a pseudo-Riemannian manifold. By Sylvester's law of inertia, just as every inner product is similar to the dot product with positive weights on a set of vectors, every nondegenerate conjugate symmetric form is similar to the dot product with nonzero weights on a set of vectors, and the numbers of positive and negative weights are called respectively the positive index and negative index. The product of vectors in Minkowski space is an example of an indefinite inner product, although, technically speaking, it is not an inner product according to the standard definition above. Minkowski space has four dimensions and indices 3 and 1 (the assignment of "+" and "−" to them differs depending on conventions).

Purely algebraic statements (ones that do not use positivity) usually only rely on the nondegeneracy (the injective homomorphism $V \to V^{*}$) and thus hold more generally.

Related products

The term "inner product" is opposed to outer product, which is a slightly more general opposite. Simply, in coordinates, the inner product is the product of a $1 \times n$ covector with an $n \times 1$ vector, yielding a $1 \times 1$ matrix (a scalar), while the outer product is the product of an $m \times 1$ vector with a $1 \times n$ covector, yielding an $m \times n$ matrix. The outer product is defined for different dimensions, while the inner product requires the same dimension. If the dimensions are the same, then the inner product is the trace of the outer product (trace only being properly defined for square matrices). In an informal summary: "inner is horizontal times vertical and shrinks down, outer is vertical times horizontal and expands out".

More abstractly, the outer product is the bilinear map $W \times V^{*} \to \hom(V, W)$ sending a vector and a covector to a rank 1 linear transformation (simple tensor of type (1, 1)), while the inner product is the bilinear evaluation map $V^{*} \times V \to F$ given by evaluating a covector on a vector; the order of the domain vector spaces here reflects the covector/vector distinction.
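The coordinate description can be illustrated with real vectors stored as plain lists. In the sketch below, the inner product of two vectors of length $n$ is a scalar, the outer product of vectors of lengths $m$ and $n$ is an $m \times n$ nested list, and for equal lengths the trace of the outer product equals the inner product. The function names are illustrative assumptions.

```python
def inner(x, y):
    """Row times column: a single scalar. Requires equal lengths."""
    if len(x) != len(y):
        raise ValueError("inner product needs vectors of the same length")
    return sum(a * b for a, b in zip(x, y))

def outer(x, y):
    """Column times row: a len(x) by len(y) matrix."""
    return [[a * b for b in y] for a in x]

def trace(M):
    return sum(M[i][i] for i in range(len(M)))

x, y = [1, 2, 3], [4, 5, 6]
print(inner(x, y))            # 32
print(outer(x, y))            # [[4, 5, 6], [8, 10, 12], [12, 15, 18]]
print(trace(outer(x, y)))     # 32
print(outer([1, 2], [3, 4, 5]))  # a 2 by 3 matrix: [[3, 4, 5], [6, 8, 10]]
```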

The inner product and outer product should not be confused with the interior product and exterior product, which are instead operations on vector fields and differential forms, or more generally on the exterior algebra.

As a further complication, in geometric algebra the inner product and the exterior (Grassmann) product are combined in the geometric product (the Clifford product in a Clifford algebra) – the inner product sends two vectors (1-vectors) to a scalar (a 0-vector), while the exterior product sends two vectors to a bivector (2-vector) – and in this context the exterior product is usually called the outer product (alternatively, wedge product). The inner product is more correctly called a scalar product in this context, as the nondegenerate quadratic form in question need not be positive definite (need not be an inner product).

See also

- Bilinear form – Scalar-valued bilinear function

- Biorthogonal system

- Dual space – In mathematics, vector space of linear forms

- Energetic space

- L-semi-inner product – Generalization of inner products that applies to all normed spaces

- Minkowski distance

- Orthogonal basis

- Orthogonal complement

- Orthonormal basis – Specific linear basis (mathematics)

Notes

- By combining linearity in the first argument with conjugate symmetry, one obtains conjugate-linearity in the second argument: $\langle x, by \rangle = \langle x, y \rangle \overline{b}.$ This is how the inner product was originally defined and is used in most mathematical contexts. A different convention has been adopted in theoretical physics and quantum mechanics, originating in the bra-ket notation of Paul Dirac, where the inner product is taken to be linear in the second argument and conjugate-linear in the first argument; this convention is used in many other domains such as engineering and computer science.

References

- Trèves 2006, pp. 112–125.

- Schaefer & Wolff 1999, pp. 40–45.

- Moore, Gregory H. (1995). "The axiomatization of linear algebra: 1875-1940". Historia Mathematica. 22 (3): 262–303. doi:10.1006/hmat.1995.1025.

- Schaefer & Wolff 1999, pp. 36–72.

- Jain, P. K.; Ahmad, Khalil (1995). "5.1 Definitions and basic properties of inner product spaces and Hilbert spaces". Functional Analysis (2nd ed.). New Age International. p. 203. ISBN 81-224-0801-X.

- Prugovečki, Eduard (1981). "Definition 2.1". Quantum Mechanics in Hilbert Space (2nd ed.). Academic Press. pp. 18ff. ISBN 0-12-566060-X.

- Schaefer & Wolff 1999, p. 44.

- Ouwehand, Peter (November 2010). "Spaces of Random Variables" (PDF). AIMS. Retrieved 2017-09-05.

- Siegrist, Kyle (1997). "Vector Spaces of Random Variables". Random: Probability, Mathematical Statistics, Stochastic Processes. Retrieved 2017-09-05.

- Bigoni, Daniele (2015). "Appendix B: Probability theory and functional spaces" (PDF). Uncertainty Quantification with Applications to Engineering Problems (PhD). Technical University of Denmark. Retrieved 2017-09-05.

- Apostol, Tom M. (1967). "Ptolemy's Inequality and the Chordal Metric". Mathematics Magazine. 40 (5): 233–235. doi:10.2307/2688275. JSTOR 2688275.

- Rudin 1991, pp. 306–312.

- Rudin 1991

Bibliography

- Axler, Sheldon (1997). Linear Algebra Done Right (2nd ed.). Berlin, New York: Springer-Verlag. ISBN 978-0-387-98258-8.

- Dieudonné, Jean (1969). Treatise on Analysis, Vol. I [Foundations of Modern Analysis] (2nd ed.). Academic Press. ISBN 978-1-4067-2791-3.

- Emch, Gerard G. (1972). Algebraic Methods in Statistical Mechanics and Quantum Field Theory. Wiley-Interscience. ISBN 978-0-471-23900-0.

- Halmos, Paul R. (8 November 1982). A Hilbert Space Problem Book. Graduate Texts in Mathematics. Vol. 19 (2nd ed.). New York: Springer-Verlag. ISBN 978-0-387-90685-0. OCLC 8169781.

- Lax, Peter D. (2002). Functional Analysis (PDF). Pure and Applied Mathematics. New York: Wiley-Interscience. ISBN 978-0-471-55604-6. OCLC 47767143. Retrieved July 22, 2020.

- Rudin, Walter (1991). Functional Analysis. International Series in Pure and Applied Mathematics. Vol. 8 (Second ed.). New York, NY: McGraw-Hill Science/Engineering/Math. ISBN 978-0-07-054236-5. OCLC 21163277.

- Schaefer, Helmut H.; Wolff, Manfred P. (1999). Topological Vector Spaces. GTM. Vol. 8 (Second ed.). New York, NY: Springer New York Imprint Springer. ISBN 978-1-4612-7155-0. OCLC 840278135.

- Schechter, Eric (1996). Handbook of Analysis and Its Foundations. San Diego, CA: Academic Press. ISBN 978-0-12-622760-4. OCLC 175294365.

- Swartz, Charles (1992). An introduction to Functional Analysis. New York: M. Dekker. ISBN 978-0-8247-8643-4. OCLC 24909067.

- Trèves, François (2006) [1967]. Topological Vector Spaces, Distributions and Kernels. Mineola, N.Y.: Dover Publications. ISBN 978-0-486-45352-1. OCLC 853623322.

- Young, Nicholas (1988). An Introduction to Hilbert Space. Cambridge University Press. ISBN 978-0-521-33717-5.