Shellsort

Shellsort, also known as Shell sort or Shell's method, is an in-place comparison sort. It can be seen as either a generalization of sorting by exchange (bubble sort) or sorting by insertion (insertion sort).[3] The method starts by sorting pairs of elements far apart from each other, then progressively reducing the gap between elements to be compared. By starting with far apart elements, it can move some out-of-place elements into position faster than a simple nearest neighbor exchange. Donald Shell published the first version of this sort in 1959.[4][5] The running time of Shellsort is heavily dependent on the gap sequence it uses. For many practical variants, determining their time complexity remains an open problem.

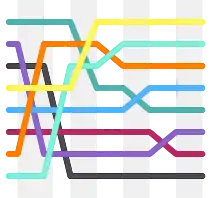

Shellsort with gaps 23, 10, 4, 1 in action

| Class | Sorting algorithm |
|---|---|
| Data structure | Array |
| Worst-case performance | $O(n^2)$ (worst known worst-case gap sequence); $O(n \log^2 n)$ (best known worst-case gap sequence)[1] |
| Best-case performance | $O(n \log n)$ (most gap sequences); $O(n \log^2 n)$ (best known worst-case gap sequence)[2] |
| Average performance | Depends on gap sequence |
| Worst-case space complexity | $O(n)$ total, $O(1)$ auxiliary |

Description

Shellsort is an optimization of insertion sort that allows the exchange of items that are far apart. The idea is to arrange the list of elements so that, starting anywhere, taking every hth element produces a sorted list. Such a list is said to be h-sorted. It can also be thought of as h interleaved lists, each individually sorted.[6] Beginning with large values of h allows elements to move long distances in the original list, reducing large amounts of disorder quickly, and leaving less work for smaller h-sort steps to do.[7] If the list is then k-sorted for some smaller integer k, then the list remains h-sorted. Following this idea for a decreasing sequence of h values ending in 1 is guaranteed to leave a sorted list in the end.[6]

In simple terms, if we have an array of 1024 numbers, the first gap (h) could be 512. We then run through the list, comparing each element in the first half with the element 512 positions later in the second half. The second gap (k) is 256, which breaks the array into four sections (starting at 0, 256, 512, 768); we make sure the first items of the sections are sorted relative to each other, then the second items, and so on. In practice the gap sequence could be anything, but the last gap is always 1 to finish the sort (effectively finishing with an ordinary insertion sort).
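
The halving scheme just described can be checked with a short Python sketch. The helper names `is_h_sorted` and `h_sort` are illustrative only, and `h_sort` simply sorts each interleaved sublist with Python's built-in `sorted` rather than with a gapped insertion sort. After every pass, the sketch confirms that the array is still sorted for all larger gaps processed earlier.

```python
import random


def is_h_sorted(a, h):
    """Return True if taking every h-th element, from any start, is in order."""
    return all(a[i] <= a[i + h] for i in range(len(a) - h))


def h_sort(a, h):
    """Return a copy of `a` in which each of the h interleaved sublists is sorted."""
    out = list(a)
    for start in range(h):
        out[start::h] = sorted(out[start::h])
    return out


rng = random.Random(0)          # fixed seed, chosen arbitrarily
a = list(range(1024))
rng.shuffle(a)

done = []                       # gaps processed so far
gap = 512
while gap >= 1:
    a = h_sort(a, gap)
    done.append(gap)
    # Sorting with a smaller gap must not undo any earlier, larger gap.
    assert all(is_h_sorted(a, g) for g in done)
    gap //= 2

print(a == list(range(1024)))   # True
```

Because the final gap is 1, the last pass leaves the whole array sorted regardless of what the earlier passes achieved.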

An example run of Shellsort with gaps 5, 3 and 1 is shown below.

| | a1 | a2 | a3 | a4 | a5 | a6 | a7 | a8 | a9 | a10 | a11 | a12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Input data | 62 | 83 | 18 | 53 | 07 | 17 | 95 | 86 | 47 | 69 | 25 | 28 |
| After 5-sorting | 17 | 28 | 18 | 47 | 07 | 25 | 83 | 86 | 53 | 69 | 62 | 95 |
| After 3-sorting | 17 | 07 | 18 | 47 | 28 | 25 | 69 | 62 | 53 | 83 | 86 | 95 |
| After 1-sorting | 07 | 17 | 18 | 25 | 28 | 47 | 53 | 62 | 69 | 83 | 86 | 95 |

The first pass, 5-sorting, performs insertion sort on five separate subarrays (a1, a6, a11), (a2, a7, a12), (a3, a8), (a4, a9), (a5, a10). For instance, it changes the subarray (a1, a6, a11) from (62, 17, 25) to (17, 25, 62). The next pass, 3-sorting, performs insertion sort on the three subarrays (a1, a4, a7, a10), (a2, a5, a8, a11), (a3, a6, a9, a12). The last pass, 1-sorting, is an ordinary insertion sort of the entire array (a1,..., a12).

As the example illustrates, the subarrays that Shellsort operates on are initially short; later they are longer but almost ordered. In both cases insertion sort works efficiently.
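
The example run can be reproduced with a few lines of Python. This is a minimal sketch: the function name `gapped_insertion_sort` and the `snapshots` dictionary are illustrative choices, and the input is the table's data with 0-based indexing.

```python
def gapped_insertion_sort(a, gap):
    """Sort, in place, each of the `gap` interleaved subarrays of `a`."""
    for i in range(gap, len(a)):
        temp = a[i]
        j = i
        # Shift larger elements of the same subarray up by one gap.
        while j >= gap and a[j - gap] > temp:
            a[j] = a[j - gap]
            j -= gap
        a[j] = temp


data = [62, 83, 18, 53, 7, 17, 95, 86, 47, 69, 25, 28]
snapshots = {}
for gap in (5, 3, 1):
    gapped_insertion_sort(data, gap)
    snapshots[gap] = list(data)
    print(f"After {gap}-sorting:", data)

# After 5-sorting: [17, 28, 18, 47, 7, 25, 83, 86, 53, 69, 62, 95]
# After 3-sorting: [17, 7, 18, 47, 28, 25, 69, 62, 53, 83, 86, 95]
# After 1-sorting: [7, 17, 18, 25, 28, 47, 53, 62, 69, 83, 86, 95]
```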

Shellsort is not stable: it may change the relative order of elements with equal values. It is an adaptive sorting algorithm in that it executes faster when the input is partially sorted.
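
The lack of stability is easy to observe on a tiny input. In the sketch below, records are compared by their first field only; the function name `shellsort_by_key` and the gap list `[2, 1]` are chosen purely for illustration.

```python
def shellsort_by_key(items, gaps, key):
    """Return a Shellsorted copy of `items`, comparing elements by `key` only."""
    a = list(items)
    for gap in gaps:
        for i in range(gap, len(a)):
            temp = a[i]
            j = i
            while j >= gap and key(a[j - gap]) > key(temp):
                a[j] = a[j - gap]
                j -= gap
            a[j] = temp
    return a


def first(record):
    return record[0]


records = [(2, "a"), (2, "b"), (1, "c")]
shell_result = shellsort_by_key(records, gaps=[2, 1], key=first)
stable_result = sorted(records, key=first)   # Python's built-in sort is stable

print(shell_result)    # [(1, 'c'), (2, 'b'), (2, 'a')]
print(stable_result)   # [(1, 'c'), (2, 'a'), (2, 'b')]
```

The gap-2 pass moves `(2, "a")` past `(2, "b")` while exchanging it with `(1, "c")`, and the final gap-1 pass never compares equal keys as out of order, so the two records with key 2 stay reversed.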

Pseudocode

The following pseudocode uses Marcin Ciura's gap sequence with an inner insertion sort.

    # Sort an array a[0...n-1].
    gaps = [701, 301, 132, 57, 23, 10, 4, 1]  # Ciura gap sequence

    # Start with the largest gap and work down to a gap of 1.
    # Each pass is like insertion sort, but with a step of gap instead of 1.
    foreach (gap in gaps)
    {
        # Do a gapped insertion sort for this gap size.
        # Each iteration leaves a[0..i] in gapped order.
        for (i = gap; i < n; i += 1)
        {
            # Save a[i] in temp and make a hole at position i.
            temp = a[i]
            # Shift earlier gap-sorted elements up until the correct location for a[i] is found.
            for (j = i; (j >= gap) && (a[j - gap] > temp); j -= gap)
            {
                a[j] = a[j - gap]
            }
            # Put temp (the original a[i]) in its correct location.
            a[j] = temp
        }
    }
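
A direct Python transcription of the pseudocode might look as follows. It is a sketch rather than a tuned implementation; the names `CIURA_GAPS` and `shellsort` are illustrative.

```python
CIURA_GAPS = [701, 301, 132, 57, 23, 10, 4, 1]


def shellsort(a, gaps=CIURA_GAPS):
    """Sort the list `a` in place with gapped insertion sorts and return it.

    `gaps` must be in decreasing order and end in 1.
    """
    n = len(a)
    for gap in gaps:
        # Gaps that are >= n produce an empty range and are skipped.
        for i in range(gap, n):
            temp = a[i]
            j = i
            while j >= gap and a[j - gap] > temp:
                a[j] = a[j - gap]
                j -= gap
            a[j] = temp
    return a


print(shellsort([5, 2, 9, 1, 5, 6]))   # [1, 2, 5, 5, 6, 9]
```

Since the fixed list stops at 701, very large inputs would need additional, larger gaps prepended to `gaps` to stay efficient; correctness does not depend on this, because the final gap of 1 is a full insertion sort.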

Gap sequences

The question of deciding which gap sequence to use is difficult. Every gap sequence that contains 1 yields a correct sort (as this makes the final pass an ordinary insertion sort); however, the properties of the resulting versions of Shellsort may be very different. Too few gaps slow down the passes, and too many gaps produce overhead.

The table below compares most proposed gap sequences published so far. Some of them have decreasing elements that depend on the size of the sorted array (N). Others are increasing infinite sequences, whose elements less than N should be used in reverse order.

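| OEIS | General term (k ≥ 1) | Concrete gaps | Worst-case time complexity | Author and year of publication |
|---|---|---|---|---|
| | $\lfloor N/2^k \rfloor$ | $1, 2, \ldots, \lfloor N/4 \rfloor, \lfloor N/2 \rfloor$ | $\Theta(N^2)$ [e.g. when $N = 2^p$] | Shell, 1959[4] |
| | $2\lfloor N/2^{k+1} \rfloor + 1$ | $1, 3, \ldots, 2\lfloor N/8 \rfloor + 1, 2\lfloor N/4 \rfloor + 1$ | $\Theta(N^{3/2})$ | Frank & Lazarus, 1960[8] |
| A000225 | $2^k - 1$ | 1, 3, 7, 15, 31, 63, ... | $\Theta(N^{3/2})$ | Hibbard, 1963[9] |
| A083318 | $2^k + 1$, prefixed with 1 | 1, 3, 5, 9, 17, 33, 65, ... | $\Theta(N^{3/2})$ | Papernov & Stasevich, 1965[10] |
| A003586 | Successive numbers of the form $2^p 3^q$ (3-smooth numbers) | 1, 2, 3, 4, 6, 8, 9, 12, ... | $\Theta(N \log^2 N)$ | Pratt, 1971[1] |
| A003462 | $(3^k - 1)/2$, not greater than $\lceil N/3 \rceil$ | 1, 4, 13, 40, 121, ... | $\Theta(N^{3/2})$ | Knuth, 1973,[3] based on Pratt, 1971[1] |
| A036569 | $\prod_{q \in I} a_q$, where $a_0 = 3$, $a_q = \min\{n \in \mathbb{N} \colon n \ge (5/2)^{q+1},\ \forall p \colon 0 \le p < q \Rightarrow \gcd(a_p, n) = 1\}$, $I = \{0 \le q < r \mid q \ne \tfrac{1}{2}(r^2 + r) - k\}$, $r = \lfloor \sqrt{2k + \sqrt{2k}} \rfloor$ | 1, 3, 7, 21, 48, 112, ... | $O\left(N^{1 + \sqrt{8 \ln(5/2) / \ln N}}\right)$ | Incerpi & Sedgewick, 1985,[11] Knuth[3] |
| A036562 | $4^k + 3 \cdot 2^{k-1} + 1$, prefixed with 1 | 1, 8, 23, 77, 281, ... | $O(N^{4/3})$ | Sedgewick, 1982[6] |
| A033622 | $9(2^k - 2^{k/2}) + 1$ for even $k$; $8 \cdot 2^k - 6 \cdot 2^{(k+1)/2} + 1$ for odd $k$ ($k \ge 0$) | 1, 5, 19, 41, 109, ... | $O(N^{4/3})$ | Sedgewick, 1986[12] |
| | $h_k = \max\{\lfloor (5h_{k-1} - 1)/11 \rfloor, 1\}$, $h_0 = N$ | $\lfloor (5N - 1)/11 \rfloor, \ldots, 1$ | Unknown | Gonnet & Baeza-Yates, 1991[13] |
| A108870 | $\left\lceil \tfrac{1}{5}\left(9 \cdot (9/4)^{k-1} - 4\right) \right\rceil$ | 1, 4, 9, 20, 46, 103, ... | Unknown | Tokuda, 1992[14] |
| A102549 | Unknown (experimentally derived) | 1, 4, 10, 23, 57, 132, 301, 701 | Unknown | Ciura, 2001[15] |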

When the binary representation of N contains many consecutive zeroes, Shellsort using Shell's original gap sequence makes $\Theta(N^2)$ comparisons in the worst case. For instance, this case occurs for N equal to a power of two when elements greater and smaller than the median occupy odd and even positions respectively, since they are compared only in the last pass.
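
This worst case can be observed by counting element shifts per pass. The sketch below assumes Shell's gaps in the form N/2, N/4, ..., 1 and builds an input in which the larger half of the values sits at odd positions (1-based); the helper names `shell_gaps` and `shifts_per_pass` are illustrative. Shifts are counted rather than comparisons because every shift follows a comparison, so the shift count is a lower bound on the number of comparisons.

```python
def shell_gaps(n):
    """Shell's original gaps for an array of size n: n//2, n//4, ..., 1."""
    gaps = []
    gap = n // 2
    while gap >= 1:
        gaps.append(gap)
        gap //= 2
    return gaps


def shifts_per_pass(a, gaps):
    """Shellsort a copy of `a`; return {gap: number of element shifts}."""
    a = list(a)
    counts = {}
    for gap in gaps:
        shifts = 0
        for i in range(gap, len(a)):
            temp = a[i]
            j = i
            while j >= gap and a[j - gap] > temp:
                a[j] = a[j - gap]
                j -= gap
                shifts += 1
            a[j] = temp
        counts[gap] = shifts
    return counts


n = 16
half = n // 2
bad = [0] * n
bad[0::2] = range(half + 1, n + 1)   # 1-based odd positions: 9..16
bad[1::2] = range(1, half + 1)       # 1-based even positions: 1..8

counts = shifts_per_pass(bad, shell_gaps(n))
print(counts)   # {8: 0, 4: 0, 2: 0, 1: 36}
```

All gaps except the last are even, so the two halves never meet before the final pass, which then performs m(m + 1)/2 shifts for m = N/2 (36 for N = 16), a quantity that grows quadratically in N.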

Although it has higher complexity than the O(N log N) that is optimal for comparison sorts, Pratt's version lends itself to sorting networks and has the same asymptotic gate complexity as Batcher's bitonic sorter.

Gonnet and Baeza-Yates observed that Shellsort makes the fewest comparisons on average when the ratios of successive gaps are roughly equal to 2.2.[13] This is why their sequence with ratio 2.2 and Tokuda's sequence with ratio 2.25 prove efficient. However, it is not known why this is so. Sedgewick recommends using gaps which have low greatest common divisors or are pairwise coprime.[16]

With respect to the average number of comparisons, Ciura's sequence[15] has the best known performance; gaps larger than 701 were not determined, but the sequence can be extended according to the recursive formula $h_k = \lfloor 2.25 h_{k-1} \rfloor$.

Tokuda's sequence, defined by the simple formula $h_k = \lceil h'_k \rceil$, where $h'_k = 2.25 h'_{k-1} + 1$, $h'_1 = 1$, can be recommended for practical applications.
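
Both sequences are simple to generate. The sketch below assumes the commonly quoted recurrences, namely $h_k = \lceil h'_k \rceil$ with $h'_k = 2.25 h'_{k-1} + 1$, $h'_1 = 1$ for Tokuda's gaps, and $h_k = \lfloor 2.25 h_{k-1} \rfloor$ for extending Ciura's gaps beyond 701. The function names are illustrative, and exact rational and integer arithmetic is used to avoid rounding issues.

```python
from fractions import Fraction
from math import ceil

CIURA = [1, 4, 10, 23, 57, 132, 301, 701]


def tokuda_gaps(n):
    """Tokuda gaps smaller than n, largest first (assumed recurrence, see above)."""
    gaps = []
    h = Fraction(1)                      # h'_1 = 1
    while ceil(h) < n:
        gaps.append(ceil(h))
        h = Fraction(9, 4) * h + 1       # h'_k = 2.25 * h'_(k-1) + 1
    return gaps[::-1]


def extended_ciura_gaps(n):
    """Ciura gaps smaller than n, extended by floor(2.25 * h), largest first."""
    gaps = [g for g in CIURA if g < n]
    h = CIURA[-1] * 9 // 4               # floor(2.25 * 701) = 1577
    while h < n:
        gaps.append(h)
        h = h * 9 // 4
    return gaps[::-1]


print(tokuda_gaps(2000))
# [1182, 525, 233, 103, 46, 20, 9, 4, 1]
print(extended_ciura_gaps(10000))
# [7983, 3548, 1577, 701, 301, 132, 57, 23, 10, 4, 1]
```

Either list can be passed as the gap sequence of a Shellsort routine that expects gaps in decreasing order ending in 1.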

If the maximum input size is small, as may occur if Shellsort is used on small subarrays by another recursive sorting algorithm such as quicksort or merge sort, then it is possible to tabulate an optimal sequence for each input size.[17]

Computational complexity

The following property holds: after $h_2$-sorting of any $h_1$-sorted array, the array remains $h_1$-sorted.[18] Every $h_1$-sorted and $h_2$-sorted array is also $(a_1h_1 + a_2h_2)$-sorted, for any nonnegative integers $a_1$ and $a_2$. The worst-case complexity of Shellsort is therefore connected with the Frobenius problem: for given integers $h_1, \ldots, h_n$ with gcd = 1, the Frobenius number $g(h_1, \ldots, h_n)$ is the greatest integer that cannot be represented as $a_1h_1 + \cdots + a_nh_n$ with nonnegative integers $a_1, \ldots, a_n$. Using known formulae for Frobenius numbers, we can determine the worst-case complexity of Shellsort for several classes of gap sequences.[19] Proven results are shown in the above table.
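
For small gaps, the Frobenius number can be found by brute force, which makes the connection concrete. The sketch below uses an illustrative helper, `frobenius_number`, and applies it to the gaps 3 and 5 from the example run: an array that is both 5-sorted and 3-sorted can only contain inversions at distances that are not representable as $3a_1 + 5a_2$, so no inversion spans more than $g(3, 5) = 7$ positions.

```python
from functools import reduce
from math import gcd


def frobenius_number(gaps):
    """Largest integer that is not a nonnegative integer combination of `gaps`.

    Brute force; returns -1 if every nonnegative integer is representable.
    """
    if reduce(gcd, gaps) != 1:
        raise ValueError("gaps must have greatest common divisor 1")
    # The product min * max lies above a classical upper bound on the result.
    limit = min(gaps) * max(gaps)
    reachable = [False] * (limit + 1)
    reachable[0] = True
    for x in range(1, limit + 1):
        reachable[x] = any(x >= g and reachable[x - g] for g in gaps)
    unreachable = [x for x in range(limit + 1) if not reachable[x]]
    return unreachable[-1] if unreachable else -1


# The array after 5-sorting and 3-sorting in the example run.
a = [17, 7, 18, 47, 28, 25, 69, 62, 53, 83, 86, 95]
g = frobenius_number([3, 5])
max_inversion_distance = max(
    j - i
    for i in range(len(a))
    for j in range(i + 1, len(a))
    if a[i] > a[j]
)
print(g, max_inversion_distance)   # 7 2
```

The bound limits how far any element can travel in the final insertion-sort pass, which is the mechanism behind the worst-case results for specific gap sequences.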

With respect to the average number of operations, none of the proven results concerns a practical gap sequence. For gaps that are powers of two, Espelid computed this average as $0.5349N\sqrt{N} - 0.4387N - 0.097\sqrt{N} + O(1)$.[20] Knuth determined the average complexity of sorting an N-element array with two gaps (h, 1) to be $\frac{2N^2}{h} + \sqrt{\pi N^3 h}$.[3] It follows that a two-pass Shellsort with $h = \Theta(N^{1/3})$ makes on average $O(N^{5/3})$ comparisons/inversions/running time. Yao found the average complexity of a three-pass Shellsort.[21] His result was refined by Janson and Knuth:[22] the average number of comparisons/inversions/running time made during a Shellsort with three gaps (ch, cg, 1), where h and g are coprime, is $\frac{N^2}{4ch} + O(N)$ in the first pass, $\frac{1}{8g}\sqrt{\frac{\pi}{ch}}(h - 1)N^{3/2} + O(hN)$ in the second pass and $\psi(h, g)N + \frac{1}{8}\sqrt{\frac{\pi}{c}}(c - 1)N^{3/2} + O\left((c - 1)gh^{1/2}N\right) + O\left(c^2g^3h^2\right)$ in the third pass. $\psi(h, g)$ in the last formula is a complicated function asymptotically equal to $\sqrt{\frac{\pi h}{128}}\,g + O\left(g^{-1/2}h^{1/2}\right) + O\left(gh^{-1/2}\right)$. In particular, when $h = \Theta(N^{7/15})$ and $g = \Theta(N^{1/5})$, the average time of sorting is $O(N^{23/15})$.
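
The choice $h = \Theta(N^{1/3})$ for two passes can be motivated by a short calculation. The sketch below assumes Knuth's two-pass estimate in the form $f(h) = 2N^2/h + \sqrt{\pi N^3 h}$, treats $h$ as a continuous variable and ignores lower-order terms, so it is an illustration rather than a rigorous derivation.

$$
\begin{aligned}
f(h) &= \frac{2N^2}{h} + \sqrt{\pi N^3 h}, \\
f'(h) &= -\frac{2N^2}{h^2} + \frac{1}{2}\sqrt{\frac{\pi N^3}{h}} = 0
\;\Longrightarrow\; h^{3/2} = 4\sqrt{\frac{N}{\pi}}
\;\Longrightarrow\; h^{*} = \left(\frac{16N}{\pi}\right)^{1/3} \approx 1.72\,N^{1/3}, \\
f(h^{*}) &= 3 \cdot \frac{2N^2}{h^{*}} = 6\left(\frac{\pi}{16}\right)^{1/3} N^{5/3} \approx 3.49\,N^{5/3}.
\end{aligned}
$$

At the minimum the second term is exactly twice the first, which is why the total equals three times $2N^2/h^{*}$; both terms are of order $N^{5/3}$, in agreement with the bound stated above.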

Based on experiments, it is conjectured that Shellsort with Hibbard's gap sequence runs in $O(N^{5/4})$ average time,[3] and that Gonnet and Baeza-Yates's sequence requires on average $0.41N \ln N (\ln \ln N + 1/6)$ element moves.[13] Approximations of the average number of operations formerly put forward for other sequences fail when sorted arrays contain millions of elements.

The graph below shows the average number of element comparisons in various variants of Shellsort, divided by the theoretical lower bound, i.e. $\log_2 N!$, where the sequence 1, 4, 10, 23, 57, 132, 301, 701 has been extended according to the formula $h_k = \lfloor 2.25 h_{k-1} \rfloor$.

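[Graph: average number of element comparisons of several Shellsort variants, relative to the theoretical lower bound]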
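
A small experiment along the same lines can be sketched in Python. The function names, the number of trials and the seed are arbitrary choices made for illustration, and a few random trials give only a rough estimate; `math.lgamma` is used to evaluate $\log_2 N!$ without computing the factorial itself.

```python
import math
import random


def count_comparisons(a, gaps):
    """Shellsort a copy of `a` and return the number of element comparisons."""
    a = list(a)
    comparisons = 0
    for gap in gaps:
        for i in range(gap, len(a)):
            temp = a[i]
            j = i
            while j >= gap:
                comparisons += 1
                if a[j - gap] <= temp:
                    break
                a[j] = a[j - gap]
                j -= gap
            a[j] = temp
    return comparisons


def log2_factorial(n):
    """log2(n!) computed via the log-gamma function."""
    return math.lgamma(n + 1) / math.log(2)


def average_ratio(n, gaps, trials=20, seed=0):
    """Average comparisons on random permutations, divided by log2(n!)."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        a = list(range(n))
        rng.shuffle(a)
        total += count_comparisons(a, gaps)
    return total / trials / log2_factorial(n)


ciura = [701, 301, 132, 57, 23, 10, 4, 1]
powers_of_two = [512, 256, 128, 64, 32, 16, 8, 4, 2, 1]
print("Ciura:        ", round(average_ratio(1000, ciura), 3))
print("Powers of two:", round(average_ratio(1000, powers_of_two), 3))
```

A ratio close to 1 would mean the variant is near the information-theoretic minimum for comparison sorts; the measured ratios depend on N, on the gap sequence and on the random sample.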

Applying the theory of Kolmogorov complexity, Jiang, Li, and Vitányi[23] proved the following lower bound for the order of the average number of operations/running time in a p-pass Shellsort: $\Omega(pN^{1+1/p})$ when $p \le \log_2 N$ and $\Omega(pN)$ when $p > \log_2 N$. Therefore, Shellsort has prospects of running in an average time that asymptotically grows like $N \log N$ only when using gap sequences whose number of gaps grows in proportion to the logarithm of the array size. It is, however, unknown whether Shellsort can reach this asymptotic order of average-case complexity, which is optimal for comparison sorts. The lower bound was improved by Vitányi[24] for every number of passes $p$ to $\Omega\left(N \sum_{k=1}^{p} h_{k-1}/h_k\right)$, where $h_0 = N$. This result implies, for example, the Jiang-Li-Vitányi lower bound for all $p$-pass increment sequences and improves that lower bound for particular increment sequences. In fact, all bounds (lower and upper) currently known for the average case are precisely matched by this lower bound. For example, this gives the new result that the Janson-Knuth upper bound is matched by the resulting lower bound for the used increment sequence, showing that three-pass Shellsort for this increment sequence uses $\Theta(N^{23/15})$ comparisons/inversions/running time. The formula allows us to search for increment sequences that yield lower bounds which are unknown; for example, an increment sequence for four passes which has a lower bound greater than $\Omega(pN^{1+1/p}) = \Omega(N^{5/4})$ for the increment sequence $h_1 = N^{11/16}$, $h_2 = N^{7/16}$, $h_3 = N^{3/16}$, $h_4 = 1$. The lower bound becomes $T = \Omega\left(N \cdot \left(N^{1-11/16} + N^{11/16-7/16} + N^{7/16-3/16} + N^{3/16}\right)\right) = \Omega(N^{1+5/16}) = \Omega(N^{21/16})$.

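The worst-case complexity of any version of Shellsort is of higher order: Plaxton, Poonen, and Suel showed that it grows at least as rapidly as $\Omega\left(N \left(\frac{\log N}{\log \log N}\right)^2\right)$.[25][26] Robert Cypher proved a stronger lower bound: $\Omega\left(N \frac{(\log N)^2}{\log \log N}\right)$ when $h_{s+1} > h_s$ for all $s$.[27]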

Applications

Shellsort performs more operations and has a higher cache miss ratio than quicksort. However, since it can be implemented using little code and does not use the call stack, some implementations of the qsort function in the C standard library targeted at embedded systems use it instead of quicksort. Shellsort is, for example, used in the uClibc library.[28] For similar reasons, in the past, Shellsort was used in the Linux kernel.[29]

Shellsort can also serve as a sub-algorithm of introspective sort, to sort short subarrays and to prevent a slowdown when the recursion depth exceeds a given limit. This principle is employed, for instance, in the bzip2 compressor.[30]

See also

- Comb sort

References

- Pratt, Vaughan Ronald (1979). Shellsort and Sorting Networks (Outstanding Dissertations in the Computer Sciences) (PDF). Garland. ISBN 978-0-8240-4406-0. Archived (PDF) from the original on 7 September 2021.

- "Shellsort & Comparisons".

- Knuth, Donald E. (1997). "Shell's method". The Art of Computer Programming. Volume 3: Sorting and Searching (2nd ed.). Reading, Massachusetts: Addison-Wesley. pp. 83–95. ISBN 978-0-201-89685-5.

- Shell, D. L. (1959). "A High-Speed Sorting Procedure" (PDF). Communications of the ACM. 2 (7): 30–32. doi:10.1145/368370.368387.

- Some older textbooks and references call this the "Shell–Metzner" sort after Marlene Metzner Norton, but according to Metzner, "I had nothing to do with the sort, and my name should never have been attached to it." See "Shell sort". National Institute of Standards and Technology. Retrieved 17 July 2007.

- Sedgewick, Robert (1998). Algorithms in C. Vol. 1 (3rd ed.). Addison-Wesley. pp. 273–281. ISBN 978-0-201-31452-6.

- Kernighan, Brian W.; Ritchie, Dennis M. (1996). The C Programming Language (2nd ed.). Prentice Hall. p. 62. ISBN 978-7-302-02412-5.

- Frank, R. M.; Lazarus, R. B. (1960). "A High-Speed Sorting Procedure". Communications of the ACM. 3 (1): 20–22. doi:10.1145/366947.366957.

- Hibbard, Thomas N. (1963). "An Empirical Study of Minimal Storage Sorting". Communications of the ACM. 6 (5): 206–213. doi:10.1145/366552.366557.

- Papernov, A. A.; Stasevich, G. V. (1965). "A Method of Information Sorting in Computer Memories" (PDF). Problems of Information Transmission. 1 (3): 63–75.

- Incerpi, Janet; Sedgewick, Robert (1985). "Improved Upper Bounds on Shellsort" (PDF). Journal of Computer and System Sciences. 31 (2): 210–224. doi:10.1016/0022-0000(85)90042-x.

- Sedgewick, Robert (1986). "A New Upper Bound for Shellsort". Journal of Algorithms. 7 (2): 159–173. doi:10.1016/0196-6774(86)90001-5.

- Gonnet, Gaston H.; Baeza-Yates, Ricardo (1991). "Shellsort". Handbook of Algorithms and Data Structures: In Pascal and C (2nd ed.). Reading, Massachusetts: Addison-Wesley. pp. 161–163. ISBN 978-0-201-41607-7. "Extensive experiments indicate that the sequence defined by α = 0.45454 < 5/11 performs significantly better than other sequences. The easiest way to compute ⌊0.45454n⌋ is by (5 * n - 1)/11 using integer arithmetic."

- Tokuda, Naoyuki (1992). "An Improved Shellsort". In van Leeuwen, Jan (ed.). Proceedings of the IFIP 12th World Computer Congress on Algorithms, Software, Architecture. Amsterdam: North-Holland Publishing Co. pp. 449–457. ISBN 978-0-444-89747-3.

- Ciura, Marcin (2001). "Best Increments for the Average Case of Shellsort" (PDF). In Freiwalds, Rusins (ed.). Proceedings of the 13th International Symposium on Fundamentals of Computation Theory. London: Springer-Verlag. pp. 106–117. ISBN 978-3-540-42487-1.

- Sedgewick, Robert (1998). "Shellsort". Algorithms in C++, Parts 1–4: Fundamentals, Data Structure, Sorting, Searching. Reading, Massachusetts: Addison-Wesley. pp. 285–292. ISBN 978-0-201-35088-3.

- Forshell, Olof (22 May 2018). "How to choose the lengths of my sub sequences for a shell sort?". Stack Overflow. Additional commentary at Fastest gap sequence for shell sort? (23 May 2018).

- Gale, David; Karp, Richard M. (April 1972). "A Phenomenon in the Theory of Sorting" (PDF). Journal of Computer and System Sciences. 6 (2): 103–115. doi:10.1016/S0022-0000(72)80016-3.

- Selmer, Ernst S. (March 1989). "On Shellsort and the Frobenius Problem" (PDF). BIT Numerical Mathematics. 29 (1): 37–40. doi:10.1007/BF01932703. hdl:1956/19572.

- Espelid, Terje O. (December 1973). "Analysis of a Shellsort Algorithm". BIT Numerical Mathematics. 13 (4): 394–400. doi:10.1007/BF01933401. The quoted result is equation (8) on p. 399.

- Yao, Andrew Chi-Chih (1980). "An Analysis of (h, k, 1)-Shellsort" (PDF). Journal of Algorithms. 1 (1): 14–50. doi:10.1016/0196-6774(80)90003-6. STAN-CS-79-726. Archived from the original (PDF) on 4 March 2019.

- Janson, Svante; Knuth, Donald E. (1997). "Shellsort with Three Increments" (PDF). Random Structures and Algorithms. 10 (1–2): 125–142. arXiv:cs/9608105. CiteSeerX 10.1.1.54.9911. doi:10.1002/(SICI)1098-2418(199701/03)10:1/2<125::AID-RSA6>3.0.CO;2-X.

- Jiang, Tao; Li, Ming; Vitányi, Paul (September 2000). "A Lower Bound on the Average-Case Complexity of Shellsort" (PDF). Journal of the ACM. 47 (5): 905–911. arXiv:cs/9906008. CiteSeerX 10.1.1.6.6508. doi:10.1145/355483.355488.

- Vitányi, Paul (March 2018). "On the average-case complexity of Shellsort" (PDF). Random Structures and Algorithms. 52 (2): 354–363. arXiv:1501.06461. doi:10.1002/rsa.20737.

- Plaxton, C. Greg; Poonen, Bjorn; Suel, Torsten (24–27 October 1992). Improved Lower Bounds for Shellsort (PDF). Annual Symposium on Foundations of Computer Science (FOCS 1992). Vol. 33. Pittsburgh, United States. pp. 226–235. CiteSeerX 10.1.1.43.1393. doi:10.1109/SFCS.1992.267769. ISBN 978-0-8186-2900-6.

- Plaxton, C. Greg; Suel, Torsten (May 1997). "Lower Bounds for Shellsort" (PDF). Journal of Algorithms. 23 (2): 221–240. CiteSeerX 10.1.1.460.2429. doi:10.1006/jagm.1996.0825.

- Cypher, Robert (1993). "A Lower Bound on the Size of Shellsort Sorting Networks". SIAM Journal on Computing. 22: 62–71.

- Novoa, Manuel III. "libc/stdlib/stdlib.c". Retrieved 29 October 2014.

- "kernel/groups.c". Retrieved 5 May 2012.

- Julian Seward. "bzip2/blocksort.c". Retrieved 30 March 2011.

Bibliography

- Knuth, Donald E. (1997). "Shell's method". The Art of Computer Programming. Volume 3: Sorting and Searching (2nd ed.). Reading, Massachusetts: Addison-Wesley. pp. 83–95. ISBN 978-0-201-89685-5.

- Analysis of Shellsort and Related Algorithms, Robert Sedgewick, Fourth European Symposium on Algorithms, Barcelona, September 1996.

External links

- Animated Sorting Algorithms: Shell Sort at the Wayback Machine (archived 10 March 2015) – graphical demonstration

- Shellsort with gaps 5, 3, 1 as a Hungarian folk dance